AIC’s Grays Peak Server with Intel ‘EDSFF’ Ruler SSDs

by Ian Cutress on June 25, 2018 11:00 AM EST- Posted in

- SSDs

- Intel

- Trade Shows

- Enterprise SSDs

- AIC

- Computex 2018

- EDSFF

- 1 PB

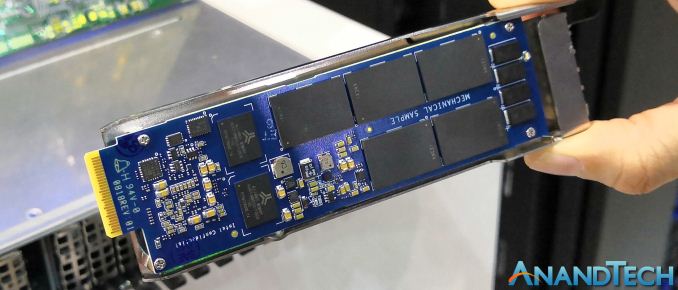

In the consumer space, we get SATA drives, mSATA drives, M.2 drives, and for the high end, U.2 drives. By contrast, the enterprise space is expanding: U.2 is a lot more prevalent than M.2, Samsung’s NF1 drives are now coming to market, and Intel has been discussing its new ‘ruler’ form factor, designed to put more storage into a single server. At Computex, AIC and Intel showcased the new ‘Grays Peak’ FB128-LX platform, designed for high-density flash storage using this new ruler SSD.

The ruler specification is based on Intel’s new ‘Enterprise and Datacenter SSD Form Factor’, known as EDSFF, which gives each drive either a PCIe 3.0 x4 or a PCIe 3.0 x8 connection to the system. The Grays Peak server in this instance uses a dual-socket Xeon combined with 36 of the new ruler SSDs, with the top variants aiming to provide 1 PB of storage in a 1U chassis by taking advantage of increased SSD length and optimal thermal environments. Current capacity puts 576 TB into 1U, which works out to 16 TB per drive across the 36 drives.
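The capacity figures above are simple multiplication, and a minimal sketch makes them easy to re-run for other configurations. The function names are hypothetical, and the per-drive figure for 1 PB assumes decimal units (1 PB = 1000 TB) and the same 36-drive count, neither of which the article states for the top variants.

```python
def chassis_capacity_tb(drive_count: int, tb_per_drive: float) -> float:
    """Total raw capacity of the chassis in TB."""
    return drive_count * tb_per_drive


def tb_per_drive_for_target(target_tb: float, drive_count: int) -> float:
    """Per-drive capacity needed to reach a target total capacity."""
    return target_tb / drive_count


if __name__ == "__main__":
    # Figures from the article: 36 ruler SSDs at 16 TB each.
    print(chassis_capacity_tb(36, 16))

    # Assumption: 1 PB = 1000 TB, still spread over 36 drives.
    print(round(tb_per_drive_for_target(1000, 36), 2))
```

Under those assumptions, reaching 1 PB would need drives of roughly 28 TB or more, which is consistent with the article's point that the top variants rely on a longer SSD.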

Having 36 drives, even with an x4 connection each (as on the mechanical sample on display), equates to 36 x 4 = 144 PCIe lanes, more than a dual-socket server can supply, so a pair of PLX 8000-series PCIe switches sits between the CPUs and the drives. The demo PCB above shows the bump layouts for them, and we confirmed that the system is using 8000-series and not the newer 9000-series switches. The number of PCIe lanes from each CPU will be even more important in the future as the drives move up to x8 connections. AIC also states that the drives are hot-swappable.
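To put rough numbers on the lane shortfall, here is a small sketch. The drive count and link widths come from the article; the figure of 48 PCIe lanes per CPU is an assumption for illustration only, since the article does not say which Xeon is used or how many of its lanes are routed to the switches. The function names are hypothetical.

```python
# Assumption (not from the article): 48 PCIe 3.0 lanes per CPU socket.
ASSUMED_LANES_PER_CPU = 48
SOCKETS = 2


def lanes_required(drive_count: int, lanes_per_drive: int) -> int:
    """Downstream lanes needed if every drive had a dedicated link."""
    return drive_count * lanes_per_drive


def oversubscription(required: int, available: int) -> float:
    """Ratio of drive-side lanes to CPU-side lanes; above 1.0 needs a switch."""
    return required / available


if __name__ == "__main__":
    available = ASSUMED_LANES_PER_CPU * SOCKETS
    for width in (4, 8):
        required = lanes_required(36, width)
        ratio = oversubscription(required, available)
        print(f"x{width}: {required} lanes needed, "
              f"{available} assumed available, ratio {ratio:.2f}")
```

Even if every assumed CPU lane went to storage, the x4 case would be oversubscribed and the x8 case doubly so, which is why the switches are needed. In practice some lanes would also be reserved for networking and other devices, so the real ratio would be higher.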

We expect that Intel will pair with other OEMs for other Grays Peak type platforms in the near future as it attempts to expand its new form factor in enterprise systems.


7 Comments


RU482 - Monday, June 25, 2018 - link

Are EDSFF (Intel) and NF1/NGSFF (Samsung) competing form factors for this type of server storage? From the pictures, they are physically different, with the Samsung solution being more M.2 compatible with the connector interface centered on the PCB, while the Intel solution seems more "designed for 1U servers" and associated thermal challenges, but also has an offset connector. The confusing part (for me at least) is that both companies are listed as EDSFF consortium members... so, hey, why not pick a standard form factor and then produce the product?

Kristian Vättö - Monday, June 25, 2018 - link

Ruler and NF1 are competing standards. Ruler is being standardised at SNIA, whereas NF1 is at JEDEC. Both are designed for 1U servers and enable max 576TB in 1U using 16TB SSDs (Intel's "long" ruler could enable twice that, if/when it comes to market). NF1 uses the M.2 connector to lower enabling cost, while ruler uses a new connector, which is a bit more versatile (supports up to x8). Samsung is a member of SNIA and the EDSFF board, but that doesn't mean that Samsung is a supporter of the ruler spec. Similarly, Intel is a JEDEC member but they are not supporting NF1.

The_Assimilator - Tuesday, June 26, 2018 - link

tl;dr Ruler will win because of its higher bandwidth.

close - Tuesday, June 26, 2018 - link

Firewire is shedding a tear reading your comment :).

The_Assimilator - Tuesday, June 26, 2018 - link

Firewire's mistake was that it tried to compete in the consumer market, where cheap always wins over fast - hence USB. In the server market, speed and form factor are worth more, ergo Ruler should win - assuming Intel isn't stupid enough to tie it to only their chipsets, or charge a licensing fee for using it, or some such other greedy idiocy.

Kristian Vättö - Wednesday, June 27, 2018 - link

Bandwidth of a single drive is meaningless when you have 36 drives sitting behind two CPUs that don't even have 4 lanes to give to each drive. Let alone trying to get all that bandwidth through a network... Even if that problem is solved, next you would run into thermal issues because x8 means twice the power and 36 drives pulling over 20W each is not realistic to cool with the limited airflow between the drives.

tl;dr Ruler merely adds complexity to server designs and supply chain management by introducing too many options with very limited viability in the real world.

stifan - Tuesday, July 3, 2018 - link

Thanks for this great post. This is really helpful for me. Also, see https://epsxeapp.wordpress.com/