The AMD Trinity Review (A10-4600M): A New Hope

by Jarred Walton on May 15, 2012 12:00 AM ESTIntroduction and Piledriver Overview

Brazos and Llano were both immensely successful parts for AMD. The company sold tons despite not delivering leading x86 performance. The success of these two APUs gave AMD a lot of internal confidence that it was possible to build something that didn't prioritize x86 performance but rather delivered a good balance of CPU and GPU performance.

AMD's commitment to the world was that we'd see annual updates to all of its product lines. Llano debuted last June, and today AMD gives us its successor: Trinity.

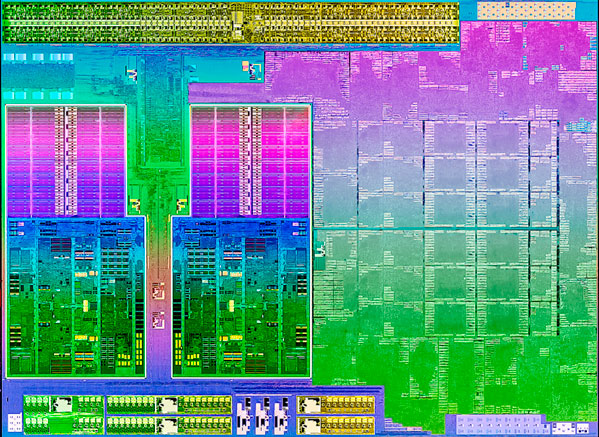

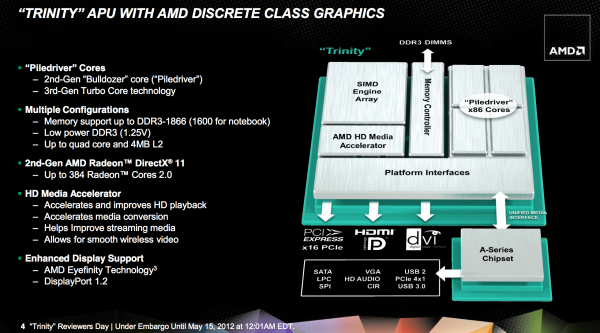

At a high level, Trinity combines 2-4 Piledriver x86 cores (1-2 Piledriver modules) with up to 384 VLIW4 Northern Islands generation Radeon cores on a single 32nm SOI die. The result is a 1.303B transistor chip (up from 1.178B in Llano) that measures 246mm^2 (compared to 228mm^2 in Llano).

| Trinity Physical Comparison | |||||

| Manufacturing Process | Die Size | Transistor Count | |||

| AMD Llano | 32nm | 228mm2 | 1.178B | ||

| AMD Trinity | 32nm | 246mm2 | 1.303B | ||

| Intel Sandy Bridge (4C) | 32nm | 216mm2 | 1.16B | ||

| Intel Ivy Bridge (4C) | 22nm | 160mm2 | 1.4B | ||

Without a change in manufacturing process, AMD is faced with the tough job of increasing performance without ballooning die size. Die size has only gone up by around 7%, but both CPU and GPU performance see double-digit increases over Llano. Power consumption is also improved over Llano, making Trinity a win across the board for AMD compared to its predecessor. If you liked Llano, you'll love Trinity.

The problem is what happens when you step outside of AMD's world. Llano had a difficult time competing with Sandy Bridge outside of GPU workloads. AMD's hope with Trinity is that its hardware improvements combined with more available OpenCL accelerated software will improve its standing vs. Ivy Bridge.

Piledriver: Bulldozer Tuned

While Llano featured as many as four 32nm x86 Stars cores, Trinity features up to two Piledriver modules. Given the not-so-great reception of Bulldozer late last year, we were worried about how a Bulldozer derivative would stack up in Trinity. I'm happy to say that Piledriver is a step forward from the CPU cores used in Llano, largely thanks to a bunch of clean up work from the Bulldozer foundation.

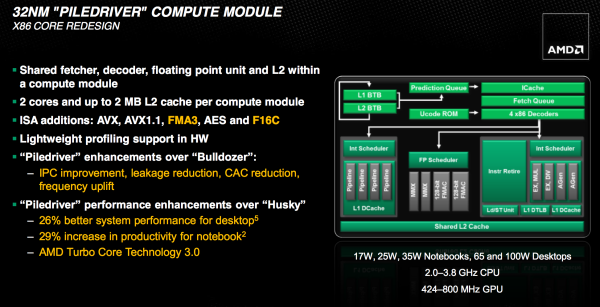

Piledriver picks up where Bulldozer left off. Its fundamental architecture remains completely unchanged, but rather improved in all areas. Piledriver is very much a second pass on the Bulldozer architecture, tidying everything up, capitalizing on low hanging fruit and significantly improving power efficiency. If you were hoping for an architectural reset with Piledriver, you will be disappointed. AMD is committed to Bulldozer and that's quite obvious if you look at Piledriver's high level block diagram:

Each Piledriver module is the same 2+1 INT/FP combination that we saw in Bulldozer. You get two integer cores, each with their own schedulers, L1 data caches, and execution units. Between the two is a shared floating point core that can handle instructions from one of two threads at a time. The single FP core shares the data caches of the dual integer cores.

Each module appears to the OS as two cores, however you don't have as many resources as you would from two traditional AMD cores. This table from our Bulldozer review highlights part of problem when looking at the front end:

| Front End Comparison | |||||

| AMD Phenom II | AMD FX | Intel Core i7 | |||

| Instruction Decode Width | 3-wide | 4-wide | 4-wide | ||

| Single Core Peak Decode Rate | 3 instructions | 4 instructions | 4 instructions | ||

| Dual Core Peak Decode Rate | 6 instructions | 4 instructions | 8 instructions | ||

| Quad Core Peak Decode Rate | 12 instructions | 8 instructions | 16 instructions | ||

| Six/Eight Core Peak Decode Rate | 18 instructions (6C) | 16 instructions | 24 instructions (6C) | ||

It's rare that you get anywhere near peak hardware utilization, so don't be too put off by these deltas, but it is a tradeoff that AMD made throughout Bulldozer. In general, AMD opted for better utilization of fewer resources (partially through increasing some data structures and other elements that feed execution units) vs. simply throwing more transistors at the problem. AMD also opted to reduce the ratio of integer to FP resources within the x86 portion of its architecture, clearly to support a move to the APU world where the GPU can be a provider of a significant amount of FP support. Piledriver doesn't fundamentally change any of these balances. The pipeline depth remains unchanged, as does the focus on pursuing higher frequencies.

Fundamental to Piledriver is a significant switch in the type of flip-flops used throughout the design. Flip-flops, or flops as they are commonly called, are simple pieces of logic that store some form of data or state. In a microprocessor they can be found in many places, including the start and end of a pipeline stage. Work is done prior to a flop and committed at the flop or array of flops. The output of these flops becomes the input to the next array of logic. Normally flops are hard edge elements—data is latched at the rising edge of the clock.

In very high frequency designs however, there can be a considerable amount of variability or jitter in the clock. You either have to spend a lot of time ensuring that your design can account for this jitter, or you can incorporate logic that's more tolerant of jitter. The former requires more effort, while the latter burns more power. Bulldozer opted for the latter.

In order to get Bulldozer to market as quickly as possible, after far too many delays, AMD opted to use soft edge flops quite often in the design. Soft edge flops are the opposite of their harder counterparts; they are designed to allow the clock signal to spill over the clock edge while still functioning. Piledriver on the other hand was the result of a systematic effort to swap in smaller, hard edge flops where there was timing margin in the design. The result is a tangible reduction in power consumption. Across the board there's a 10% reduction in dynamic power consumption compared to Bulldozer, and some workloads are apparently even pushing a 20% reduction in active power. Given Piledriver's role in Trinity, as a mostly mobile-focused product, this power reduction was well worth the effort.

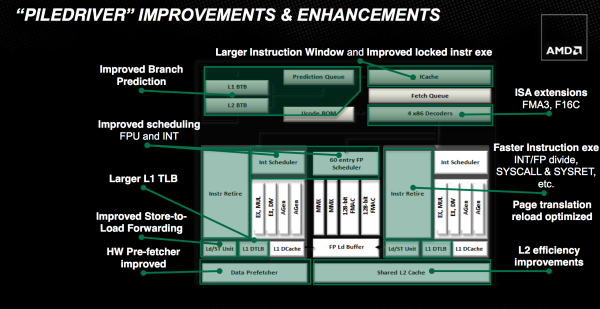

At the front end, AMD put in additional work to improve IPC. The schedulers are now more aggressive about freeing up tokens. Similar to the soft vs. hard flip flop debate, it's always easier to be conservative when you retire an instruction from a queue. It eases verification as you don't have to be as concerned about conditions where you might accidentally overwrite an instruction too early. With the major effort of getting a brand new architecture off of the ground behind them, Piledriver's engineers could focus on greater refinement in the schedulers. The structures didn't get any bigger; AMD just now makes better use of them.

The execution units are also a bit beefier in Piledriver, but not by much. AMD claims significant improvements in floating point and integer divides, calls and returns. For client workloads these gains show minimal (sub 1%) improvements.

Prefetching and branch prediction are both significantly improved with Piledriver. Bulldozer did a simple sequential prefetch, while Piledriver can prefetch variable lengths of data and across page boundaries in the L1 (mainly a server workload benefit). In Bulldozer, if prefetched data wasn't used (incorrectly prefetched) it would clog up the cache as it would come in as the most recently accessed data. However if prefetched data isn't immediately used, it's likely it will never be used. Piledriver now immediately tags unused prefetched data as least-recently-used, allowing the cache controller to quickly evict it if the prefetch was incorrect.

Another change is that Piledriver includes a perceptron branch predictor that supplements the primary branch predictor in Bulldozer. The perceptron algorithm is a history based predictor that's better suited for predicting certain branches. It works in parallel with the old predictor and simply tags branches that it is known to be good at predicting. If the old predictor and the perceptron predictor disagree on a tagged branch, the perceptron's path is taken. Improving branch prediction accuracy is a challenge, but it's necessary in highly pipelined designs. These sorts of secondary predictors are a must as there's no one-size-fits-all when it comes to branch prediction.

Finally, Piledriver also adds new instructions to better align its ISA with Haswell: FMA3 and F16C.

271 Comments

View All Comments

codedivine - Tuesday, May 15, 2012 - link

Does the GPU support fp64?JarredWalton - Tuesday, May 15, 2012 - link

I would assume so, though like most consumer GPUs it's going to be 1/16 FP32 performance or something similarly dire. If you have a quick test I could run, I'll be happy to report back.codedivine - Tuesday, May 15, 2012 - link

Thanks. Can you post the relevant output from "clinfo.exe"? This utility should either be part of new Catalyst releases, or you can alternately install AMD's APP SDK and it is a prebuilt utility. This will list a lot things, including extensions supported in OpenCL. It will show two devices available: one for CPU and one for GPU. If the GPU side lists cl_khr_fp64 (or the less-compliant cl_amd_fp64) then it supports FP64 in OpenCL.Not sure about how to test the rate.

JarredWalton - Tuesday, May 15, 2012 - link

Both cl_khr_fp64 and cl_amd_fp64 are listed as supported on both the CPU and GPU. Full CLinfo output is available here:http://images.anandtech.com/reviews/mobile/Trinity...

codedivine - Tuesday, May 15, 2012 - link

That is great! Your help is greatly appreciated!So I guess I am buying a Trinity then, as it will simplify my OpenCL development workflow.

Sidenote: I have some OpenCL code under development (as part of my grad research) that might be useful for you for benchmarking purposes for as well. Will get back to you about that in a few weeks.

JarredWalton - Tuesday, May 15, 2012 - link

Cool -- I'd love to see more OpenCL benchmarks, especially if they're actually meaningful to other people!GullLars - Tuesday, May 15, 2012 - link

This may not be relevant, but i think the next generation APU with GCN graphics architecture is where we will really start to see a benefit of using GPGPU on mainstream integrated GPUs.Various GPGPU benchmarks show a signifficant increase going from VLIW4 to GCN.

I hope to get into openCL, GPGPU and parallel programming too at a later point. Currently i'm styding a BS in Computer Engineering; Embedded Systems. I love it, as it gives insight into both software and hardware at all levles from logic gates to complete systems.

Brutalizer - Tuesday, July 10, 2012 - link

WOW! Does the Trinity only have two cores? That is brutal!AMD's version of hyperthreading is a piledriver core: it has duplicated several components which is much better than Intel hyperthreading. So one piledriver core, corresponds to one Intel core with hyper threading.

So, what Anandtech is actually testing, is four Intel core cpus, vs two AMD core cpus. There is no surprise that four cores beats two cores, but the cool thing is that AMD two cores does very well compared to four Intel cores!

If I was AMD, I would say that the Trinity only has two cores, and still it gives a four core Intel cpu a match! That is much better marketing than claiming Trinity is four cores (which is not) and get beaten by Intel four core cpus.

moozoo - Tuesday, May 15, 2012 - link

Thanks!I too was after the CLInfo for trinity to find out its fp64 support.

MySchizoBuddy - Tuesday, May 15, 2012 - link

so it only supports OpenCL 1.1 not the newer 1.2