G.Skill Phoenix Blade (480GB) PCIe SSD Review

by Kristian Vättö on December 12, 2014 9:02 AM EST

AnandTech Storage Bench 2011

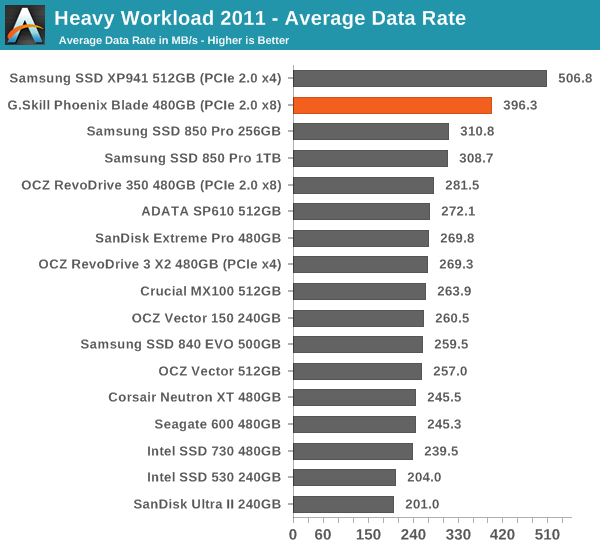

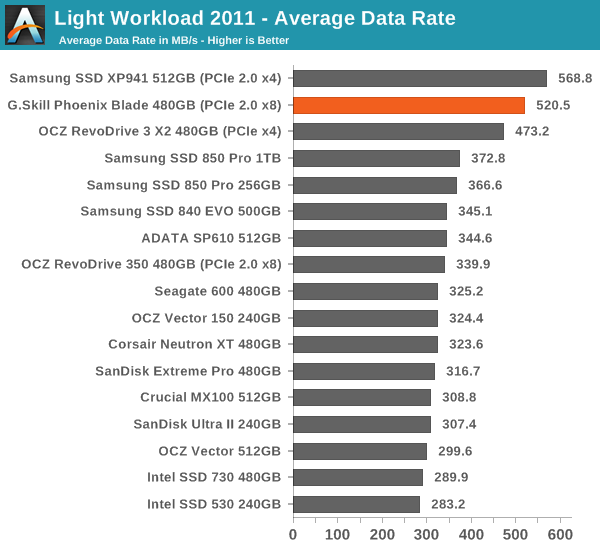

Back in 2011 (which seems like so long ago now!), we introduced our AnandTech Storage Bench, a suite of benchmarks that took traces of real OS/application usage and played them back in a repeatable manner. The MOASB, officially called AnandTech Storage Bench 2011 – Heavy Workload, mainly focuses on peak IO performance and basic garbage collection routines. There is a lot of downloading and application installing that happens during the course of this test. Our thinking was that it's during application installs, file copies, downloading and multitasking with all of this that you can really notice performance differences between drives. The full description of the Heavy test can be found here, while the Light workload details are here.

The Phoenix Blade continues to be strong in our 2011 Storage Benches, although the XP941 reclaims its crown as the fastest client drive. I'm guessing the XP941 is more optimized for typical client workloads, which the 2011 suites represent, whereas the 2013 workload is much, much heavier and only applies to users with very heavy IO workloads.

62 Comments

Duncan Macdonald - Friday, December 12, 2014 - link

How does this compare to four 240GB SandForce SSDs in software RAID 0 using the Intel chipset SATA interfaces?

Kristian Vättö - Friday, December 12, 2014 - link

Intel chipset RAID tends not to scale that well with more than two drives. I have to admit that I haven't tested four drives (or any RAID 0 in a while) to fully determine the performance gains, but it's safe to say that the Phoenix Blade is better than any Intel RAID solution since it's more optimized (specific hardware and custom firmware).

nathanddrews - Friday, December 12, 2014 - link

Sounds like you just set yourself up for a Capsule Review.

Havor - Saturday, December 13, 2014 - link

I don't get the high praise for this drive. Sure, it has value for people who need high sequential speed, or for people hosting a database on a budget that gets tons of requests and can utilize high QD. Everyone else is better off with a SATA SSD that performs much better at a QD of 2 or less.

Desktop users almost never go over QD2 in real-world use, so they would be much better off with an 8x0 EVO or similar, both performance-wise and price-wise.

I am actually one of the few who could use the drive if I had space for it (I'm running quad SLI). I use a RAM drive, and a script copies programs and games, stored as RAR files, from a RAID 0 set of SSDs to the RAM disk, so high sequential speed is king for me.

But I count myself among the 0.1% of nerds who do things like that because I like doing stuff like that. Any other sane person would just use an SSD to run their programs off.

Integr8d - Sunday, December 14, 2014 - link

The typical self-centered response: "This product doesn't apply to me. So I don't understand why anyone else likes it or why it should be reviewed," followed by, "Not that my system specs have ANYTHING to do with this, but here they are... 16 video cards, raid-0 with 16 ssd's, 64TB ram, blah blah blah..." They literally just look for an excuse to brag...

It's like someone typing a response to a review of Crest toothpaste. "I don't really know anything about that toothpaste. But I saw some, the other day, when I went to the store in my 2014 Dodge Charger quad-Hemi supercharged with Borla exhaust, 20" BBS with racing slicks, HID headlights, custom sound system, swimming pool in the trunk and with wings on the side so I can fly around."

It's comical.

dennphill - Monday, December 15, 2014 - link

Thanks, Integr8d, you put a smile on my face this morning! My feelings exactly.

pandemonium - Tuesday, December 16, 2014 - link

Hah. Nicely done, Integr8d.

alacard - Friday, December 12, 2014 - link

The DMI interface between the chipset and the processor maxes out at about 1800~1850MB/s, and this bandwidth has to be split between all the devices connected to the PCH, which also incorporates an x8 PCIe 2.0 link. Simply put, there's not enough bandwidth to go around with more than two drives attached to the chipset in RAID, not to mention that the scaling beyond two drives is fairly bad in general through the PCH even when nothing else is going on. And to top it all off, 4K performance is usually slightly slower in RAID than on a single SSD (i.e. it doesn't scale at all).

I know Tom's Hardware had an article or two on this subject if you want to google it.
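The bandwidth argument above can be checked with a rough back-of-the-envelope sketch. The DMI ceiling is the roughly 1800MB/s figure quoted in the comment; the per-drive sequential speed of 550MB/s is an assumption typical of SATA 6Gbps SSDs and does not come from the thread, and the function names are purely illustrative. The model is idealized: it ignores RAID overhead and any other PCH traffic sharing the link, so real scaling would likely be worse.

```python
# Figure quoted in the comment above: usable DMI bandwidth of about 1800 MB/s.
DMI_MBPS = 1800.0
# ASSUMPTION (not from the thread): one SATA 6Gbps SSD peaks near 550 MB/s sequential.
SSD_SEQ_MBPS = 550.0


def raid0_sequential_mbps(drives: int,
                          per_drive: float = SSD_SEQ_MBPS,
                          link_cap: float = DMI_MBPS) -> float:
    """Ideal RAID 0 sequential throughput, capped by the shared chipset link."""
    return min(drives * per_drive, link_cap)


def scaling_efficiency(drives: int,
                       per_drive: float = SSD_SEQ_MBPS,
                       link_cap: float = DMI_MBPS) -> float:
    """Fraction of perfect linear scaling that survives the link cap."""
    ideal = drives * per_drive
    return raid0_sequential_mbps(drives, per_drive, link_cap) / ideal


if __name__ == "__main__":
    for n in range(1, 5):
        total = raid0_sequential_mbps(n)
        eff = scaling_efficiency(n)
        print(f"{n} drive(s): {total:.0f} MB/s, {eff:.0%} of linear scaling")
```

Under these assumptions, three drives already come close to filling the link, and a fourth drive adds little sequential throughput because the total is clamped at the DMI ceiling.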

personne - Friday, December 12, 2014 - link

It takes three SSDs to saturate DMI. And 4k writes are nearly double on long queue depths. So you get more capacity, higher cost, and much of the performance benefit for many operations. Certainly tons more than a single SSD at a linear cost. If you research your statements.

alacard - Friday, December 12, 2014 - link

To your first point about saturating DMI, we're in agreement. Reread what I said.

To your second point about 4K, you are correct, but I've personally had three separate sets of RAID 0 on my performance machine (2 Vertex 3s, 2 Vertex 4s, 2 Vectors), and I can tell you that those higher 4K results were not impactful in any way when compared to a single SSD. (Programs didn't load faster, for instance.)

http://www.tomshardware.com/reviews/ssd-raid-bench...

That leaves me curious as to what you're doing that allows you to get the benefits of high queue depth RAID 0. What's your setup, and what programs do you run? I ask because for me it turned out not to be worth the bother, and this is coming from someone who badly wanted it to be. In the end, the higher low queue depth 4K performance of one SSD was a better option for me, so I switched back.

http://www.hardwaresecrets.com/article/Some-though...