The Intel Core i7-12700K and Core i5-12600K Review: High Performance For the Mid-Range

by Gavin Bonshor on March 29, 2022 8:00 AM EST

CPU Benchmark Performance: Simulation And Rendering

Simulation and Science overlap heavily in the benchmarking world, so we separate them into two segments based mostly on the utility of the resulting data. The benchmarks that fall under Science have a distinct use for the data they output, whereas those in our Simulation section act more like synthetics but at some level are still trying to simulate a given environment.

We are using DDR5 memory at the following settings:

- DDR5-4800(B) CL40

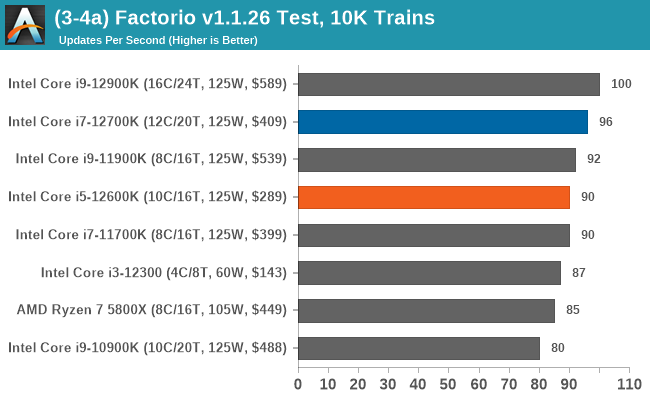

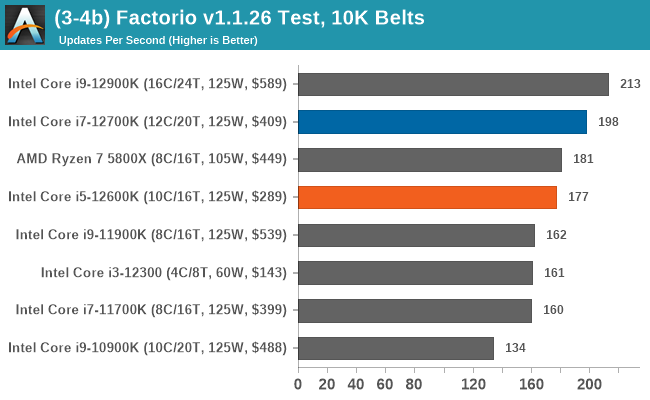

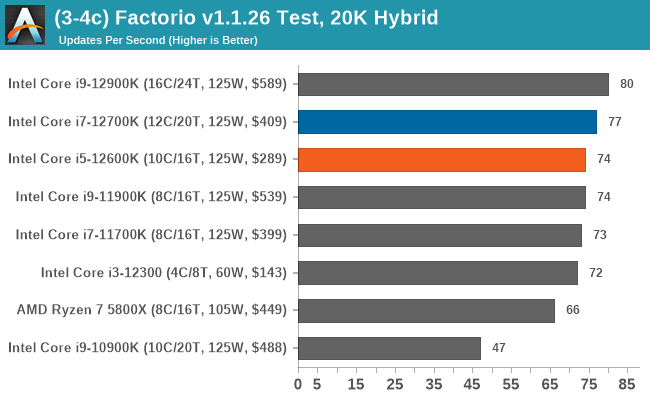

Simulation

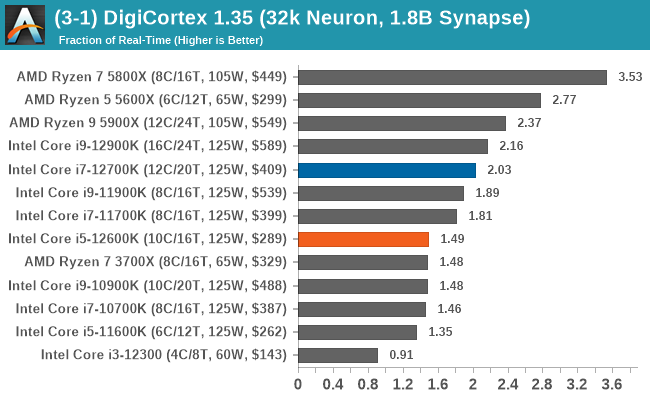

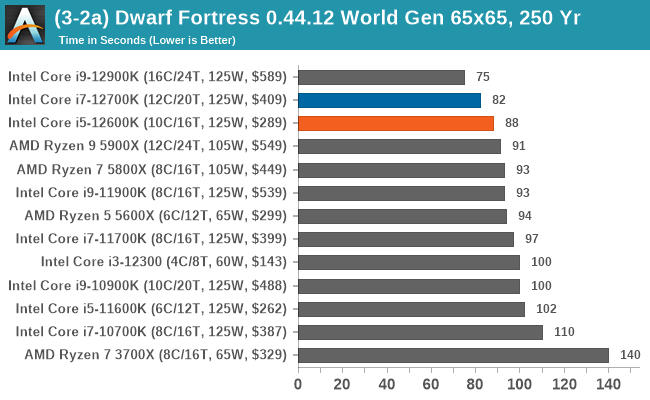

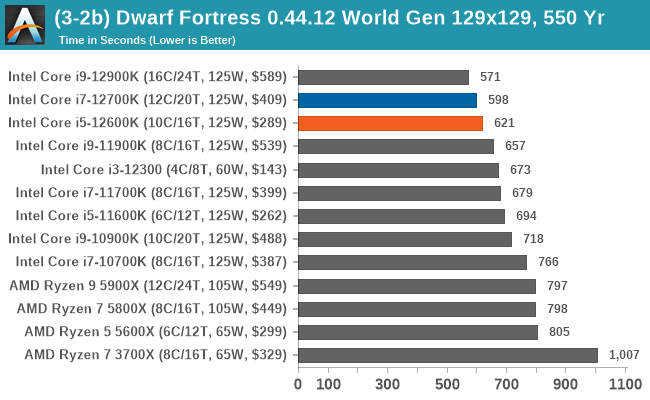

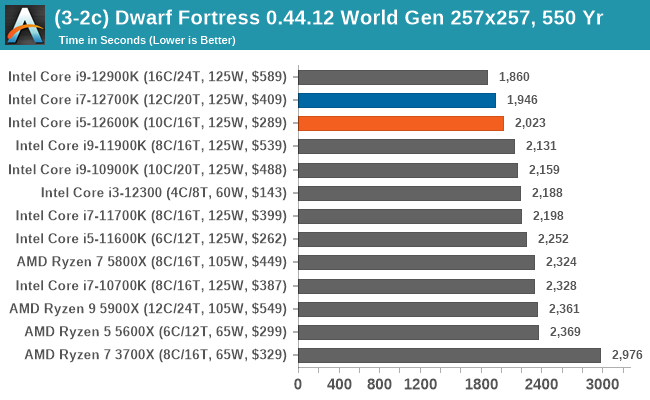

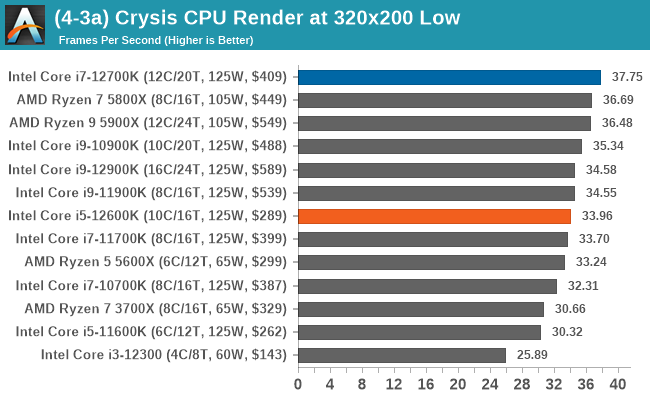

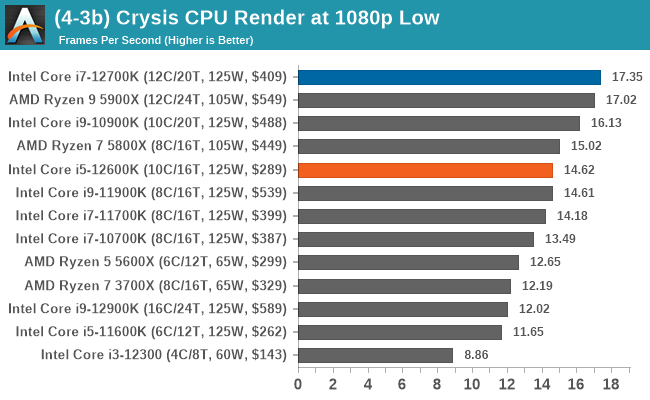

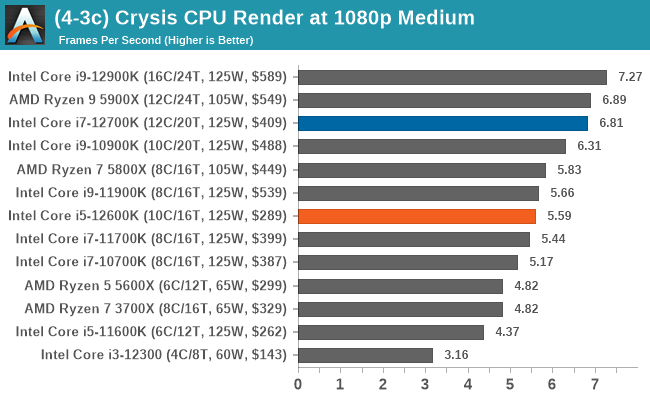

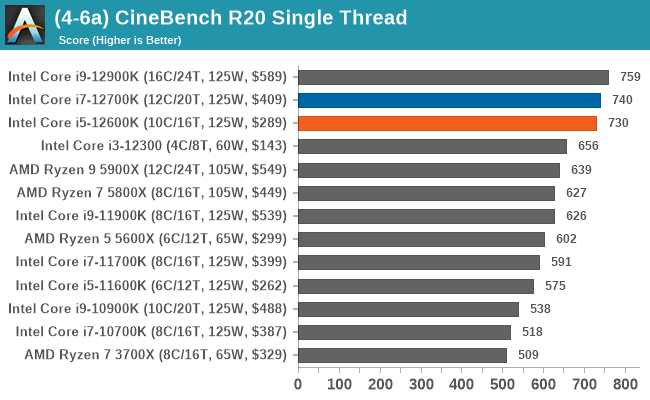

When it comes to simulation, the combination of high core frequency and better IPC performance gives Intel's 12th Gen Core series the advantage here in most situations.

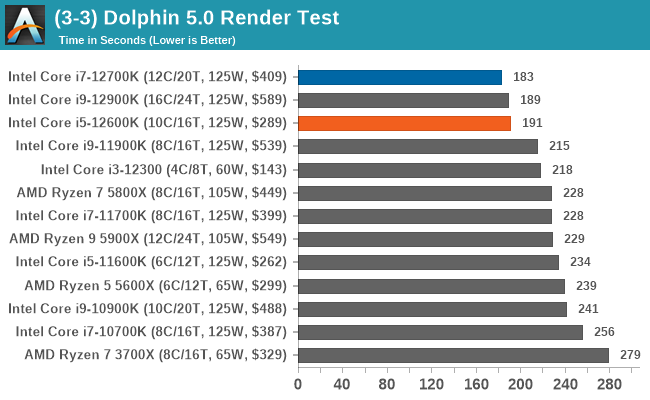

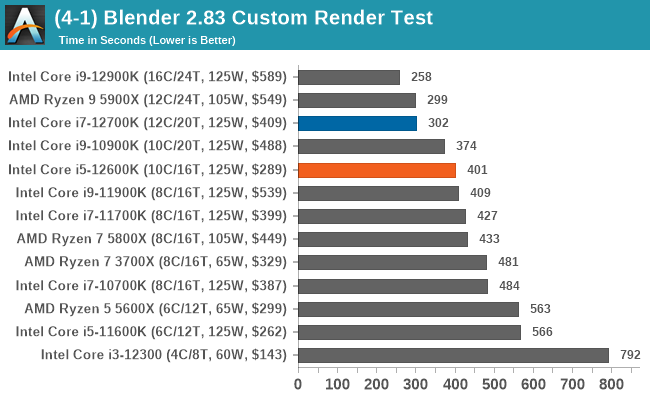

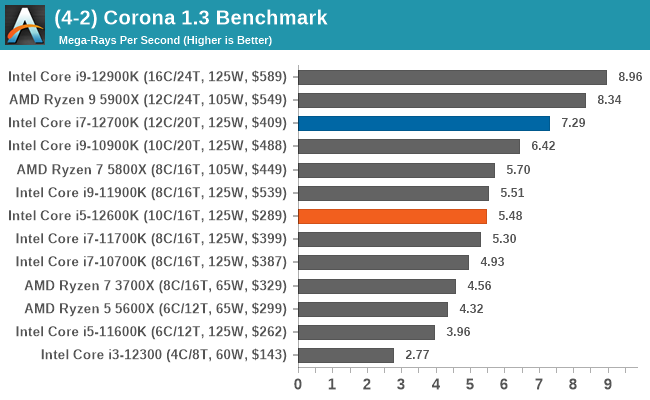

Rendering

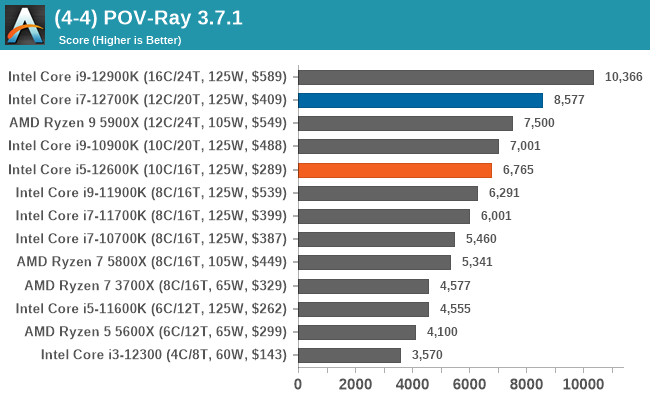

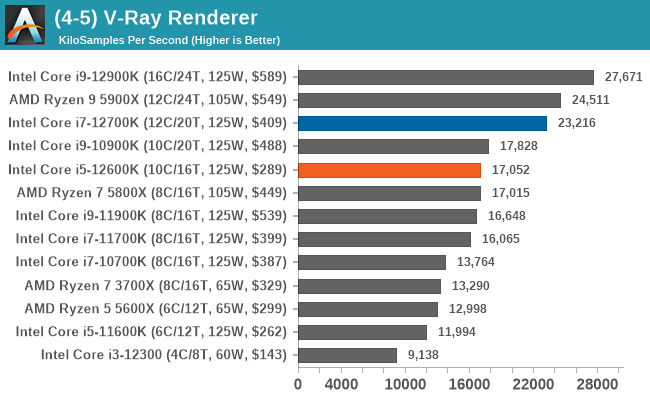

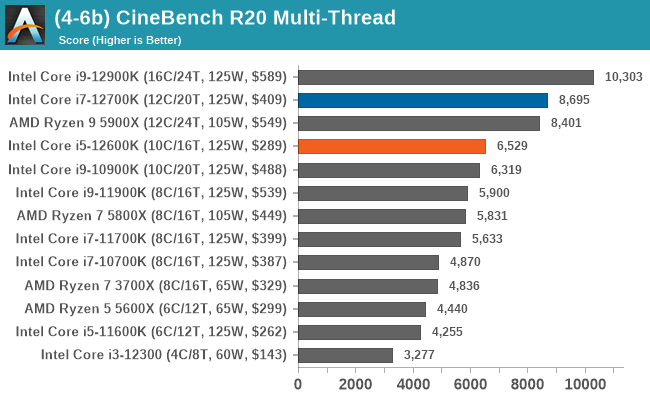

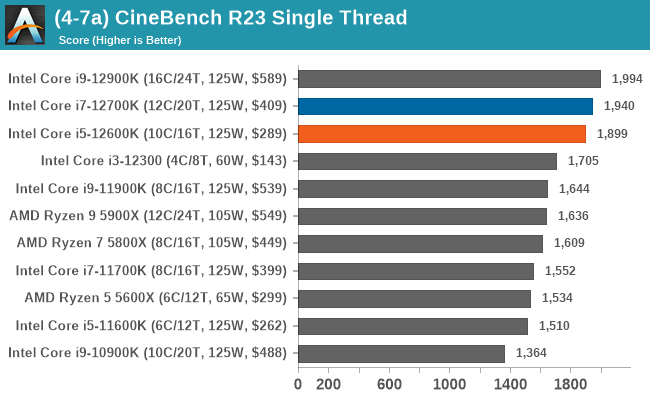

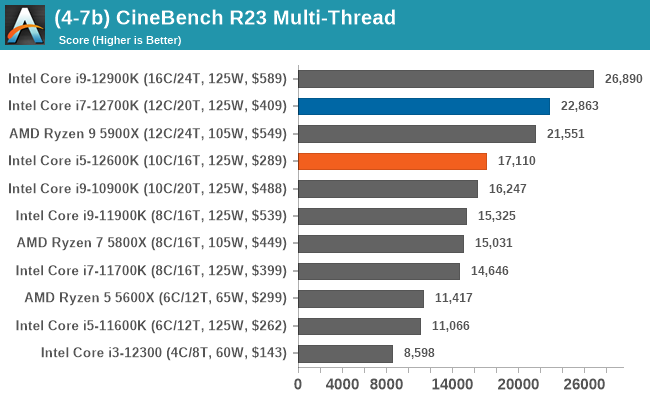

Looking at performance in the rendering section of our test suite, both the Core i7 and Core i5 performed creditably. The biggest factor to consider here is that a higher core and thread count, combined with higher IPC, translates into more rendering power.

196 Comments

Gondalf - Tuesday, March 29, 2022 - link

Beh! in short words Intel have the best 7nm (or 7nm equivalent) desktop cpu, this is pretty evident.

Same applies to Mobile parts obviously. AMD approach look like rudimentary and silicon hungry versus Intel big-little silicon.

We'll see on finer nodes next year or so. Still i have the idea that Intel will never be anymore behind AMD like in past years.

eek2121 - Tuesday, March 29, 2022 - link

Keep in mind that Zen 4 is coming later this year and will make Alder Lake 2nd again. The flip/flop continues.

From a technology standpoint AMD has the best setup. They can just add chiplets or cores to increase multicore performance.

They have 90-95% of the performance for less than half, or in some cases, 1/3rd the power.

Stop trolling. It makes you look immature and childish.

DannyH246 - Tuesday, March 29, 2022 - link

Yup. Completely agree, Intel are finally competitive with what? A 2yr old AMD product, but at triple the power. Yet some of the posters on here are acting like Intel are saviors who have finally returned to save us all from our PC ills. They forget that Intel literally screwed us all for a decade with high prices, feature lock outs, core counts limited to 4, new motherboards required every year, anti competitive behavior, and anti consumer practices. Why anyone would want Intel to return to the dominant position that they used to occupy is beyond me.

Khanan - Tuesday, March 29, 2022 - link

Fully agree.

lmcd - Tuesday, March 29, 2022 - link

Pretty delusional here -- AMD's whole plan with Bulldozer was to create vendor lock-in where you used an AMD GPU to accelerate floating point operations on an AMD CPU. Consumer-friendly to claim 4 cores are really 8? Thuban outperformed Piledriver until its instruction set finally was too limited years later. AMD just wanted a marketing point.

Also, how are you playing both narratives at once? Intel both wasn't willing to deliver more than 4 cores and has too high of power consumption in its parts with more than 4 cores? Which is it? Because it quite literally cannot be both.

Samus - Wednesday, March 30, 2022 - link

OMG people are still going on about the shared FPU BS.

It HAD to be that way. The entire modular architecture was built around sharing L2 and FPU's. There was nothing inherently wrong with this approach, except nobody optimized for it because it had never been done, so people like you freaked out. If you wanted your precious FPU's for each integer unit, buy something else...

Otritus - Wednesday, March 30, 2022 - link

It did NOT have to be that way. AMD intentionally chose to share the fpu and cache creating a cpu that was abysmal in performance and efficiency, and deceptive in marketing. Thuban was a better design, so updating the instruction set would have made a better product than bulldozer.

I along with most people chose to buy something else (Sandybridge to Sky Lake). The lack of a competitive offering from AMD created the pseudo monopoly from Intel, and many people rightfully complained about the lack of innovation from Intel.

Also there is no optimizing for hardware that isn’t physically present. 4 fpus means 4 cpu cores when doing floating point tasks, which is highly consequential for gaming and web browsing.

There is no arguing for AMD’s construction equipment family. It sucked, just like Intel’s monopolistic dominance and Rocket Lake.

AshlayW - Wednesday, March 30, 2022 - link

FYI, most instructions in day to day workloads are integer, not floating point. Your argument that Floating point is "highly consequential" for gaming and web browsing is false.

mode_13h - Thursday, March 31, 2022 - link

> most instructions in day to day workloads are integer, not floating point.

Sure. Compiling code, web browsers, most javascript, typical database queries... agreed.

> Your argument that Floating point is "highly consequential" for gaming

LOL, wut? Gaming is *so* dominated by floating point that GPUs have long relegated integer arithmetic performance to little more than their fp64 throughput!

Geometry, physics, AI, ...all floating point. Even a lot of sound processing has transitioned over to floating-point! I'm struggling to think of much heavy-lifting typical games do that's *not* floating point! I mean, games that are CPU-bound in the first place - not like retro games, where the CPU is basically a non-issue.

Dolda2000 - Saturday, April 2, 2022 - link

While games certainly do use floating-point to an extent where it matters, as a (small-time) gamedev myself, I'd certainly argue that games are primarily integer-dominated. The parts that don't run on the GPU are more concerned with managing discrete states, calculating branch conditions, managing GPU memory resources, allocating and initializing objects, just managing general data structures, and so on and so forth.

Again, I'm not denying that there are parts of games that are more FP-heavy, but looking at my own decompiled code, even in things like collision checking and whatnot, even in the FP-heavy leaf functions, the FP instructions are intermingled with a comparable amount of integer instructions just for, you know, load/store of FP data, address generation, array indexing, looping, &c. And the more you zoom out of those "math-heavy" leaf functions, the lesser the FP mix. FP performance definitely matters, but it's hardly all that matters, and I'd be highly surprised if the integer side isn't the critical path most of the time.