The GIGABYTE MZ72-HB0 (Rev 3.0) Motherboard Review: Dual Socket 3rd Gen EPYC

by Gavin Bonshor on August 2, 2021 9:30 AM EST- Posted in

- Motherboards

- AMD

- Gigabyte

- GIGABYTE Server

- Milan

- EPYC 7003

- MZ72-HB0

Visual Inspection

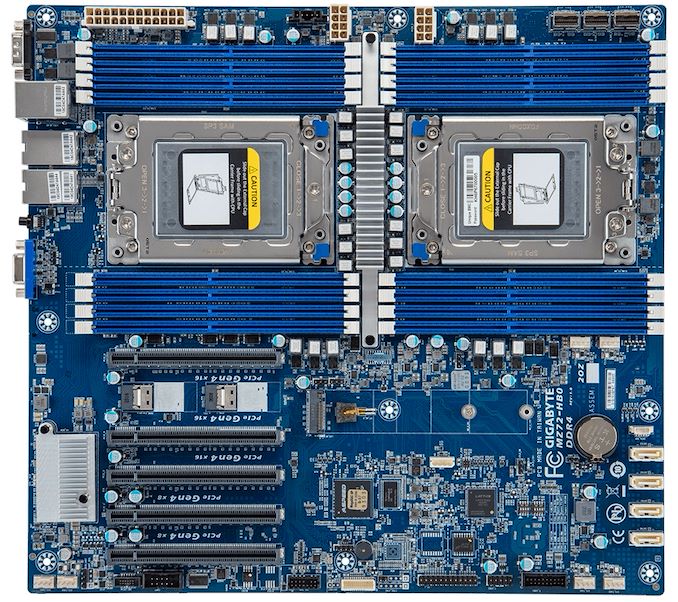

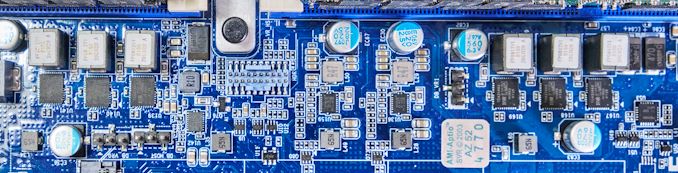

From a design standpoint, the MZ72-HB0 has a typical blue GIGABYTE Server PCB, with blue memory slots, creamy white connectors, and black PCIe slots with metal slot reinforcement. Even though this is a board designed for use in a server chassis, it has an E-ATX sized frame and could be mounted into a regular chassis if a user wishes to do so. The PCB itself is littered with connectivity, including various headers, power connectors, and controllers, with practically all of the PCB playing a vital role in the board's operation. In keeping with the board's function-over-style ethos, there are no flashy RGB LEDs or the like here.

Dominating the top half of the board are two SP3 sockets with support for AMD's 3rd generation EPYC Milan processors. Each SP3 socket can accommodate processors of up to 280 W, including the top SKU, the AMD EPYC 7763 ($7890). Because this is a dual-socket motherboard, it doesn't support the P-series EPYC processors, which are designed for single-socket motherboards only. It's also worth noting that there are two revisions of this model: Rev 1.x, which supports just EPYC 7001 (Naples) and 7002 (Rome) processors, and the one we have on test, Rev 3.0, which supports EPYC 7002 (Rome) and the latest EPYC 7003 (Milan) processors.

Flanking each of the SP3 sockets are eight memory slots, for sixteen memory slots in total with two EPYC processors installed. The MZ72-HB0 supports various types of memory, including RDIMM and LRDIMM up to 128 GB per module, with support for 3DS LRDIMM/RDIMM memory of up to 256 GB per module. This means users can install a maximum of 2 TB of system memory, with memory operating in eight-channel mode per socket when all of the slots are populated. GIGABYTE includes support for up to DDR4-3200.
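As a rough check on those memory figures, the arithmetic can be sketched in a few lines of Python. The slot count, module size, and DDR4-3200 speed come from the paragraph above; the helper names are illustrative, and the 8-bytes-per-transfer value assumes the standard 64-bit DDR4 data bus per channel. The bandwidth number is a theoretical peak, not a measured result.

```python
# Figures from the text above; function and constant names are illustrative.
SOCKETS = 2
SLOTS_PER_SOCKET = 8
MODULE_GB = 128              # largest standard RDIMM/LRDIMM quoted in the text
CHANNELS_PER_SOCKET = 8
DDR4_MT_PER_S = 3200         # DDR4-3200
BYTES_PER_TRANSFER = 8       # assumption: 64-bit data bus per DDR4 channel


def total_capacity_gb(sockets=SOCKETS, slots=SLOTS_PER_SOCKET, module_gb=MODULE_GB):
    """Total installed memory in GB with every slot populated."""
    return sockets * slots * module_gb


def peak_bandwidth_gb_s(channels=CHANNELS_PER_SOCKET, mt_per_s=DDR4_MT_PER_S):
    """Theoretical peak memory bandwidth per socket, in decimal GB/s."""
    return channels * mt_per_s * BYTES_PER_TRANSFER / 1000


if __name__ == "__main__":
    print(total_capacity_gb())      # 2048 GB, i.e. 2 TB
    print(peak_bandwidth_gb_s())    # 204.8 GB/s per socket
```

Sixteen 128 GB modules account for the 2 TB figure, and eight channels of DDR4-3200 work out to a theoretical 204.8 GB/s per socket.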

Focusing on the lower portion of the board, GIGABYTE includes five full-length PCIe 4.0 slots, with the top three operating at PCIe 4.0 x16, and the bottom two operating at PCIe 4.0 x8. Given that this is a dual-socket motherboard, each CPU controls different slots in the following configuration:

- Slot_6 - PCIe 4.0 x16 (CPU 0) - Top slot

- Slot_4 - PCIe 4.0 x16 (CPU 0)

- Slot_3 - PCIe 4.0 x16 (CPU 1)

- Slot_2 - PCIe 4.0 x8 (CPU 0)

- Slot_1 - PCIe 4.0 x8 (CPU 1) - Bottom slot
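The slot-to-CPU mapping above matters when deciding where to place cards, since a device sits closest to the processor that drives its slot. A minimal Python sketch of that mapping follows; the table mirrors the list above, while the function names are hypothetical and only count lanes exposed through the five full-length slots, not the SlimSAS connectors.

```python
from collections import defaultdict

# (slot name, lane width, controlling CPU), top slot first, as listed above.
SLOTS = [
    ("Slot_6", 16, 0),
    ("Slot_4", 16, 0),
    ("Slot_3", 16, 1),
    ("Slot_2", 8, 0),
    ("Slot_1", 8, 1),
]


def lanes_per_cpu(slots=SLOTS):
    """Sum the PCIe slot lanes attached to each CPU."""
    totals = defaultdict(int)
    for _name, width, cpu in slots:
        totals[cpu] += width
    return dict(totals)


def slots_for_cpu(cpu, slots=SLOTS):
    """Return the slot names driven by the given CPU, top to bottom."""
    return [name for name, _width, owner in slots if owner == cpu]


if __name__ == "__main__":
    print(lanes_per_cpu())      # {0: 40, 1: 24}
    print(slots_for_cpu(1))     # ['Slot_3', 'Slot_1']
```

By this count, CPU 0 drives 40 slot lanes and CPU 1 drives 24, so a workload pinned to CPU 1 would likely want its accelerator or NIC in Slot_3 or Slot_1.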

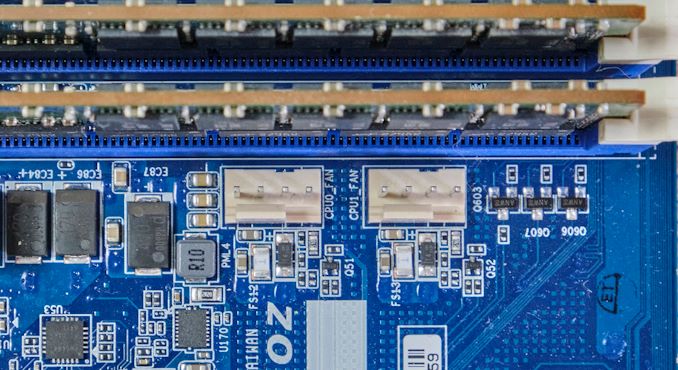

For cooling, GIGABYTE includes a total of six 4-pin headers, with two for CPU fans, and four for chassis fans. The two CPU fan headers are located by the right-hand CPU socket, whereas the rest can be found along the bottom of the PCB.

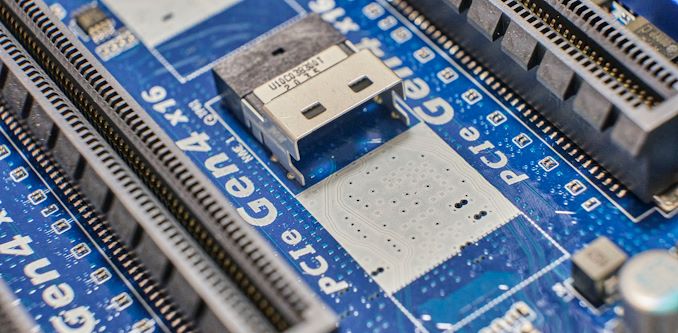

Sandwiched between the top two full-length PCIe slots (Slot_6/Slot_4) are two PCIe 4.0 x4 SlimSAS headers. These allow for more NVMe-based storage options to be used, which is commonplace in the data center.

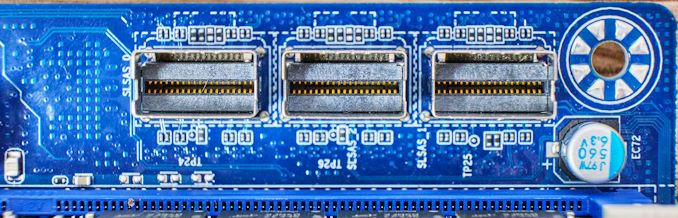

Other storage options on the GIGABYTE MZ72-HB0 include three SlimSAS ports, with each port allowing for four SATA ports (twelve in total), or users can use each port for additional NVMe-capable PCIe 4.0 x4 storage. For users looking to install single drives, GIGABYTE includes four 7-pin SATA ports.

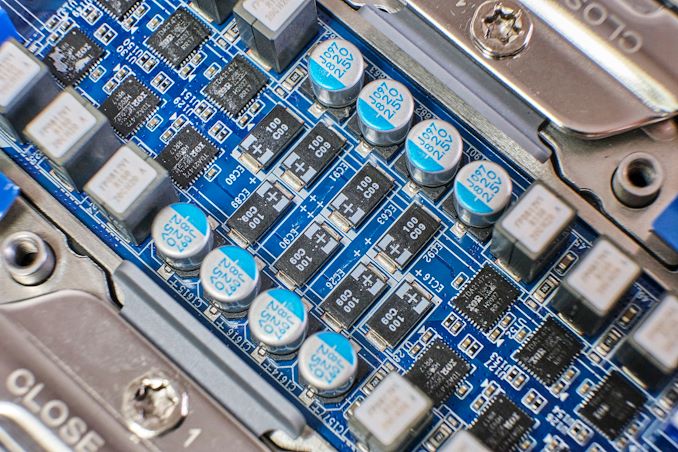

The power delivery on the MZ72-HB0 is quite intricate, which is often the case on monstrous dual-socket motherboards. Starting with the CPU VCore, each socket has its own independent power delivery with six Infineon TDA21472 power stages driven by an International Rectifier IR35201 PWM controller. This means that each SP3 socket has a 6+0 phase power delivery.

Looking at the memory section of the power delivery, GIGABYTE is using six Infineon TDA21462 60 A power stages in two groups of three for each set of eight memory slots. These are controlled by an International Rectifier IR3584 PWM controller, with two such sets operating independently. For the VSoC section, GIGABYTE is using two Infineon TDA21462 60 A power stages controlled by a single International Rectifier IR35204 PWM controller. Again, there are two sets of these, with one for each installed processor.
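To keep the three rails straight, the per-socket layout described above can be tallied with a short Python sketch. The part numbers, stage counts, and the 60 A rating come from the text; no current rating is given there for the TDA21472, so it is left unset rather than guessed. The structure and function names are illustrative, and the summed ratings are nameplate totals, not a statement of sustained deliverable current.

```python
# Per-socket power delivery as described in the text above.
# amps_each is None where the text does not state a rating.
RAILS = {
    "VCore": {"stages": 6, "part": "TDA21472", "amps_each": None, "controller": "IR35201"},
    "Memory": {"stages": 6, "part": "TDA21462", "amps_each": 60, "controller": "IR3584"},
    "VSoC": {"stages": 2, "part": "TDA21462", "amps_each": 60, "controller": "IR35204"},
}
SOCKETS = 2


def stages_per_socket(rails=RAILS):
    """Count every power stage serving one socket across all rails."""
    return sum(rail["stages"] for rail in rails.values())


def nameplate_current(rail_name, rails=RAILS):
    """Sum of stage ratings for one rail in amps, or None if the rating is unknown."""
    rail = rails[rail_name]
    if rail["amps_each"] is None:
        return None
    return rail["stages"] * rail["amps_each"]


if __name__ == "__main__":
    print(stages_per_socket())              # 14
    print(stages_per_socket() * SOCKETS)    # 28 across the board
    print(nameplate_current("Memory"))      # 360
    print(nameplate_current("VSoC"))        # 120
```

That gives 14 power stages per socket and 28 across the board, each socket with its own three PWM controllers.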

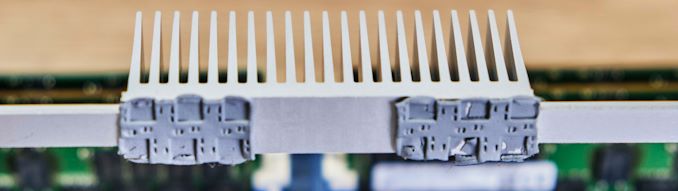

Keeping the CPU section of the power delivery cool is an aluminum heatsink with a long strip, which is held in place with two spring-loaded black plastic clasps. The bulk of the cooling comes from a large finned section that sits directly over the power stages and relies on airflow to dissipate heat effectively. Anyone using this board has to make sure there is plenty of airflow, as there would be in a server in a datacenter. The memory and VSoC areas of the power delivery don't include heatsinks and instead rely heavily on airflow.

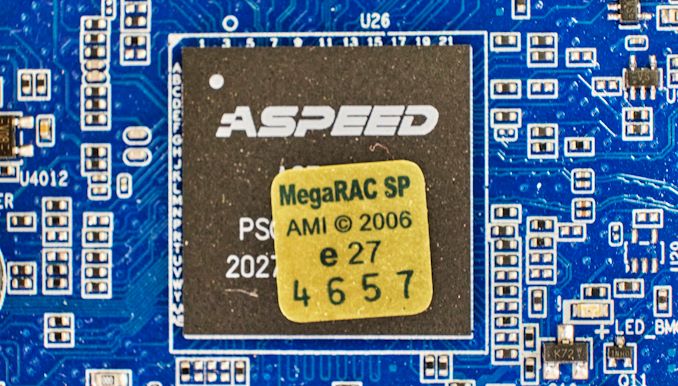

GIGABYTE's baseboard management controller (BMC) of choice is the ASPEED AST2600. This provides board management functionality over both Java and HTML5 interfaces, as well as LDAP/AD/RADIUS support, and access to GIGABYTE's AMI MegaRAC SP-X browser-based interface.

On the rear panel is a basic yet functional set of inputs and outputs. In terms of USB, there are just two USB 3.0 Type-A ports, with front panel headers offering more expansion: one USB 3.1 G1 Type-A header offering two Type-A ports, and one USB 2.0 header, also offering two additional Type-A ports. Networking is premium, with one Realtek RTS5411E Gigabit management port offering access to the BMC, and two 10 GbE BASE-T ports powered by a Broadcom BCM57416 Ethernet controller. Also on the rear panel are a D-Sub, which allows users to connect to the board's ASPEED BMC controller, a serial COM port, and an ID button with a built-in LED.

28 Comments


tygrus - Monday, August 2, 2021 - link

There are not many apps/tasks that make good use of more than the 64c/128t. Some of those tasks are better suited for GPU, accelerators or a cluster of networked systems. Some tasks just love having the TB's RAM while others will be limited by data IO (storage drives, network). YMMV. Have fun with testing it but it will be interesting to find people with real use cases that can afford this.

questionlp - Monday, August 2, 2021 - link

Being capable of handling more than 64c/128t across two sockets doesn't mean that everyone will drop more than that on this board. You can install two higher clock 32c/64t processors into each socket, have shed load of RAM and I/O for in-memory databases, software-defined (insert service here) or virtualization (or a combination of those).

Install lower core count, even higher clock speed CPUs and you have yourself an immensely capable platform for per-core licensed enterprise database solutions.

niva - Wednesday, August 4, 2021 - link

You can but why would you when you can get a system where you can slot a single CPU with 64C?

This is a board for the cases where 64C is clearly not enough, and really catering towards server use, for cases where less cores but more power per core are needed, there are simply better options.

questionlp - Wednesday, August 4, 2021 - link

The fastest 64c/128t Epyc CPU right now has a base clock of 2.45 GHz (7763) while you can get 2.8 GHz with a 32c/64t 7543. Slap two of those on this board, you'll get a lot more CPU power than a single 64c/128t and double the number of memory channels.

Another consideration is licensing. IIRC, VMware per-CPU licensing maxes out at 32c per socket. To cover a single 64c Epyc, you would end up with the same license count as two 32c Epyc configuration. Some customers were grandfathered in back in 2020; but, that's no longer the case for new licenses. Again, you can scale better with 2 CPU configuration than 1 CPU.

It all depends on the targeted workload. What may work for enterprise virtualization won't work for VPC providers, etc.

linuxgeex - Monday, August 2, 2021 - link

The primary use case is in-memory databases and/or high-volume low-latency transaction services. The secondary use case is rack unit aggregation, which is usually accomplished with virtualisation. ie you can fit 3x as many 80-thread high performance VPS into this as you can into any comparably priced Intel 2U rack slot, so this has huge value in a datacenter for anyone selling such a VPS in volume.

logoffon - Monday, August 2, 2021 - link

Was there a revision 2.0 of this board?

Googer - Tuesday, August 3, 2021 - link

There is a revision 3.0 of this board.

MirrorMax - Friday, August 27, 2021 - link

No and more importantly this is exactly the same board as rev1 but with a Rome/Milan bios, so you can bios update rev1 boards to rev3 basically, odd that the review doesn't touch on this

BikeDude - Monday, August 2, 2021 - link

Task Manager screenshot reminded me of Norton Speed Disk; We now have more CPUs than we had disk clusters back in the day. :P

WaltC - Monday, August 2, 2021 - link

In one place you say it took 2.5 minutes to post, in another place you say it took 2.5 minutes to cold boot into Win10 pro. I noticed you used a Sata 3 connector for your boot drive, apparently, and I was reminded of booting Win7 from a Sata3 7200rpm platter drive taking me 90-120 seconds to cold boot--in Win7 the more crowded your system with 3rd-party apps and games the longer it took to boot...;) (That's not the case with Win10/11, I'm glad to say, as with TB's of installed programs I still cold boot in ~12 secs from an NVMe OS partition.) Basically, servers are not expected to do much in the way of cold booting as up time is what most customers are interested in...but I doubt the S3 drive had much to do with the 2.5 minute cold-boot time, though. An NVMe drive might have shaved a few seconds off the cold-boot, but that's about it, imo.

Interesting read! Enjoyed it. Yes, the server market is far and away different from the consumer markets.