The Crucial m4 (Micron C400) SSD Review

by Anand Lal Shimpi on March 31, 2011 3:16 AM EST

Last week I was in Orlando attending CTIA. While enjoying the Florida weather, two SSDs arrived at my office back in NC: Intel's SSD 320, which we just reviewed three days ago, and Crucial's m4. Many of you noticed that I had snuck in m4 results in our 320 review but I saved any analysis/conclusions about the drive for its own review.

There are more drives that I've been testing that are missing their own full reviews. Corsair's Performance Series 3 has been in the lab for weeks now, as has Samsung's SSD 470. I'll be talking about both of those in greater detail in an upcoming article as well.

And for those of you asking about my thoughts on the recent OCZ-related stuff that has been making the rounds, expect to see all of that addressed in our review of the final Vertex 3. OCZ missed its original March release timeframe for the Vertex 3 in order to fix some last-minute bugs with a new firmware revision, so we should be seeing drives hit the market shortly.

There's a lot happening in the SSD space right now. All of the high end manufacturers have put forward their next-generation controllers. With all of the cards on the table it's clear that SandForce is the performance winner once again this round. So far nothing has been able to beat the SF-2200, although some came close—particularly if you're still using a 3Gbps SATA controller.

All isn't lost for competing drives, however. While SandForce may be the unequivocal performance leader, compatibility and reliability are both unknowns. SandForce is still a very small company with limited resources. Although validation has apparently improved tremendously since the SF-1200 last year, it takes a while to develop a proven track record. As a result, some users and corporations feel more comfortable buying from non-SF based competitors, although the SF-2200 may do a lot to change some minds once it starts shipping.

The balance of price, performance and reliability is what keeps this market interesting. Do you potentially sacrifice reliability for performance? Or give up some performance for reliability? Or give up one for price? It's even tougher to decide when you take into account that all of the players involved have had major firmware bugs. Even though Intel appears to have the lowest return rate out of all of the drives, it's not excluded from the reliability/compatibility debate.

Crucial's m4, Micron's C400

Micron and Intel have a joint venture, IMFT, that produces NAND Flash for both companies as well as their customers. Micron gets 51% of IMFT production for its own use and resale, while Intel gets the remaining 49%.

Micron is mostly a chip and OEM brand; Crucial is its consumer memory/storage arm. Both divisions shipped an SSD called the C300 last year. It was the first 6Gbps SATA SSD we tested and while it posted some great numbers, the drive got off to a very bumpy start.

| Crucial's m4 Lineup | CT064M4SSD2 | CT128M4SSD2 | CT256M4SSD2 | CT512M4SSD2 |
| --- | --- | --- | --- | --- |
| User Capacity | 59.6GiB | 119.2GiB | 238.4GiB | 476.8GiB |
| Random Read Performance | 40K IOPS | 40K IOPS | 40K IOPS | 40K IOPS |
| Random Write Performance | 20K IOPS | 35K IOPS | 50K IOPS | 50K IOPS |
| Sequential Read Performance | Up to 415MB/s | Up to 415MB/s | Up to 415MB/s | Up to 415MB/s |
| Sequential Write Performance | Up to 95MB/s | Up to 175MB/s | Up to 260MB/s | Up to 260MB/s |

A few firmware revisions later and the C300 was finally looking good from a reliability perspective. Although recently I have heard reports of performance issues with the latest 006 firmware, the drive has been working well for me thus far. It just goes to show you that company size alone isn't an indication of compatibility and reliability.

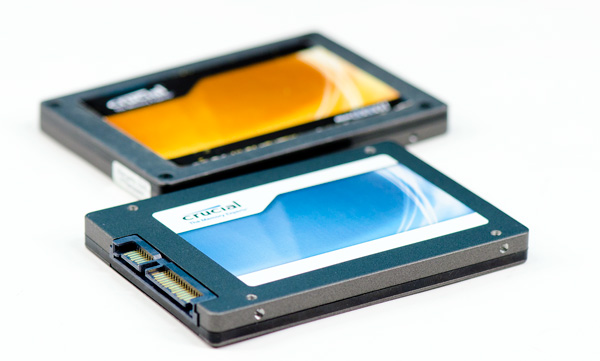

Crucial RealSSD C300 (back), Crucial m4 (front)

This time around Crucial wanted to differentiate its product from what was sold to OEMs. Drives sold by Micron will be branded C400 while consumer drives are called the m4. The two are the same, just with different names.

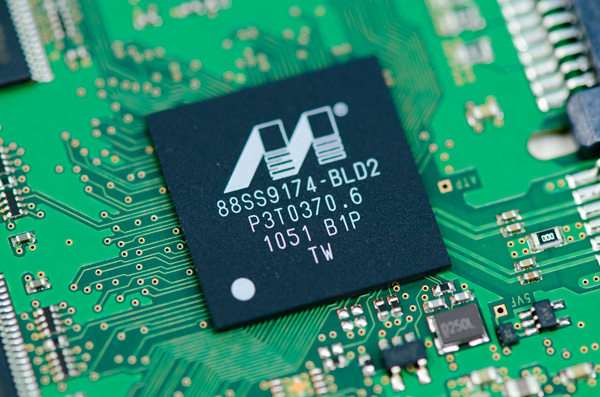

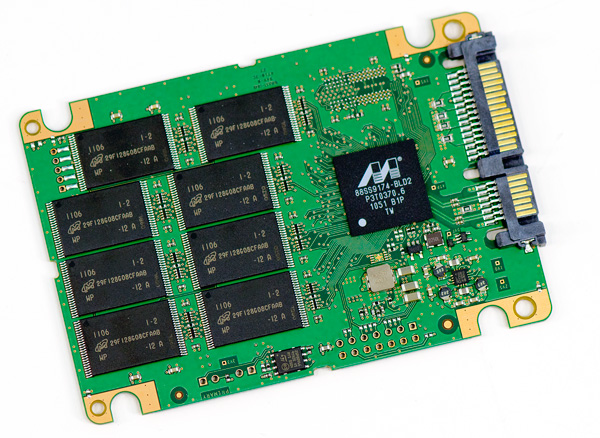

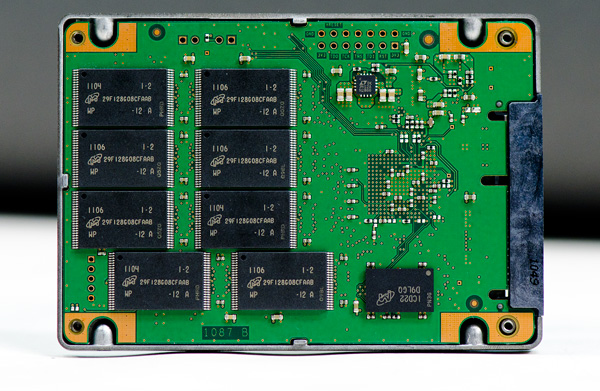

The Marvell 88SS9174-BLD2 in Crucial's m4

Under the hood, er, chassis we have virtually the same controller as the C300. The m4 uses an updated revision of the Marvell 9174 (BLD2 vs. BKK2). Crucial wouldn't go into details as to what was changed, other than to say that there were no major architectural differences and it's just an evolution of the same controller used in the C300. When we get to the performance you'll see that Crucial's explanation carries weight. Performance isn't dramatically different from the C300; instead it looks like Crucial played around a bit with firmware. I do wonder if the new revision of the controller is at all less problematic than what was used in the C300. Granted, fixing any old problems isn't a guarantee that new ones won't crop up either.

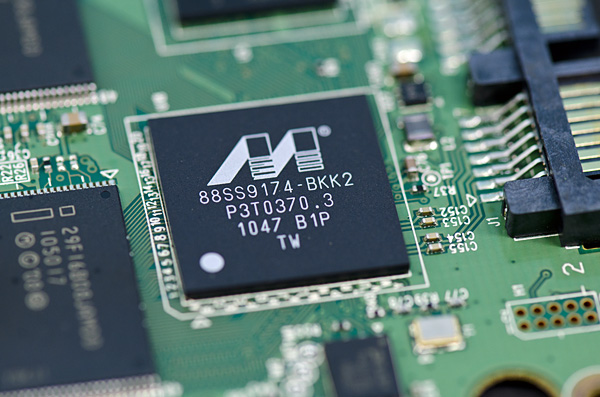

The 88SS9174-BKK2 is in the Intel SSD 510

The m4 is still an 8-channel design. Crucial believes it's important to hit capacities in multiples of 8 (64, 128, 256, 512GB). Crucial also told me that the m4's peak performance isn't limited by the number of channels branching off of the controller, so the decision was easy. I am curious to understand why Intel seems to be the only manufacturer that has settled on a 10-channel configuration for its controller while everyone else picked 8 channels.

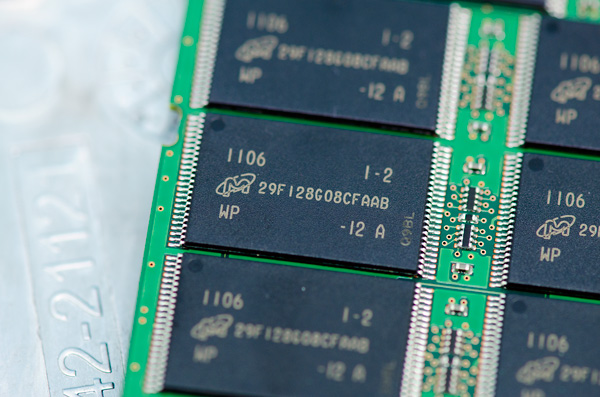

Crucial sent along a 256GB drive populated with sixteen 16GB 25nm Micron NAND devices. Micron rates its 25nm NAND at 3000 program/erase cycles. By comparison Intel's NAND, coming out of the same fab, is apparently rated at 5000 program/erase cycles. I asked Micron why there's a discrepancy and was told that the silicon's quality and reliability is fundamentally the same. It sounds like the only difference is in testing and validation methodology. In either case I've heard that most 25nm NAND can well exceed its rated program/erase cycles so it's a non-issue.

Furthermore, as we've demonstrated in the past, under a normal desktop usage model even NAND rated for only 3000 program/erase cycles will last for a very long time, provided the controller has good wear leveling.

Let's quickly do the math again. If you have a 100GB drive and you write 7GB per day you'll program every MLC NAND cell in the drive in just over 14 days—that's one cycle out of three thousand. Outside of SandForce controllers, most SSD controllers will have a write amplification factor greater than 1 in any workload. If we assume a constant write amplification of 20x (and perfect wear leveling) we're still talking about a useful NAND lifespan of almost 6 years. In practice, write amplification for desktop workloads is significantly lower than that.
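For anyone who wants to plug in their own numbers, here is a minimal Python sketch of the arithmetic above. The function name and parameters are illustrative only (they don't come from any tool), and the model assumes perfect wear leveling, a constant write amplification factor, and writes spread across the drive's full capacity.

```python
def nand_lifespan_years(capacity_gb, host_writes_gb_per_day,
                        rated_pe_cycles, write_amplification=1.0):
    """Rough years until every NAND cell reaches its rated P/E cycles.

    Hypothetical helper: assumes perfect wear leveling and a constant
    write amplification factor, the same simplifications as the text.
    """
    # Data actually written to NAND each day, after write amplification.
    nand_writes_gb_per_day = host_writes_gb_per_day * write_amplification
    # Days needed to program every cell once (one full P/E cycle).
    days_per_cycle = capacity_gb / nand_writes_gb_per_day
    return days_per_cycle * rated_pe_cycles / 365.0


if __name__ == "__main__":
    # The example from the text: 100GB drive, 7GB/day, 3000 rated cycles.
    print(f"Days per cycle at 1x: {100 / 7:.1f}")  # just over 14 days
    years = nand_lifespan_years(100, 7, 3000, write_amplification=20)
    print(f"Lifespan at 20x: {years:.1f} years")   # almost 6 years
```

Dropping the write amplification argument to something closer to a real desktop workload shows how pessimistic the 20x figure is.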

Remember that the JEDEC spec states that once you've used up all of your rated program/erase cycles, the NAND has to keep your data safe for a year. So even in the unlikely event that you burn through all 3000 p/e cycles, and even assuming for a moment that you have some uncharacteristically bad NAND that doesn't last for even one cycle beyond its rating, you should have a full year's worth of data retention left on the drive. By 2013 I'd conservatively estimate NAND to be priced at ~$0.92 per GB and in another three years beyond that you can expect high-speed storage to be even cheaper. In short, combined with good ECC and an intelligent controller, I wouldn't expect NAND longevity to be a concern at 25nm.

The m4 is currently scheduled for public availability on April 26 (coincidentally the same day I founded AnandTech fourteen years ago); pricing is still TBD. Back at CES Micron gave me a rough indication of pricing; however, I'm not sure if those prices are higher or lower than what the m4 will ship at. Owning part of a NAND fab obviously gives Micron pricing flexibility; however, it also needs to maintain very high profit margins in order to keep said fab up and running (and investors happy).

The Test

| CPU: | Intel Core i7 965 running at 3.2GHz (Turbo & EIST Disabled); Intel Core i7 2600K running at 3.4GHz (Turbo & EIST Disabled), for AT SB 2011, AS SSD & ATTO |
| Motherboard: | Intel DX58SO (Intel X58); Intel H67 Motherboard |
| Chipset: | Intel X58 + Marvell SATA 6Gbps PCIe; Intel H67 |
| Chipset Drivers: | Intel 9.1.1.1015 + Intel IMSM 8.9; Intel 9.1.1.1015 + Intel RST 10.2 |
| Memory: | Qimonda DDR3-1333 4 x 1GB (7-7-7-20) |
| Video Card: | eVGA GeForce GTX 285 |
| Video Drivers: | NVIDIA ForceWare 190.38 64-bit |
| Desktop Resolution: | 1920 x 1200 |
| OS: | Windows 7 x64 |

103 Comments

killless - Friday, April 1, 2011

I frequently use VMWare at work.

One of the tasks that takes forever is deleting VMWare snapshots. An even worse one is shrinking the VMWare disk footprint. In both of these cases 95% of the time is spent on disk read/write. They take from minutes to hours. They mix random and sequential access.

More than that, they are fairly repeatable...

Using a Kingston SSDNow instead of a hard disk made a huge impact - it is about 3 times faster. However it still sometimes takes 2 hours to shrink a disk.

I think that would be a good repeatable set of tests.

CrustyJuggler - Saturday, April 2, 2011

I just bought a spanking 15" MacBook Pro which i know supports Intel's new Sandy Bridge and the SATA 6Gbps interface. I decided to buy with just the stock HDD which i will move to the optical bay after purchasing one of these new spangly SSDs.

After reading just about every review i'm still none the wiser as to which drive would benefit me the most so I think in conclusion i will wait for the leading drives to be released. The OCZ is always favourable in the benchmarks but the horror stories of reliability coupled with dreadful customer support make me nervous.

The intel 510 seems to have great support and reliability but poor performance.

I've heard little to nothing about Corsair's Force GT which should be somewhere in the realms of Vertex 3 performance, right?

I'm happy to wait at least another 8 weeks i think and just hope more reviews and testing shows up in that time.. I was using a Force F60 on the laptop i replaced and I had no problems whatsoever so i hope Corsair can have a timely release with the GT.

I had my eye on Crucial's M4 for a while now but this latest crop of reviews has left me pondering even more. Trim is not supported on Mac as of yet so Anand's take on the M4 makes alarm bells ring regarding trim.

First time poster, long time reader, but not very tech savvy, so what to do, folks?

Shark321 - Sunday, April 3, 2011

Anand is #1 at SSD reviews, but there is still a long way to go to achieve perfection.

On many workstations in my company we have a daily SSD usage of at least 20 GB, and this is not something really exceptional.

One hibernation in the evening writes 8 GB (the amount of RAM) to the SSDs. And no, Windows does not write only the used RAM, but the whole 8 GB. One of the features of Windows 8 will be that Windows does not write the whole RAM content when hibernating anymore. Windows 7 disables hibernation by default on systems with >4GB of RAM for that very reason!

Several of the workstations use RAM disks, which write 2 or 3 GB images on shutdown/hibernate.

Since we use VMWare heavily, 1-2 GB is written constantly all through the day as snapshots. Add some backup snapshots of Visual Studio products to that and you have another 2 GB.

Writing 20 GB a day is nothing unusual, and this happens on at least 30 workstations. Some may even go to 30-40 GB.

Of all the SSDs we have used over the years, about 10% failed within 2 years, so daily backups are really necessary. When doing a backup of an SSD to an HDD with True Image, the performance of the individual SSDs differs a lot.

So future benchmarks for professional users should take into consideration:

- a daily usage of at least 20 GB (better 40 GB)

- benchmarking VMWare creating snapshots and shrinking images

- unpacking of large ISO images

- hibernate performance

- backup performance

Thanks!

789427 - Monday, April 4, 2011

Anand, can't you design a power measurement benchmark whereby typical disc activity is replicated?

e.g. Power consumption while video encoding (the time taken shouldn't vary by more than 10% - you could add in the difference in idle power consumption as well!)

Or... copying 100 different 1 Mb sections at 1 sec intervals of a 20 min media file - Mp3, divx mkv

My point is that sure, SSDs make your system use more power in most benchmarks because the system is asked to do more. Ask the system to do the same thing over the same period and measure power consumption.

cb

gunslinger690 - Tuesday, April 5, 2011

Ok all I really care about on the machine I'm considering using this on is gaming and web surfing. That's all I care about. Is this a good drive for this sort of app?

VJ - Thursday, April 7, 2011

"I've already established that 3000 cycles is more than enough for a desktop workload with a reasonably smart controller."

You haven't "established" much unless you take the free space into consideration. Many desktop users will not have 100GB free space on their drives and with 20GB free space and a write amplification of 10x, the lifetime of a drive may drop to a couple of years.

A 3D plot of the expected lifetime as a function of available free space (10GB-100GB) and write amplification (1x-20x) would make your argument more sound and complete.

Hrel - Saturday, April 16, 2011

I'd really like to know if using a SSD actually has any real world impact. I mean, benchmarks are great. But come on, it's not gonna make me browse the internet any faster or get me higher FPS in Mass Effect; so what's the point? Really, the question comes down to why should I go out and buy two 2TB hard drives, RAID them then ALSO buy an SSD to install the OS on? So windows boots in 7 seconds instead of 27? Cause quite frankly if it's gonna cost me an extra 120-240 dollars I'll just wait 20 seconds. I almost never boot my machine from scratch anyway.

Ideally I'd want to use a machine with an SSD in it before buying one. But since that doesn't seem likely I'd love it if you guys could just point a camera at two machines right next to each other, boot them up, wait for both to finish loading the OS. Then use them. Go online, read some anandtech, boot up Word and type something, open photoshop and edit a picture then start up a game and play for a couple minutes. Cause I'm willing to bet, with the exception of load times, there will be no difference at all. And like I already said, if my game takes 20 seconds to load instead of 5, I can live with that. It's not worth anywhere near 100+ dollars.

Just to be clear one should have an SSD in it near to the 100 dollar mark, since that's as expensive of an SSD most people can even afford to consider. And the other should have two modern 7200rpm mechanical disks from WD in a striped RAID.

Hrel - Saturday, April 16, 2011

both on 6GBPS on P67.

Hrel - Saturday, April 16, 2011

forgot to mention, the SSD machine should also have the same 2 mechanical disks in striped RAID and all the games and programs should be installed on those. Cause for 100 dollars the only thing you can put on an SSD is the OS and some small programs like the browser and various other things every PC needs to be usable; maybe photoshop too but the photos couldn't be on the SSD.

Hrel - Saturday, April 16, 2011

the games can't be installed on the SSD is my point.