Micron Announces RealSSD P300, SLC SSD for Enterprise

by Anand Lal Shimpi on August 12, 2010 6:00 AM EST - Posted in

- SSDs

- Storage

- Micron

- RealSSD P300

Buying an SSD for your notebook or desktop is nice. You get more consistent performance. Applications launch extremely fast. And if you choose the right SSD, you really curb the painful slowdown of your PC over time. I’ve argued that an SSD is the single best upgrade you can do for your computer, and I still believe that to be the case. However, at the end of the day, it’s a luxury item. It’s like saying that buying a Ferrari will help you accelerate quicker. That may be true, but it’s not necessary.

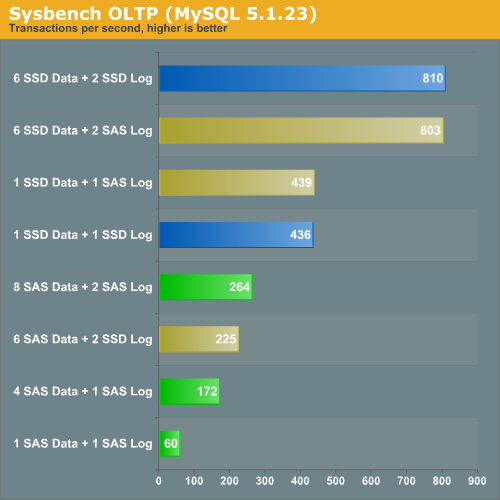

In the enterprise world, however, SSDs are even more important. Our own Johan de Gelas had his first experience with an SSD in one of his enterprise workloads a year ago. His OLTP test looks at the performance difference between 15K RPM SAS drives and SSDs in a database server. Johan experimented with using SAS HDDs vs. SSDs for both the data and log drives in the server.

Using a single SSD (Intel’s X25-E) for a data drive and a single SSD for a log drive is faster than running eight 15,000 RPM SAS drives in RAID 10 plus another two in RAID 0 as a logging drive.

Not only is performance higher, but total power consumption is much lower. Under full load eight SAS drives use 153W, compared to 2 - 4W for a single Intel X25-E. There are also reliability benefits. While mechanical storage requires redundancy in case of a failed disk, SSDs don’t. As long as you’ve properly matched your controller, NAND and ultimately your capacity to your workload, an SSD should fail predictably.
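A quick back-of-envelope check of the power numbers above. This sketch assumes the 153W figure covers only the eight RAID 10 data drives (the two RAID 0 log drives are left out, which flatters the HDD setup) and takes the top of the 2 - 4W range for each SSD; the constant and function names are illustrative.

```python
# Back-of-envelope power comparison using the figures quoted in the article.
# Assumption: the 153W figure covers only the eight-drive RAID 10 data array,
# so the two RAID 0 log drives are not counted (this favors the HDD setup).
SAS_ARRAY_WATTS = 153.0
SAS_DRIVE_COUNT = 8
SSD_WATTS_MAX = 4.0  # upper end of the 2 - 4W range for one Intel X25-E


def watts_per_sas_drive() -> float:
    """Average full-load draw of a single drive in the SAS array."""
    return SAS_ARRAY_WATTS / SAS_DRIVE_COUNT


def power_ratio(ssd_count: int, ssd_watts: float = SSD_WATTS_MAX) -> float:
    """How many times more power the SAS array draws than `ssd_count` SSDs."""
    return SAS_ARRAY_WATTS / (ssd_count * ssd_watts)


if __name__ == "__main__":
    print(f"{watts_per_sas_drive():.1f} W per SAS drive")
    # One SSD for data plus one for the log, both at their worst case:
    print(f"SAS array draws {power_ratio(2):.1f}x the power of two SSDs")
```

Even with both SSDs at their worst case, the data array alone draws roughly 19 times as much power.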

The overwhelming number of poorly designed SSDs on the market today is one reason most enterprise customers are unwilling to consider SSDs. The high margins available in the enterprise market are the main reason SSD makers are so eager to conquer it.

Micron’s Attempt

Just six months ago we were first introduced to Crucial’s RealSSD C300. Not only was it the first SSD we tested with a native 6Gbps SATA interface, but it was also one of the first to truly outperform Intel across the board. A few missteps later, we found the C300 to be a good contender, but our second choice behind SandForce-based drives like the Corsair Force or OCZ Vertex 2.

Earlier this week Micron, Crucial’s parent company, called me up to talk about a new SSD. This drive would only ship under the Micron name as it’s aimed squarely at the enterprise market. It’s the Micron RealSSD P300.

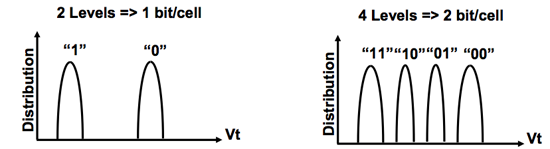

The biggest difference between the P300 and the C300 is that the former uses SLC (Single Level Cell) NAND Flash instead of MLC NAND. As you may remember from my earlier SSD articles, SLC and MLC NAND are nearly identical - they just store different amounts of data per NAND cell (1 vs. 2 bits).

SLC (left) vs. MLC (right) NAND

The benefits of SLC are higher performance and a longer lifespan. The downside is cost. SLC NAND is at least 2x the price of MLC NAND. You take up the same die area as MLC but you get half the storage. It’s also produced in lower quantities so you get at least twice the cost.
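The density argument can be sketched numerically. This is a simplified model, not Micron’s: it assumes a die of a given area holds the same number of cells whether run as SLC or MLC and costs the same to make, and the 16-billion-cell die is hypothetical.

```python
# Simplified model of why SLC costs at least 2x per GB: a cell holding n bits
# must distinguish 2**n charge levels, and a die of a given area holds roughly
# the same number of cells whether it is run as SLC or MLC.
def charge_levels(bits_per_cell: int) -> int:
    """Number of distinct charge states a cell must resolve."""
    return 2 ** bits_per_cell


def die_capacity_gbit(cells_billion: float, bits_per_cell: int) -> float:
    """Capacity of a die with a fixed cell count (i.e. the same die area)."""
    return cells_billion * bits_per_cell


def cost_per_gb_vs_mlc(bits_per_cell: int, mlc_bits: int = 2) -> float:
    """Relative $/GB versus MLC, assuming both dies cost the same to make.
    Ignores SLC's lower production volume, which only pushes its cost higher."""
    return mlc_bits / bits_per_cell


if __name__ == "__main__":
    CELLS = 16.0  # hypothetical die with 16 billion cells
    for name, bits in (("SLC", 1), ("MLC", 2)):
        print(name, charge_levels(bits), "levels,",
              die_capacity_gbit(CELLS, bits), "Gbit,",
              cost_per_gb_vs_mlc(bits), "x MLC $/GB")
```

Fewer charge levels per cell is also why SLC can be read and programmed faster and tolerates more wear; the sketch only captures the capacity and cost side.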

| | SLC NAND Flash | MLC NAND Flash |
|---|---|---|
| Random Read | 25 µs | 50 µs |
| Erase | 2ms per block | 2ms per block |
| Programming | 250 µs | 900 µs |
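To put the table’s latencies in throughput terms, the sketch below assumes a hypothetical 4KB page (the article gives no page size) and a single plane doing one operation type back to back, ignoring bus transfer time.

```python
# Per-operation latencies from the SLC/MLC table, in microseconds.
LATENCY_US = {
    "SLC": {"read": 25, "program": 250},
    "MLC": {"read": 50, "program": 900},
}

# Hypothetical page size: the article does not state one.
PAGE_BYTES = 4096


def single_plane_mb_per_s(cell: str, op: str, page_bytes: int = PAGE_BYTES) -> float:
    """Throughput (MB/s, 1 MB = 10**6 bytes) if one plane does nothing but
    `op` back to back. Ignores bus transfer time and multi-die interleaving."""
    seconds_per_page = LATENCY_US[cell][op] / 1_000_000
    return page_bytes / seconds_per_page / 1_000_000


if __name__ == "__main__":
    for cell in LATENCY_US:
        for op in ("read", "program"):
            print(f"{cell} {op}: {single_plane_mb_per_s(cell, op):.1f} MB/s")
```

Under those assumptions SLC programs at about 16.4 MB/s per plane versus roughly 4.6 MB/s for MLC, a 3.6x gap; real drives reach far higher numbers by spreading work across many dies and channels in parallel.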

Micron wouldn’t share pricing but it expects drives to be priced under $10/GB. That’s actually cheaper than Intel’s X25-E, despite being 2 - 3x more than what we pay for consumer MLC drives. Even if we’re talking $9/GB that’s a bargain for enterprise customers if you can replace a whole stack of 15K RPM HDDs with just one or two of these.
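Micron’s ceiling translates into drive-level prices as follows. This only uses the article’s own numbers, the $10/GB upper bound and the 2 - 3x premium over consumer MLC; actual pricing was not disclosed, so treat the results as bounds, not quotes.

```python
PRICE_CEILING_PER_GB = 10.0  # Micron's stated upper bound, "under $10/GB"


def price_ceiling(capacity_gb: int, per_gb: float = PRICE_CEILING_PER_GB) -> float:
    """Upper bound on drive price implied by a $/GB ceiling."""
    return capacity_gb * per_gb


def implied_mlc_per_gb(premium: float, slc_per_gb: float = PRICE_CEILING_PER_GB) -> float:
    """Consumer MLC $/GB implied if the SLC drive costs `premium` times as much."""
    return slc_per_gb / premium


if __name__ == "__main__":
    for cap in (50, 100, 200):
        print(f"{cap}GB: under ${price_ceiling(cap):,.0f}")
    # "2 - 3x more than what we pay for consumer MLC drives"
    print(f"MLC: ${implied_mlc_per_gb(3):.2f} - ${implied_mlc_per_gb(2):.2f}/GB")
```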

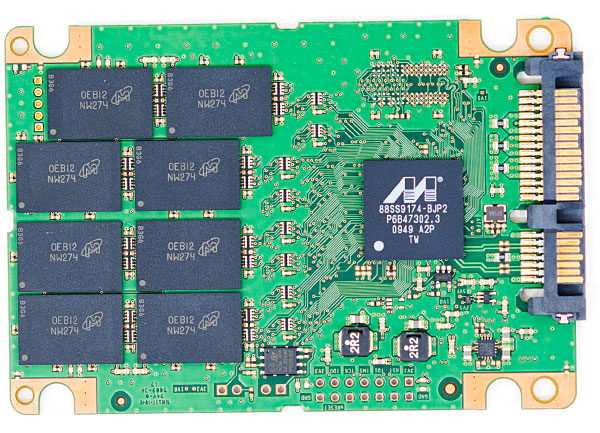

The controller in the P300 is nearly identical to what was in the C300. There are two main differences. First, the P300’s controller supports ECC/CRC from the controller down into the NAND; Micron was unable to go into any more specifics on what was protected via ECC vs. CRC. Second, in order to deal with the faster write speed of SLC NAND, the P300’s internal buffers and pathways operate at a quicker rate. Think of the P300’s controller as a slightly evolved version of what we have in the C300, with ECC/CRC and SLC NAND support.

The C300

The rest of the controller specs are identical. We still have the massive 256MB external DRAM and the same on-die cache size. The Marvell controller still supports 6Gbps SATA, although the P300 doesn’t have SAS support.

| Micron P300 Specifications | 50GB | 100GB | 200GB |
|---|---|---|---|
| Formatted Capacity | 46.5GB | 93.1GB | 186.3GB |
| NAND Capacity | 64GB SLC | 128GB SLC | 256GB SLC |
| Endurance (Total Bytes Written) | 1 Petabyte | 1.5 Petabytes | 3.5 Petabytes |
| MTBF | 2 million device hours | 2 million device hours | 2 million device hours |
| Power Consumption | < 3.8W | < 3.8W | < 3.8W |
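The endurance ratings are easier to compare as daily write budgets. The 5-year service life below is a hypothetical planning horizon, not a figure from Micron, and petabytes are taken as decimal (10^15 bytes).

```python
# Rated endurance from the spec table, in petabytes (assumed decimal, 10**15 bytes).
ENDURANCE_PB = {50: 1.0, 100: 1.5, 200: 3.5}


def gb_written_per_day(endurance_pb: float, years: float) -> float:
    """Average daily write volume (decimal GB) that exhausts the rating in `years`."""
    return endurance_pb * 1e15 / (years * 365) / 1e9


def drive_writes_per_day(capacity_gb: int, endurance_pb: float, years: float) -> float:
    """How many times per day the full user capacity could be rewritten."""
    return gb_written_per_day(endurance_pb, years) / capacity_gb


if __name__ == "__main__":
    YEARS = 5.0  # hypothetical service life, not stated in the article
    for cap, pb in ENDURANCE_PB.items():
        print(f"{cap}GB: {gb_written_per_day(pb, YEARS):,.0f} GB/day, "
              f"{drive_writes_per_day(cap, pb, YEARS):.1f} drive writes/day")
```

Over that hypothetical five years, the 50GB drive could absorb roughly 548GB of writes a day, or about 11 full-drive writes daily.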

The P300 will be available in three capacities: 50GB, 100GB and 200GB. The drives ship with 64GB, 128GB and 256GB of SLC NAND on them by default. Roughly 27% of the NAND capacity is designated as spare area for wear leveling and bad block replacement. This is in line with other enterprise drives like the original 50/100/200GB SandForce drives and the Intel X25-E. Micron’s P300 datasheet seems to imply that the drive will dynamically use unpartitioned LBAs as spare area. In other words, if you need more capacity or have a heavier workload, you can change the ratio of user area to spare area accordingly.
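The roughly 27% figure falls out of the table if the NAND is counted in binary gigabytes (2^30 bytes) and the advertised capacity in decimal ones; that unit split is an assumption here, though with it the advertised capacities also land within 0.1 of the table’s formatted figures. The unpartitioned-LBA case at the end rests on the datasheet hint described above, not on confirmed behavior.

```python
GIB = 2 ** 30  # bytes in a binary gigabyte


def formatted_gib(advertised_gb: float) -> float:
    """Advertised decimal GB as reported by an OS that counts in binary GB."""
    return advertised_gb * 1e9 / GIB


def spare_fraction(user_gb: float, nand_gib: float) -> float:
    """Share of the raw NAND not exposed to the user.
    Assumes NAND is counted in binary GB and user capacity in decimal GB."""
    return 1 - (user_gb * 1e9) / (nand_gib * GIB)


if __name__ == "__main__":
    for user, nand in ((50, 64), (100, 128), (200, 256)):
        print(f"{user}GB: ~{formatted_gib(user):.1f} formatted, "
              f"{spare_fraction(user, nand):.1%} spare")
    # If unpartitioned LBAs really count as spare, partitioning only
    # 40GB of the 50GB drive would raise the spare share:
    print(f"{spare_fraction(40, 64):.1%} spare")
```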

Micron shared some P300 performance data with me:

| Micron P300 Performance Specifications | Peak | Sustained |
|---|---|---|
| 4KB Random Read | Up to 60K IOPS | Up to 44K IOPS |
| 4KB Random Write | Up to 45.2K IOPS | Up to 16K IOPS |
| 128KB Sequential Read | Up to 360MB/s | Up to 360MB/s |
| 128KB Sequential Write | Up to 275MB/s | Up to 255MB/s |
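Vendors quote random performance in IOPS and sequential performance in MB/s; converting between the two only needs the transfer size. This sketch treats 4KB/128KB as binary sizes and MB as 10^6 bytes, a common but not universal convention.

```python
KIB = 1024  # assumption: "4KB" and "128KB" transfer sizes are binary


def iops_to_mb_per_s(iops: float, block_bytes: int) -> float:
    """Throughput in decimal MB/s for a given IOPS rate and transfer size."""
    return iops * block_bytes / 1e6


def mb_per_s_to_iops(mb_per_s: float, block_bytes: int) -> float:
    """Inverse: operations per second needed to sustain a decimal MB/s rate."""
    return mb_per_s * 1e6 / block_bytes


if __name__ == "__main__":
    print(f"4KB random read, peak: {iops_to_mb_per_s(60_000, 4 * KIB):.1f} MB/s")
    print(f"4KB random write, sustained: {iops_to_mb_per_s(16_000, 4 * KIB):.1f} MB/s")
    print(f"128KB sequential read: {mb_per_s_to_iops(360, 128 * KIB):,.0f} IOPS")
```

Under those conventions the 60K IOPS random-read peak is about 246 MB/s of 4KB transfers, and the sustained random-write figure (16K IOPS, about 66 MB/s) is roughly 35% of the 45.2K IOPS peak.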

The data looks good, but I’m working on our Enterprise SSD test suite right now so I’ll hold off any judgment until we get a drive to test. Micron is sampling drives today and expects to begin mass production in October.

49 Comments

bok-bok - Tuesday, August 17, 2010

Depending on the application, the whole network could be virtual and all the data passing around could be amongst the "SSPindles" - or WTF-ever they'll be called - on a DAS array. Then FTT-enduser would just deliver a streamed screen. Whole-network redundancy would still only involve transmitting the delta to the network mirror(s). Obviously this is an oversimplification, but it's not like it's out of reach.

If XenDesktop eats some more power pellets, Google rolls out enough experimental gigabit networks, and SSD prices get more sane (all three are somewhat safe bets), then I don't see why it couldn't be a viable model, especially when you consider the processor core density we're starting to see. There are few computing applications that would be effectively hindered by the latency inherent in light speed (plus reasonable overhead), even across a distant WAN link.

I think we're all probably subconsciously freaking out at the prospect of becoming, ourselves, the bottleneck. Personally, I'm giddy at the notion of technology not evoking ambivalence from a productivity/performance/empowerment point of view. Like, what am I gonna do when I can't use the term "f@$#ing computer!" anymore?

Industry might be freaking out at, "What can we sell the end user when even the end user knows that the only performance bottleneck is the end user."

Are they just gonna virtualize US?

XEndUser?

But in all seriousness, I very much look forward to trading up to a different set of problems.

Marakai - Thursday, August 19, 2010

You made my day at the thought of running myself through P2V. :-D

Well, I'm going to look into scraping some funds together from my various project budgets and see if I can't build a nice little "prototype" enterprise SSD array and see how fast my team can access pr0n^H^H^H^H inner-departmental production data. ;)

bok-bok - Monday, August 30, 2010

I used to just take a filing clerk, image them, and then ghostcast them to all the filing cabinets, which did save on labor.

But then it hit me - I could just P2V the whole office staff and then fire /everyone/ - saved me a bundle! ;-)

Wait a minute. Hmmm...

ericgl21 - Sunday, August 15, 2010

There's a company called Pliant that manufactures 2.5" & 3.5" SSDs for the enterprise, and they claim at least 120K IOPS on the 2.5" drive and 160K IOPS on the 3.5" drive. Here's a link to their website: www.plianttechnology.com

There's another well-respected company that manufactures SSDs for the enterprise called STEC, and they also claim very high performance for their SSDs. Their website is: www.stec-inc.com

I highly recommend that Anand evaluate and test SSD products from both companies, as they might put the Intel X25-E and Micron P300 to shame.

JHoov - Monday, August 30, 2010

I'm not sure about Pliant, but STEC's drives aren't anywhere near $10/GB. And if my 60GB SSD gives me 60K IOPS, then that's $10/1000 IOPS. Again, the STEC might have claimed 120K+ IOPS, but you'd have to add a zero to the price tag, and then double it afterwards.

This is based on three-month-old pricing for STEC SSDs for HDS/HP XP Tier 1 SAN arrays, where I was told that 146GB SSDs run in the $11-14K range, EACH.

These are also Fibre Channel-attached drives made to go into a SAN, vs. SATA-attached drives made for DAS, but if I have a requirement for IOPS in the multi-ten to hundred K range, and I can do it for 1/20th the price, I'm OK with DAS.
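JHoov's cost-per-IOPS comparison can be reproduced directly. The inputs are only the figures quoted in this thread (a 60GB drive at the $10/GB ceiling delivering 60K IOPS, and a 146GB Fibre Channel drive at $11-14K, taking the 120K IOPS claim at face value); none of them are measured results.

```python
def dollars_per_kiops(price_usd: float, iops: float) -> float:
    """Purchase price per 1,000 IOPS."""
    return price_usd / (iops / 1000)


if __name__ == "__main__":
    # 60GB SATA SSD at the $10/GB ceiling, delivering 60K IOPS.
    print(f"SATA SSD: ${dollars_per_kiops(60 * 10.0, 60_000):.2f} per 1K IOPS")
    # 146GB FC drive at the quoted $11-14K, assuming the 120K IOPS claim holds.
    for price in (11_000, 14_000):
        print(f"FC SSD at ${price:,}: ${dollars_per_kiops(price, 120_000):.2f} per 1K IOPS")
```

That works out to $10 versus roughly $92-117 per 1,000 IOPS on these inputs.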

ArntK - Thursday, December 9, 2010

I just want to second this and hope Anand will start to look closely at these types of drives.

zadx - Wednesday, November 2, 2011

I am in the same boat as ArntK and ericgl21, and would love to see how STEC SSDs stack up against others.

stepz - Monday, August 16, 2010

Is the write cache super-capacitor backed? That is basically a hard requirement for using it as table storage under OLTP loads. Most consumer drives will happily lose data in the write cache when the power is cut. For databases that is completely unacceptable: losing any writes will pretty much always result in a corrupt database. And when you turn off write caching, random small write performance goes through the floor. Maybe you could test the write cache behavior with something like this: http://brad.livejournal.com/2116715.html?page=2

Some other points about SSDs for database use:

* If the SSD has a super-cap, then it can be used as a cheap way to get four-digit transaction rates for small to medium-sized databases without getting a RAID card with a battery-backed write cache. A RAM-based cache with backup power is necessary to turn the synchronous IOPS into sequential writes.

* I doubt anyone who cares about the data will run the log as RAID-0. RAID-1 is more likely, with a BBWC even RAID-5 is acceptable.

* For random i/o limited loads (quite common for databases), this type of drive will beat spinning rust by more than a factor of 10 in IOPS per $.
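A rough first check, short of pulling the plug with a tool like the one linked above, is to time how many fsync'd small writes per second a drive acknowledges. A spinning disk that really flushes each write manages roughly one per platter revolution (about 250/s at 15K RPM, a rule of thumb), so a much higher rate from a disk, or from an SSD without power-loss protection, hints that a volatile cache is acknowledging writes early. This is a heuristic sketch, not a durability test; only a power-loss test shows whether acknowledged data survives. Note also that Apple's fsync(2) man page says fsync does not force the drive's own cache to flush on macOS (fcntl's F_FULLFSYNC does).

```python
import os
import tempfile
import time


def synced_writes_per_second(path: str, count: int = 200, block: int = 4096) -> float:
    """Append `count` blocks of `block` bytes to `path`, calling os.fsync()
    after every write, and return the observed rate of synced writes."""
    data = b"\0" * block
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
    try:
        start = time.perf_counter()
        for _ in range(count):
            os.write(fd, data)
            os.fsync(fd)  # ask the OS to push this write down to the device
        elapsed = time.perf_counter() - start
    finally:
        os.close(fd)
    return count / max(elapsed, 1e-9)


def rotational_ceiling(rpm: int) -> float:
    """Rule of thumb: a disk that honors each flush manages roughly one
    synced write per platter revolution."""
    return rpm / 60


if __name__ == "__main__":
    # Point this at a directory on the drive under test; the system temp
    # directory may live on a different device (or in RAM).
    with tempfile.TemporaryDirectory() as directory:
        rate = synced_writes_per_second(os.path.join(directory, "probe.bin"))
    print(f"{rate:,.0f} synced 4KB writes/s "
          f"(15K RPM ceiling is ~{rotational_ceiling(15_000):.0f}/s)")
```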

bok-bok - Tuesday, August 17, 2010

well said!