Micron Announces RealSSD P300, SLC SSD for Enterprise

by Anand Lal Shimpi on August 12, 2010 6:00 AM EST

Posted in: SSDs, Storage, Micron, RealSSD P300

Buying an SSD for your notebook or desktop is nice. You get more consistent performance. Applications launch extremely fast. And if you choose the right SSD, you really curb the painful slowdown of your PC over time. I’ve argued that an SSD is the single best upgrade you can do for your computer, and I still believe that to be the case. However, at the end of the day, it’s a luxury item. It’s like saying that buying a Ferrari will help you accelerate quicker. That may be true, but it’s not necessary.

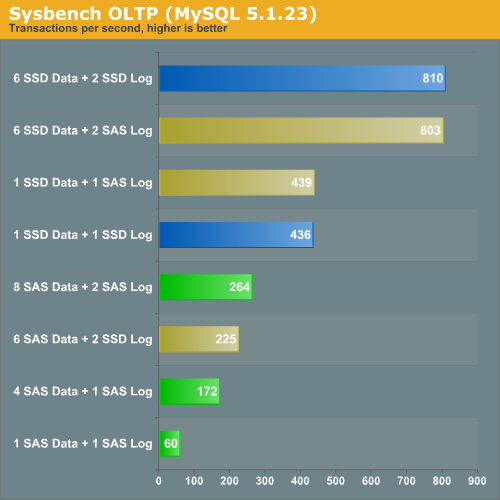

In the enterprise world, however, SSDs are even more important. Our own Johan de Gelas had his first experience with an SSD in one of his enterprise workloads a year ago. This OLTP test looks at the performance difference between 15K RPM SAS drives and SSDs in a database server. Johan experimented with using SAS HDDs vs. SSDs for both data and log drives in the server.

Using a single SSD (Intel’s X25-E) for a data drive and a single SSD for a log drive is faster than running eight 15,000RPM SAS drives in RAID 10 plus another two in RAID 0 as a logging drive.

Not only is performance higher, but total power consumption is much lower. Under full load eight SAS drives use 153W, compared to 2 - 4W for a single Intel X25-E. There are also reliability benefits. While mechanical storage requires redundancy in case of a failed disk, SSDs don’t. As long as you’ve properly matched your controller, NAND and ultimately your capacity to your workload, an SSD should fail predictably.

The overwhelming number of poorly designed SSDs on the market today is one reason most enterprise customers are unwilling to consider SSDs. The high margins available in the enterprise market are the main reason SSD makers are so eager to conquer it.

Micron’s Attempt

Just six months ago we were first introduced to Crucial’s RealSSD C300. Not only was it the first SSD we tested with a native 6Gbps SATA interface, but it was also one of the first to truly outperform Intel across the board. A few missteps later, we found the C300 to be a good contender, but our second choice behind SandForce-based drives like the Corsair Force or OCZ Vertex 2.

Earlier this week Micron, Crucial’s parent company, called me up to talk about a new SSD. This drive would only ship under the Micron name as it’s aimed squarely at the enterprise market. It’s the Micron RealSSD P300.

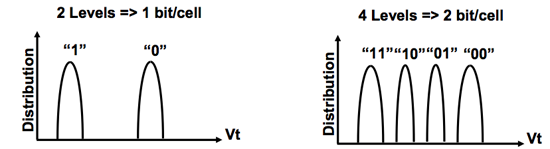

The biggest difference between the P300 and the C300 is that the former uses SLC (Single Level Cell) NAND Flash instead of MLC NAND. As you may remember from my earlier SSD articles, SLC and MLC NAND are nearly identical - they just store different amounts of data per NAND cell (1 bit vs. 2 bits).

SLC (left) vs. MLC (right) NAND

The benefits of SLC are higher performance and a longer lifespan. The downside is cost. SLC NAND is at least 2x the price of MLC NAND. You take up the same die area as MLC but you get half the storage. It’s also produced in lower quantities so you get at least twice the cost.

| | SLC NAND flash | MLC NAND flash |
|---|---|---|
| Random Read | 25 µs | 50 µs |
| Erase | 2 ms per block | 2 ms per block |
| Programming | 250 µs | 900 µs |

Micron wouldn’t share pricing but it expects drives to be priced under $10/GB. That’s actually cheaper than Intel’s X25-E, despite being 2 - 3x more than what we pay for consumer MLC drives. Even if we’re talking $9/GB that’s a bargain for enterprise customers if you can replace a whole stack of 15K RPM HDDs with just one or two of these.

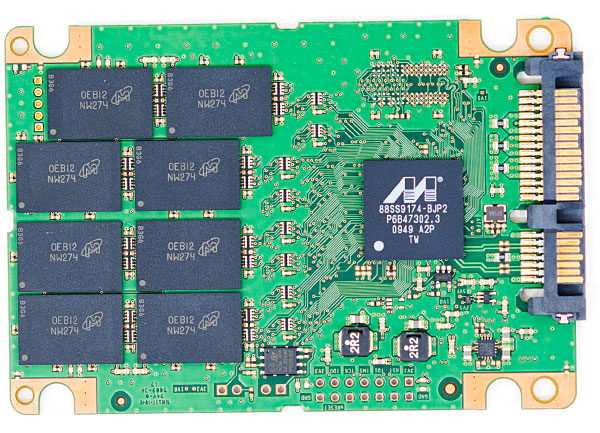

The controller in the P300 is nearly identical to what was in the C300. There are two main differences. First, the P300’s controller supports ECC/CRC from the controller down into the NAND. Micron was unable to go into any more specifics on what was protected via ECC vs. CRC. Second, in order to deal with the faster write speed of SLC NAND, the P300’s internal buffers and pathways operate at a quicker rate. Think of the P300’s controller as a slightly evolved version of what we have in the C300, with ECC/CRC and SLC NAND support.

The C300

The rest of the controller specs are identical. We still have the massive 256MB external DRAM and unchanged cache size on-die. The Marvell controller still supports 6Gbps SATA although the P300 doesn’t have SAS support.

Micron P300 Specifications

| | 50GB | 100GB | 200GB |
|---|---|---|---|
| Formatted Capacity | 46.5GB | 93.1GB | 186.3GB |
| NAND Capacity | 64GB SLC | 128GB SLC | 256GB SLC |
| Endurance (Total Bytes Written) | 1 Petabyte | 1.5 Petabytes | 3.5 Petabytes |
| MTBF | 2 million device hours | 2 million device hours | 2 million device hours |
| Power Consumption | < 3.8W | < 3.8W | < 3.8W |

The P300 will be available in three capacities: 50GB, 100GB and 200GB. The drives ship with 64GB, 128GB and 256GB of SLC NAND on them by default. Roughly 27% of the drive capacity is designated as spare area for wear leveling and bad block replacement. This is in line with other enterprise drives like the original 50/100/200GB SandForce drives and the Intel X25-E. Micron’s P300 datasheet seems to imply that the drive will dynamically use unpartitioned LBAs as spare area. In other words, if you need more capacity or have a heavier workload you can change the ratio of user area to spare area accordingly.
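The "roughly 27%" spare-area figure can be reproduced from the spec table. The sketch below is only a back-of-the-envelope check: it assumes the NAND and formatted capacities are both expressed in binary gigabytes, and the names `P300_MODELS` and `spare_fraction` are made up for illustration rather than taken from any Micron tool or datasheet.

```python
# Capacities copied from the P300 spec table as (NAND, formatted) pairs.
# Treating both columns as binary gigabytes (GiB) is an assumption.
P300_MODELS = {
    "50GB": (64, 46.5),
    "100GB": (128, 93.1),
    "200GB": (256, 186.3),
}


def spare_fraction(nand_gib, user_gib):
    """Return the fraction of raw NAND that is not exposed to the host."""
    if not 0 < user_gib <= nand_gib:
        raise ValueError("user capacity must be positive and no larger than the NAND")
    return (nand_gib - user_gib) / nand_gib


if __name__ == "__main__":
    for name, (nand, formatted) in P300_MODELS.items():
        print(f"{name}: {spare_fraction(nand, formatted):.1%} spare area")

    # Hypothetical case: if unpartitioned LBAs really do count as spare area,
    # partitioning only 32 of the 46.5 formatted GiB on the smallest drive
    # would leave half of the NAND as spare.
    print(f"50GB, 32 GiB partitioned: {spare_fraction(64, 32):.1%} spare area")
```

All three models land a little above 27%, which matches the figure quoted above. The last line only illustrates what the datasheet seems to imply about trading user area for spare area; it has not been verified against a drive.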

Micron shared some P300 performance data with me:

Micron P300 Performance Specifications

| | Peak | Sustained |
|---|---|---|
| 4KB Random Read | Up to 60K IOPS | Up to 44K IOPS |
| 4KB Random Write | Up to 45.2K IOPS | Up to 16K IOPS |
| 128KB Sequential Read | Up to 360MB/s | Up to 360MB/s |
| 128KB Sequential Write | Up to 275MB/s | Up to 255MB/s |
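To compare the random (IOPS) and sequential (MB/s) rows on the same scale, the IOPS figures can be converted to bandwidth. This is a rough sketch, not a benchmark: it assumes "4KB" and "128KB" mean binary kilobytes and "MB/s" means decimal megabytes per second, and the function names are invented for this example.

```python
KIB = 1024       # assumption: block sizes in the table are binary kilobytes
MB = 1_000_000   # assumption: MB/s in the table means decimal megabytes


def iops_to_mb_per_s(iops, block_kib):
    """Convert an IOPS figure at a given block size into MB/s."""
    return iops * block_kib * KIB / MB


def mb_per_s_to_iops(mb_per_s, block_kib):
    """Convert a MB/s figure at a given block size into IOPS."""
    return mb_per_s * MB / (block_kib * KIB)


if __name__ == "__main__":
    # Micron's "up to" figures for 4KB random transfers.
    for label, iops in [
        ("random read, peak", 60_000),
        ("random read, sustained", 44_000),
        ("random write, peak", 45_200),
        ("random write, sustained", 16_000),
    ]:
        print(f"{label}: {iops_to_mb_per_s(iops, 4):.1f} MB/s")

    # The 360MB/s sequential read figure, expressed as 128KB operations.
    print(f"sequential read: {mb_per_s_to_iops(360, 128):.0f} IOPS at 128KB")
```

Under these assumptions, the peak 4KB random read figure works out to about 246 MB/s, and the sustained 4KB random write figure to about 66 MB/s, compared with 360MB/s and 255MB/s for the sequential rows.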

The data looks good, but I’m working on our Enterprise SSD test suite right now so I’ll hold off on any judgment until we get a drive to test. Micron is sampling drives today and expects to begin mass production in October.

49 Comments


fotoguy42 - Saturday, August 14, 2010

Note that all of the read speed tests will not really (at all?) be affected by whether TRIM is supported.

TRIM is there to reset existing sections that have been written to, and then marked as available. Without TRIM, the SSD will have to do that at the same time it is trying to write things down, versus doing it at other times.

vol7ron - Thursday, August 12, 2010

How is it, many years after the fact, that Intel is still one of (if not "the") best SSD providers?

I don't get why Intel's stock is so low, even though they've had their legal issues. Google's stock goes up/down what Intel's price is on a daily basis.

Intel did the SSD right, straight from the gate. They may have had some glitches and it may have lacked some functionality (like TRIM) or the ability to update the firmware, but their SSDs didn't stutter and they are still top performing in their class.

I am still waiting for the price to drop.

As stated before, my ideal market prices:

256GB SSD at ~$175

5TB HDD at ~$175

andersx - Friday, August 13, 2010

Wait ten years, then add a zero to those capacities.

bok-bok - Thursday, August 12, 2010

Since the dawn of time (or maybe only the Tandy 2000), we have awaited the day when the storage subsystem would cease to be a bottleneck, ruin our marriages, and devour our children.

And once I sat down at a core i5 laptop with an Intel SSD-based RAID 0 array, I felt like that moment had arrived.

I had debated about what I was going to devote upgrade money to in my own laptop, and I was ready to go for more RAM. Then I experienced the performance boost that comes with the price tag, and it stopped being just a benchmark number on a website.

And now I feel like I was in a state of denial driven by a penny-wise mentality. I mean, we've always known it was the damn disk. Ever since I was a kid playing Hero's Quest on my dad's Tandy, and the game action would halt as the 20mb hard drive started chugging and chirping, loading the next epic encounter, my life has been affected by mechanical storage.

While I appreciate andersx's example, where the capacity needs and related price tag don't scale well, I believe there are countless business/enterprise cases where the expenditure is beyond justified.

I mean, what's your time worth?

Consider your daily business processes; to what extent are they hindered by the various friction you encounter while interfacing with technology? How do you qualify the subconscious effect of all that friction on our well being? And it does have an impact.

Now imagine an office environment where like many organizations, the staff is pushing older workstations - or even newer ones with certain damnably slow antivirus/endpoint protection applications constantly doing read operations on mechanical spindles. I like my A/V app, but I'm still in-f$%*ing-furiated when the startup scan causes my machine to bring time to a halt. Whatever possibly valuable idea I had that motivated me to open my laptop just got away from me when the wave of frustration swept over me. Multiply that by everyone involved in a business process who endures a similar phenomenon.

And of course there's the quantifiable loss of time (your most precious asset and the thing when you run out, you die).

But there's more. It's not a contest against your own price-performance curve; business is a constant competition. Now I'm not suggesting that the lack of an SSD drive is going to topple your empire. But if an organization misses a high-dollar opportunity due to an accumulated competitive disadvantage, then the "will this save me enough time to justify the cost?" question is irrelevant. The fastest horse wins. If you can afford to deploy SSD, then you almost can't afford not to.

I mean, I don't know; I found the performance gain to be dramatic enough to justify outfitting every machine in the office with a 40gb SSD drive. Even the 4+ year old ones, even the D310s. I haven't tested the theory yet, but I'm guessing that the oldest computers will probably see the greatest benefit. Provided the caps on the motherboards don't crap out first, that is.

But if they do, just pull the drives out.

After all that it will be the Exchange Server's turn.


It's hard to qualify the psychological impact of friction in our computing environment, but consider how long we've been enduring the storage bottleneck

Iketh - Thursday, August 12, 2010

BRAVO!!!!

LokutusofBorg - Saturday, August 14, 2010

I got my work to agree to a 120GB SSD in my dev workstation and it has proved itself. So I've been pushing really hard lately to start testing PCI-x SSD drives for our DB servers. A single FusionIO drive delivers 6 figure SAN (160 drives) performance. I've been reading lots on this lately, but this article is the one that really got me thinking: http://www.brentozar.com/archive/2010/03/fusion-io...

Gilbert Osmond - Friday, August 13, 2010

Yes, this year IS the corner around which the storage market is turning. Peak-HDD (a-la Peak Oil) is just about now or has already passed.

The dude over at http://www.storagesearch.com has had the mass-storage market figured out for at least 5 years. Articles he wrote 3 to 6 years ago predicted that SSDs would start to penetrate the mass market around now. His predictions for the next 5 years are probably as accurate. No, I'm not a paid shill for his site; I've just read most of what's on it and it's clearly correct.

The widespread adoption of SSDs is underway; spinning HDDs are likely to be extinct within 5 to 7 years, as they themselves made floppy disks extinct in their time. SSD capacities will rise and prices per GB / per TB will only get lower. It's about time.

Necrosaro420 - Friday, August 13, 2010

$600 for 256gb... I'll stick to platters.

Marakai - Saturday, August 14, 2010

I've now been searching for info on this for a while, but can't seem to come up with an answer:

Why is the lack of TRIM not considered an issue for enterprise usage of SSDs?

If the business case outweighs the cost, I'd build an array with RAID10, possibly RAID50.

I lose TRIM. As Anand has demonstrated that leads to big performance drops over time in consumer usage. Why does this not seem to be an issue in enterprise usage - going *purely* by the lack of information on the Intertubes addressing this issue?

Marakai - Saturday, August 14, 2010

Sorry for two comments back to back, but as I'm seriously looking at a pilot project using enterprise level SSDs, these two issues are on the top of my mind:

The second one, besides RAID and TRIM is the question whether we are simply shifting the performance bottleneck when moving to enterprise SSDs in storage arrays.

The storage array most likely will be fibre-channel, or increasingly, trunked giga- or 10giga-Ethernet.

When will that become the bottleneck where the SSD array could deliver the data, but the pipe to the host is too slow? Are we going to go back to DAS? Will Infiniband have its day?