Supermicro's Twin: Two nodes and 16 cores in 1U

by Johan De Gelas on May 28, 2007 12:01 AM EST. Posted in IT Computing

Supermicro 6015T-T/6015T-INF Server

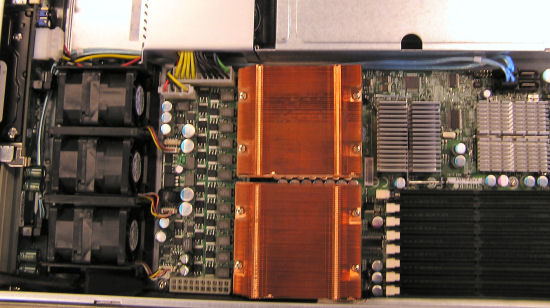

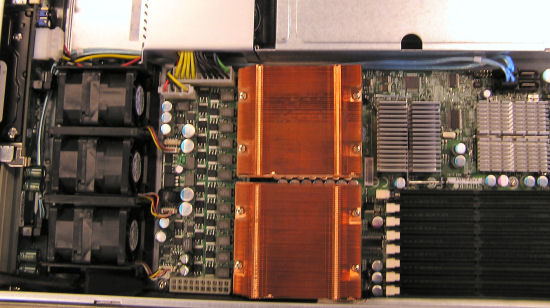

Two models exist: the 6015T-INF and the 6015T-T. The only difference is that the former has an additional InfiniBand connector. Supermicro sent us the 6015T-INF. The Superserver 6015T-INF consists of two Supermicro X7DBT-INF dual processor server boards. Each board is based on Intel's 5000P chipset and supports two Intel 53xx/51xx/50xx series processors. Let's take a look at the scheme below.

The Intel 5000P chipset has two I/O units, one with two x8 PCIe links and a second one with an x8 + x4 PCIe configuration. The first PCIe x8 connection of the first I/O unit is used to attach the 20 Gbit/s InfiniBand connection to the Northbridge (MCH). PCIe x8 offers 2 GB/s full duplex, which roughly matches the peak data bandwidth of the InfiniBand connection. InfiniBand is a point-to-point bidirectional serial interconnect, but that probably doesn't say much. To simplify, we could describe it as a technology similar to PCI Express, but one that can also be used externally as a network technology like Ethernet, with the difference that this switched networking technology:

- offers more than 10 times the bandwidth of Gigabit Ethernet (1500 MB/s vs. 125 MB/s)

- has 15 to 40 times lower latency than Gigabit Ethernet (2.25 µs vs. 100 µs)

- at a ten times lower CPU load (5% versus 50%)

Latency-sensitive cluster applications that exchange a lot of (synchronizing) data between the nodes are a prime example of where one should use InfiniBand. A good example is Fluent, a Computational Fluid Dynamics (CFD) package. An article written by Gilad Shainer, a senior technical marketing manager at Mellanox, shows how using low latency InfiniBand offers much better scaling over multiple nodes than Gigabit Ethernet.

As you can see, with more than 32 cores (or 16 dual-core Woodcrest CPUs), Gigabit Ethernet severely limits the performance scaling.

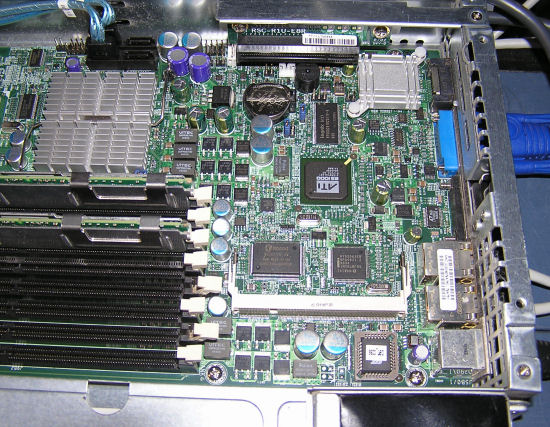
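The bandwidth figures quoted for the x8 PCIe link and the 20 Gbit/s InfiniBand connection can be checked with some quick arithmetic. The sketch below is only a back-of-the-envelope calculation and rests on assumptions the article does not spell out: first-generation PCIe signaling at 2.5 GT/s per lane, a 4x DDR InfiniBand link at 5 Gbit/s per lane, and 8b/10b encoding on both. The function name `data_rate_gbytes` is purely illustrative.

```python
# Back-of-the-envelope link bandwidth check.
# Assumptions (not stated in the article): PCIe 1.x at 2.5 GT/s per lane,
# a 4x DDR InfiniBand link at 5 Gbit/s per lane, 8b/10b encoding on both.

ENCODING_EFFICIENCY = 8 / 10  # 8b/10b: 8 data bits for every 10 bits on the wire


def data_rate_gbytes(lanes: int, gbit_per_lane: float) -> float:
    """Usable bandwidth per direction in GB/s (1 GB = 10^9 bytes)."""
    raw_gbit = lanes * gbit_per_lane               # signaling rate of the whole link
    return raw_gbit * ENCODING_EFFICIENCY / 8      # strip encoding overhead, bits -> bytes


pcie_x8 = data_rate_gbytes(lanes=8, gbit_per_lane=2.5)
infiniband = data_rate_gbytes(lanes=4, gbit_per_lane=5.0)
gigabit_ethernet = 1.0 / 8  # 1 Gbit/s line rate, ignoring framing overhead

# Ratio of the bandwidth figures quoted in the article (1500 MB/s vs. 125 MB/s).
quoted_ratio = 1500 / 125

print(f"PCIe x8:          {pcie_x8:.3f} GB/s per direction")
print(f"InfiniBand 4x DDR: {infiniband:.3f} GB/s per direction")
print(f"Gigabit Ethernet: {gigabit_ethernet:.3f} GB/s per direction")
print(f"Quoted InfiniBand vs. Gigabit Ethernet ratio: {quoted_ratio:.0f}x")
```

Under these assumptions both links come out at the same usable rate per direction, which is consistent with the statement that the x8 slot roughly matches the InfiniBand port. Real-world throughput will be lower because of protocol overhead.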

The second PCIe x8 is used as a PCIe slot. PCI-X is not an option due to the limited space available on each X7DB motherboard. So that is one of the limitations of the Twin: there is only one PCIe expansion slot available, which can contain a 2U height card. The PCIe x8 of the second I/O unit offers Intel I/O Acceleration Technology (IOAT) to the dual Gigabit Ethernet controller. TCP/IP processing is only a small part of the CPU load that results from intensive networking activity. Especially with small data blocks, handling the interrupts and signals consumes many more cycles than pure TCP/IP processing.
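To get a feel for why small data blocks are so costly, it helps to look at how many packets per second a saturated Gigabit link has to handle at different block sizes. The sketch below is a rough illustration only: it assumes one packet per block, ignores framing overhead and interrupt coalescing, and the block sizes are hypothetical examples rather than values taken from our tests.

```python
# Rough illustration: at a fixed line rate, the number of packets (and with it
# the number of per-packet events such as interrupts) grows as blocks shrink.
# Assumes one packet per block; ignores framing overhead and interrupt coalescing.

LINE_RATE_BYTES_PER_S = 125_000_000  # Gigabit Ethernet: 1 Gbit/s = 125 MB/s


def packets_per_second(block_size_bytes: int) -> float:
    """Packets needed per second to saturate the link with blocks of this size."""
    return LINE_RATE_BYTES_PER_S / block_size_bytes


# Hypothetical block sizes, from tiny messages up to a near-full Ethernet payload.
for size in (64, 512, 1460):
    print(f"{size:>5}-byte blocks: {packets_per_second(size):>12,.0f} packets/s")
```

The per-packet work stays roughly constant while the payload shrinks, so the overhead per byte transferred goes up quickly. How much of that actually reaches the CPU depends on the NIC, the driver and its coalescing settings.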

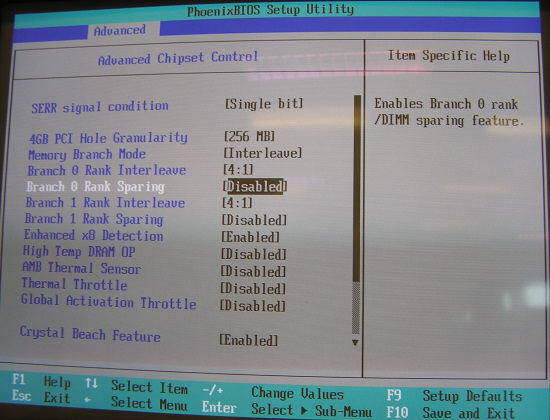

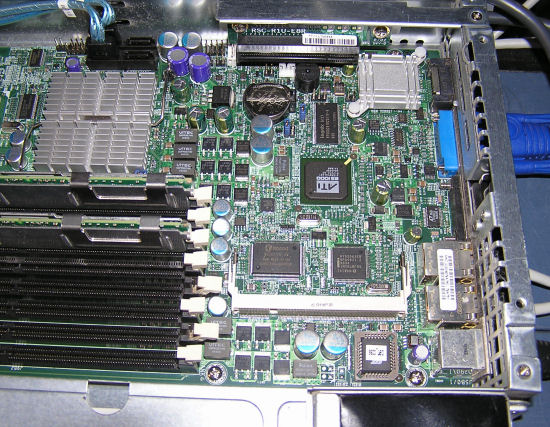

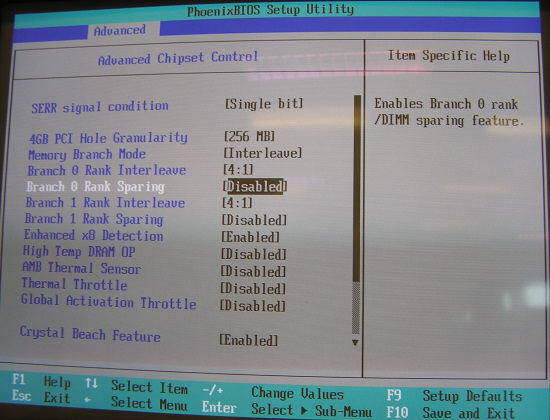

The X7DBT has eight 240-pin DIMM sockets that can support up to 32 GB of ECC FBD (Fully Buffered DIMM) DDR2-667/533 SDRAM and supports memory sparing.

All memory modules used to populate the system should be the same size, type and speed.

Each board has four SATA connectors, but only two of them are connected to the storage backplane. That means that in reality, you are limited to two SATA disks per node. This is not a real limitation as this server is not intended to be a fileserver or database server. For a front end or HPC cluster node, two SATA disks in RAID-1 should be sufficient.

As you can see, expansion is limited to one half-height PCIe card, a result of fitting two nodes in a 1U server.


28 Comments


SurJector - Tuesday, June 5, 2007 - link

I've just reread your article. I'm a little bit surprised by the power:

| | idle | load |
|---|---|---|
| 1 node | 160 | 213 |
| 2 nodes | 271 | 330 |
| increase | 111 | 117 |

There is something wrong: the second node adds only 6W (5.5W counting efficiency) of power consumption?

Could it be that some power-saving options are not set on the second node (SpeedStep, or similar)?

Nice article though, I bet I'd love to have a rack of them for my computing farm. Either that or wait (forever?) for the Barcelona equivalents (they should have better memory throughput).

Super Nade - Saturday, June 2, 2007 - link

Hi,

The PSU is built by Lite-On. I owned the PWS-0056 and it was built like a tank. Truly server grade build quality.

Regards,

Super Nade, OCForums.

VooDooAddict - Tuesday, May 29, 2007 - link

Here are the VMWare ESX issues I see. ... They basically compound the problem.

- No Local SAS controller. (Already mentioned)

- No Local SAS requires booting from a SAN. This means you will use your only PCIe slot for a SAN Hardware HBA as ESX can't boot with a software iSCSI.

- Only Dual NICs on board and with the only expansion slot taken up by the SAN HBA (Fiber Channel or iSCSI) you already have a less than ideal ESX solution. --- ESX works best with a dedicated VMotion port, Dedicated Console Port, and at least one dedicated VM port. Using this setup you'll be limited to a dedicated VMotion and a Shared Console and VM Port.

The other issue is of course the non redundant power supply. Yes, ESX has a High Availability mode where it restarts VMs from downed hardware, but it restarts VMs on other hardware, it doesn't preserve them. You could very easily lose data.

Then probably the biggest issue ... support. Most companies dropping the coin on ESX server are only going to run it on a supported platform. With supported platforms from Dell, HP and IBM being comparably priced and the above issues, I don't see them winning ANY of the ESX server crowd with this unit.

I could however see this as a nice setup for the VMWare (free) Virtual Server crowd using it for virtualized Dev and/or QA environments where low cost is a larger factor than production level uptime.

JohanAnandtech - Wednesday, May 30, 2007 - link

Superb feedback. I feel however that you are a bit too strict on the dedicated ports. A dedicated console port seems a bit exaggerated, and as you indicate a shared Console/vmotion seems acceptable to me.

DeepThought86 - Monday, May 28, 2007 - link

I thought it interesting to note how poor the scaling was on the web server benchmark when going from 1S to 2S 5345 (107 URLs/s to 164). However the response times scaled quite well.

Going from 307 ms to 815 ms (2.65) with only a clockspeed difference of 2.33 vs 1.86 (1.25) is completely unexpected. Since the architecture is the same, how can a 1.25 factor in clock lead to a 2.65 factor in performance? Then I remembered you're varying TWO factors at once making it impossible to compare the numbers.... how dumb is that in benchmark testing??

Honestly, it seems you guys know how to hook up boxes but lack the intelligence to actually select test cases that make sense, not to mention analyse your results in a meaningful way

It's also a pity you guys didn't test with the AMD servers to see how they scaled. But I guess the article is meant to pimp Supermicro and not point out how deficient the Intel system design is when going from 4-cores to 8

JohanAnandtech - Tuesday, May 29, 2007 - link

I would ask you to read my comments again. Webserver performance cannot be measured by one single metric unless you can keep response time exactly the same. In that case you could measure throughput. However in the real world, response time is never the same, and our test simulates real users. The reason for this "superscaling" of response times is that the slower configurations have to work with a backlog. Like it or not, that is what you see on a webserver.

We have done that already here for a number of workloads:

http://www.anandtech.com/cpuchipsets/intel/showdoc...

This article was about introducing our new benches, and investigating the possibilities of this new supermicro server. Not every article can be an AMD vs Intel article.

And I am sure that 99.9% of the people who will actually buy a supermicro Twin after reading this review will be very pleased with it, as it is an excellent server for its INTENDED market. So there is nothing wrong with giving it positive comments as long as I show the limitations.

TA152H - Tuesday, May 29, 2007 - link

Johan,

I think it's even better that you didn't bring in the AMD/Intel nonsense, because it tends to take focus away from important companies like Supermicro. A lot of people aren't even aware of this company, and it's an extremely important company that makes extraordinary products. Their quality is unmatched, and although they are more expensive, it is excellent to have the option of buying a top quality piece. It's almost laughable, and a bit sad, when people call Asus top quality, or a premium brand. So, if nothing else, you brought an often ignored company into people's minds. Sadly, on a site like this where performance is what is generally measured, if you guys reviewed the motherboards, it would appear to be a mediocre product at best. So, your type of review helps put things in their proper perspective; they are a very high quality, reliable, innovative company that is often overlooked, but has a very important role in the industry.

Now, having said that (you didn't think I could be exclusively complimentary, did you?), when are you guys going to evaluate Eizo monitors??? I mean, how often can we read articles on junk from Dell and Samsung, et al, wondering what the truly best monitors are like? Most people will buy mid-range to low-end (heck, I still buy Samsung monitors and Epox motherboards sometimes because of price), but I also think most people are curious about how the best is performing anyway. But, let's give you credit where it's due, it was nice seeing Supermicro finally get some attention.

DeepThought86 - Monday, May 28, 2007 - link

Also, looking at your second benchmark I'm baffled how you didn't include a comparison of 1xE5340 vs 2x5340 or 1x5320 vs 2x5320 so we could see scaling. You just have a comparison of Dual vs 2N, where (duh!) the results are similar.

Sure, there's 1x5160 vs 2x5160 but since the number of cores is half we can't see if memory performance is a bottleneck. Frankly, if Intel had given you instruction on how to explicitly avoid showing FSB limitations in server application they couldn't have done a better job.

Oh wait, looks like 2 Intel staffers helped on the project! BIG SURPRISE!

yacoub - Monday, May 28, 2007 - link

http://images.anandtech.com/reviews/it/2007/superm...

Looks like the top DIMM is not fully seated? :D

MrSpadge - Monday, May 28, 2007 - link

Nice one.. not everyone would catch such a fault :)

MrS