The Ampere Altra Review: 2x 80 Cores Arm Server Performance Monster

by Andrei Frumusanu on December 18, 2020 6:00 AM EST

Posted in: Servers, Neoverse N1, Ampere, Altra

SPECjbb MultiJVM - Java Performance

Moving on from SPECCPU, we shift over to SPECjbb2015. SPECjbb is a benchmark developed from the ground up that aims to cover both Java performance and server-like workloads. From the SPEC website:

“The SPECjbb2015 benchmark is based on the usage model of a worldwide supermarket company with an IT infrastructure that handles a mix of point-of-sale requests, online purchases, and data-mining operations. It exercises Java 7 and higher features, using the latest data formats (XML), communication using compression, and secure messaging.

Performance metrics are provided for both pure throughput and critical throughput under service-level agreements (SLAs), with response times ranging from 10 to 100 milliseconds.”

The important thing to note here is that the workload is of a transactional nature that mostly works on the data-plane, between different Java virtual machines, and thus threads.

We’re using the MultiJVM test method, where all the benchmark components (the controller, server and client virtual machines) are running on the same physical machine.

The JVM runtime we’re using is OpenJDK 15 on both x86 and Arm platforms. The sub-versions are not exactly the same, but they are the closest we could get:

Altra system:

openjdk 15.0.1 2020-10-20

OpenJDK Runtime Environment 20.9 (build 15.0.1+9)

OpenJDK 64-Bit Server VM 20.9 (build 15.0.1+9, mixed mode, sharing)

EPYC & Xeon systems:

openjdk 15 2020-09-15

OpenJDK Runtime Environment (build 15+36-Ubuntu-1)

OpenJDK 64-Bit Server VM (build 15+36-Ubuntu-1, mixed mode, sharing)

Furthermore, we’re configuring SPECjbb’s runtime settings with the following configurables:

SPEC_OPTS_C="-Dspecjbb.group.count=$GROUP_COUNT -Dspecjbb.txi.pergroup.count=$TI_JVM_COUNT -Dspecjbb.forkjoin.workers=N -Dspecjbb.forkjoin.workers.Tier1=N -Dspecjbb.forkjoin.workers.Tier2=1 -Dspecjbb.forkjoin.workers.Tier3=16"

Where N=160 for 2S Altra test runs, N=80 for 1S Altra test runs, N=112 for 2S Xeon, N=56 for 1S Xeon, and N=128 for both 2S and 1S on the EPYC system. I tried running 256 or 160 threads on the 2S EPYC configuration, but the benchmark would error out with a critical timeout and I wasn’t able to fully debug why.
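As a rough sketch of how these properties fit together, the Python snippet below assembles the SPEC_OPTS_C string for a given run. The names `WORKERS` and `spec_opts` are illustrative helpers, not part of SPECjbb, and the group count of 8 and transaction-injector count of 1 used in the example call are placeholders, since the actual values of $GROUP_COUNT and $TI_JVM_COUNT aren't spelled out here.

```python
# Worker-thread count N per (system, socket count), as listed in the text above.
WORKERS = {
    ("Altra", 2): 160,
    ("Altra", 1): 80,
    ("Xeon", 2): 112,
    ("Xeon", 1): 56,
    ("EPYC", 2): 128,
    ("EPYC", 1): 128,
}


def spec_opts(group_count, ti_jvm_count, n):
    """Build the -D property string; Tier2=1 and Tier3=16 are fixed as in the article."""
    props = {
        "specjbb.group.count": group_count,
        "specjbb.txi.pergroup.count": ti_jvm_count,
        "specjbb.forkjoin.workers": n,
        "specjbb.forkjoin.workers.Tier1": n,
        "specjbb.forkjoin.workers.Tier2": 1,
        "specjbb.forkjoin.workers.Tier3": 16,
    }
    return " ".join(f"-D{key}={value}" for key, value in props.items())


# Placeholder group and injector counts (assumptions, see the note above).
print(spec_opts(group_count=8, ti_jvm_count=1, n=WORKERS[("Altra", 2)]))
```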

In terms of JVM options, we’re limiting ourselves to bare-bone options to keep things simple and straightforward:

Altra & EPYC system:

JAVA_OPTS_C="-server -Xms2g -Xmx2g -Xmn1536m"

JAVA_OPTS_TI="-server -Xms2g -Xmx2g -Xmn1536m"

JAVA_OPTS_BE="-server -Xms48g -Xmx48g -Xmn42g -XX:+AlwaysPreTouch"

Xeon system:

JAVA_OPTS_C="-server -Xms2g -Xmx2g -Xmn1536m"

JAVA_OPTS_TI="-server -Xms2g -Xmx2g -Xmn1536m"

JAVA_OPTS_BE="-server -Xms172g -Xmx172g -Xmn156g -XX:+AlwaysPreTouch"

The reason the Xeon system is running a larger back-end heap is that we’re running a single NUMA node per socket on it, while for the Altra and EPYC we’re running four NUMA nodes per socket for maximised throughput. For the 2S figures this means we have 8 back-ends running for the Altra and EPYC and 2 for the Xeon, and naturally half of those numbers for the 1S benchmarks. The back-ends and transaction injectors are affinitised to their local NUMA node with numactl --cpunodebind and --membind, while the controller is called with --interleave=all.
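To make the layout concrete, here is a minimal Python sketch that derives the back-end count and the numactl prefixes for each configuration. It assumes NUMA nodes are numbered contiguously from 0 and that exactly one back-end (with its transaction injectors) is pinned per node; the function name `numactl_prefixes` is hypothetical, and the snippet only prints command prefixes rather than launching anything.

```python
def numactl_prefixes(sockets, nodes_per_socket):
    """Return (controller_prefix, list of per-node prefixes) as strings."""
    controller = "numactl --interleave=all"
    total_nodes = sockets * nodes_per_socket
    per_node = [
        f"numactl --cpunodebind={node} --membind={node}"
        for node in range(total_nodes)
    ]
    return controller, per_node


# 2S Altra or EPYC: four NUMA nodes per socket, so 8 back-ends.
controller, backends = numactl_prefixes(sockets=2, nodes_per_socket=4)
print(controller)
for prefix in backends:
    print(prefix)

# 2S Xeon: one NUMA node per socket, so 2 back-ends.
print(len(numactl_prefixes(sockets=2, nodes_per_socket=1)[1]))
```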

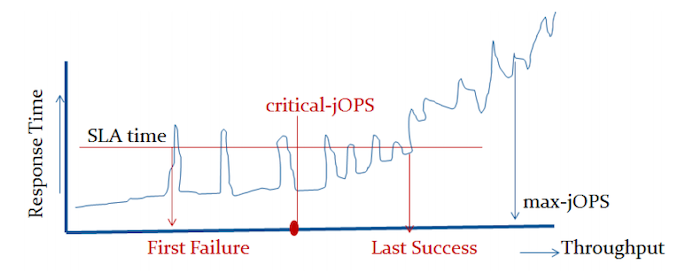

The max-jOPS and critical-jOPS result figures are defined as follows:

"The max-jOPS is the last successful injection rate before the first failing injection rate where the reattempt also fails. For example, if during the RT-curve phase the injection rate of 80000 passes, but the next injection rate of 90000 fails on two successive attempts, then the max-jOPS would be 80000."
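The rule in that quote can be sketched in a few lines of Python. This is only an illustration of the definition, not SPECjbb's own implementation: the step tuples are a made-up representation, and treating a rate whose first attempt fails but whose retry passes as successful is an assumption on my part.

```python
def max_jops(steps):
    """steps: (injection_rate, first_attempt_ok, retry_ok) in increasing rate order.

    Returns the last passing rate before the first rate that fails
    on both the initial attempt and the reattempt.
    """
    last_pass = None
    for rate, first_ok, retry_ok in steps:
        if first_ok or retry_ok:
            last_pass = rate
        else:
            # Both attempts failed: stop at the previous passing rate.
            return last_pass
    return last_pass


# The example from the quote: 80000 passes, 90000 fails twice.
steps = [
    (70000, True, True),
    (80000, True, True),
    (90000, False, False),
    (100000, True, True),
]
print(max_jops(steps))
```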

"The overall critical-jOPS is computed by taking the geomean of the individual critical-jOPS computed at these five SLA points, namely:

• Critical-jOPS overall = Geo-mean of (critical-jOPS @ 10ms, 25ms, 50ms, 75ms and 100ms response time SLAs)

During the RT curve building phase the Transaction Injector measures the 99th percentile response times at each step level for all the requests (see section 9) that are considered in the metrics computations. It then computes the Critical-jOPS for each of the above five SLA points using the following formula:

(first * nOver + last * nUnder) / (nOver + nUnder) "

That’s a lot of technicalities to explain an admittedly complex benchmark, but the gist of it is that max-jOPS represents the maximum transaction throughput of a system until further requests fail, and critical-jOPS is an aggregate geomean transaction throughput within several levels of guaranteed response times, essentially different levels of quality of service.
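For the aggregation step, a short Python sketch of the geometric mean over the five SLA points may help. The per-SLA values below are invented purely for illustration and are not measured results; the per-SLA formula itself is not reproduced, since its terms are defined in the SPEC documentation rather than here.

```python
import math


def geomean(values):
    """Geometric mean, computed via logarithms to avoid overflow on large products."""
    return math.exp(sum(math.log(v) for v in values) / len(values))


# Hypothetical critical-jOPS per SLA point (response-time limit in ms -> jOPS).
per_sla = {10: 10_000, 25: 20_000, 50: 40_000, 75: 80_000, 100: 160_000}

overall = geomean(per_sla.values())
print(f"overall critical-jOPS: {overall:.0f}")
```

Because it is a geometric mean, a poor result at the tight 10ms SLA pulls the overall figure down more than an arithmetic average would, which is why a system with early-degrading response times scores badly on this metric.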

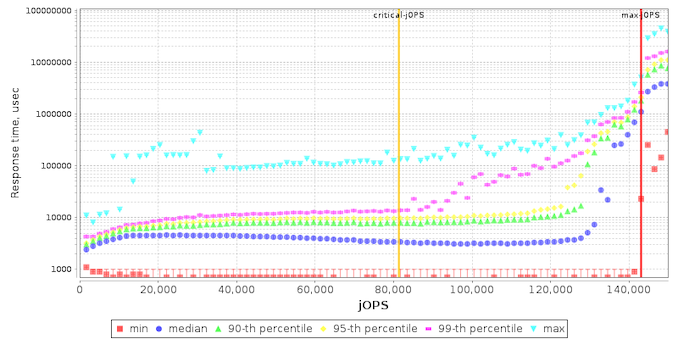

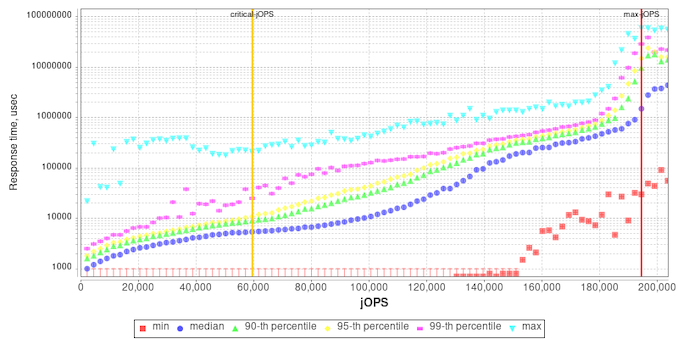

Beyond the result figures, the benchmark keeps detailed track of timings of responses and tracks a few important statistical data-points across a response-time curve, as follows:

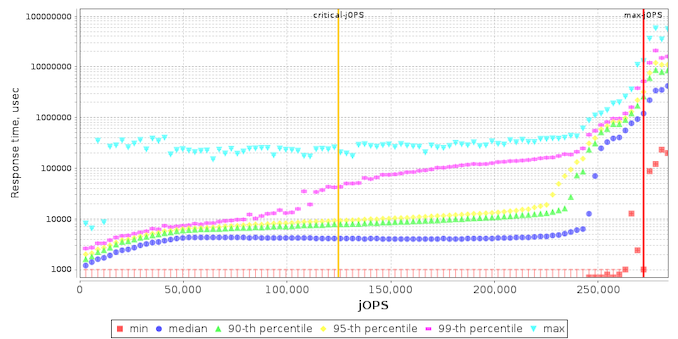

I’m starting off with the EPYC results as they’re sort of standard – the max-jOPS here ends up quite high at over 270k, while the critical-jOPS ends up around 125k. The system still manages to retain 90th percentile response times under 20ms up until 230k which is excellent, with 99th percentile results starting to degrade after 110k jOPS.

On the Xeon system, we see similar flat 90th percentile response times up until around 120k with 99th percentiles starting to degrade following 90k, but in a much tighter curve than on the EPYC system – while the system here has less overall throughput its scaling up to that throughput limit could be considered to be better.

With the EPYC and Xeon systems as context, we’re finally looking at the Altra results, which look very different.

Unlike on the x86 systems, 99th and 90th percentile response times degrade earlier in the throughput curve for the Altra chip. What this reminded me of is the STREAM results from earlier in the review, where we saw that initially a bunch of cores were able to hit peak bandwidth across the memory controllers, but adding further cores to the mix actually degraded performance, pointing to congestion across the mesh interconnect.

It might be possible that the results here across SPECjbb are hitting a similar level of saturation under load, given that there’s a lot of inter-core communication and memory transactions happening.

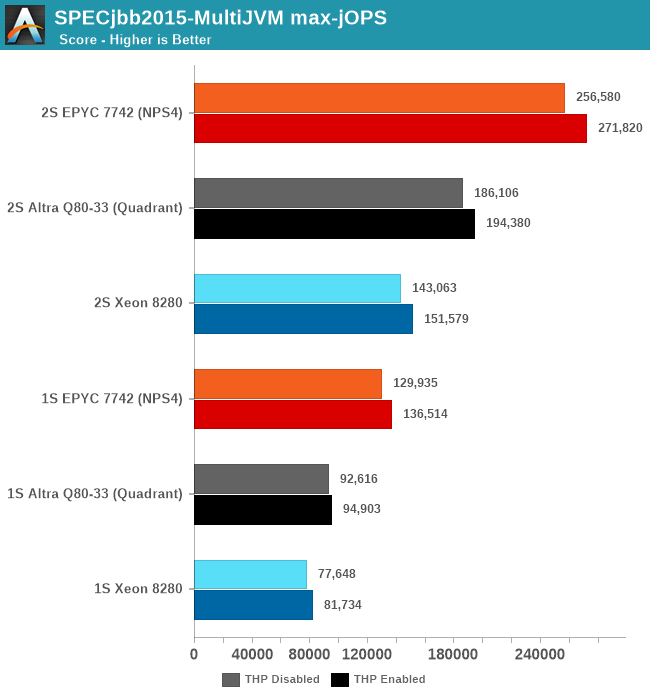

Charting the max-jOPS of the different systems, I ran figures for both 1S and 2S system configurations. Additionally, I tested the benchmark both with transparent huge pages (THP) always enabled and in the default madvise (effectively unused) state, as we’ve seen in the past that this can have a notable impact on the resulting performance.

Whilst the Altra system is able to beat the Xeon, it’s not sufficient to match the EPYC system, which still lies ahead by a considerable margin. The exact reasons for this discrepancy compared to the x86 systems aren’t immediately clear, as we’re dealing with many layers here. AArch64 OpenJDK JVM performance certainly might not be as mature and optimised as its x86-64 counterpart, and there is certainly a rabbit hole of various optimisations and knobs we could have tried to change things, although we still view these simple out-of-the-box default settings as valuable and valid in terms of comparisons.

One thing that did come to mind immediately when I saw the results was SMT. Due to this being a transactional data-plane resident type of workload, SMT will undoubtedly help a lot in terms of performance, so I tested out the EPYC chip figures with SMT disabled, and indeed max-jOPS went down to 209.5k for the 2S THP enabled results, meaning that SMT accounts for a 29.7% performance benefit in this benchmark.
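The arithmetic behind that percentage can be checked with a small Python sketch. Only the SMT-off figure (209.5k) and the 29.7% uplift come from the text; the SMT-on value is derived from them here rather than read from the chart, and the function names are illustrative.

```python
def smt_uplift_pct(smt_on, smt_off):
    """Percentage gain of the SMT-enabled result over the SMT-disabled one."""
    return (smt_on / smt_off - 1.0) * 100.0


def implied_smt_on(smt_off, uplift_pct):
    """Back out the SMT-enabled score from the SMT-disabled score and the uplift."""
    return smt_off * (1.0 + uplift_pct / 100.0)


smt_off = 209_500  # 2S EPYC, THP enabled, SMT disabled (from the text)
smt_on = implied_smt_on(smt_off, 29.7)  # derived, not a quoted measurement
print(f"implied SMT-on max-jOPS: {smt_on / 1000:.1f}k")
print(f"uplift: {smt_uplift_pct(smt_on, smt_off):.1f}%")
```

The implied SMT-on figure lands just above 270k, which is consistent with the EPYC max-jOPS quoted earlier.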

A further indication that the Altra system’s cores are being underutilised and that it is memory-bottlenecked is its power consumption: even when fully loaded in the RT curve, it generally hovered around 170-180W per socket, while the x86 systems were filling up their TDPs.

It’s generally these kinds of workloads that SMT works best on, and that’s why IBM can deploy SMT4 or SMT8 processors. It’s also the type of workload in which Marvell’s ThunderX was trying to carve a niche for itself with SMT4.

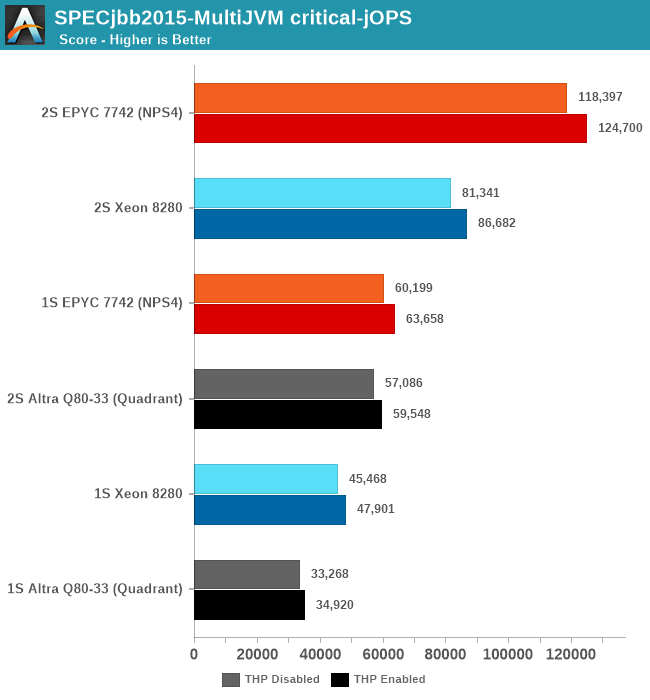

For the critical-jOPS figures, the Altra doesn’t do well at all given its response-time curve. Beyond the lack of SMT (the EPYC here again achieves its high score through a 26.4% contribution of the secondary logical cores), we’re maybe looking at software-side immaturity of out-of-the-box Java performance on Arm systems. The figures here shouldn’t be taken as authoritative proof that Java performance on the Altra sucks, but at least we’re seeing signs that it doesn’t look too great.

148 Comments


mostlyfishy - Friday, December 18, 2020

Interesting article thanks. One thing I missed, what process is this on? 7nm?

It's also interesting that the M1 has demonstrated that with the right sizings, a very wide backend can give you significant single threaded performance. Not really that useful for a server processor where you're likely to be running many threads and want to trade for more cores though.

Josh128 - Friday, December 18, 2020

Yes, 7nm and monolithic, which seems fairly incredible as this thing is huge. Dont have the die size numbers though. Wonder what the yield is on these...

Calin - Friday, December 18, 2020

Maybe there are quite a few more than 80 cores on this beast - in which case you can "eat" some die errors by deactivating cores/complexes/...

Wilco1 - Friday, December 18, 2020

Each Neoverse N1 core with 1MB L2 is just 1.4mm^2, so 80 of them add up to 112mm^2. The die size is estimated at about 350mm^2, so tiny compared to the total ~1100mm^2 in EPYC 7742.

So performance/area is >3x that of EPYC. Now that is efficiency!

andrewaggb - Friday, December 18, 2020

Timing of this article is awkward. We're comparing to the 18 month old 7742 vs the soon to be released Zen 3 Milan parts which based on the already launched Zen 3 desktop parts (and Milan leaks) will be 9-27% faster in the same power envelope.

Cache is a big part of the die size for the AMD chip and the N1 has much less of it which makes the die size smaller. AMD's Desktop IGP parts with way less cache perform very similarly in many workloads to those with the extra cache and the same has been true for intel parts over the years. Some workloads don't benefit much at all from the extra cache and some do which makes choosing the benchmarks more important.

That's not to say the N1 isn't more efficient, but rather that it's hard to make a fair comparison, particularly around die size. They may have similar core counts but have made very different design decisions around cache.

Wilco1 - Friday, December 18, 2020

I don't see how it matters, but Altra is about 9 months old and Neoverse N1 is a sibling of Cortex-A76 which has been available in phones for 2 years. As for Milan, I expect the gain on SPECrate to be about 10-15%. And Milan will be competing with the Altra Max which has 60% more cores which should give ~40% speedup.

Yes the design decisions are quite different, and it is interesting that they end up with similar performance despite the disparity in L3 cache. I suspect that 8 memory channels is becoming a limit, and a future generation with DDR5 will enable more throughput (and even more cores).

Gondalf - Friday, December 18, 2020

I am sorry but looking carefully the heatsink and the application of the thermal paste, we are facing a limit of the reticle thing on 7nm.

We are in front of a 700/800 mm2 thing. On 7nm this means very few units sold and nearly zero market penetration. Same thing on 5nm given the higher core numbers.

In pratics we have nothing in our hands. Another failure in Server market

Andrei Frumusanu - Friday, December 18, 2020

Ampere is doing Altra Max with 128 cores still on 7nm, so this one certainly isn't near hitting reticle limits.

Wilco1 - Friday, December 18, 2020

No it is not anywhere near the reticle limit. You can't estimate the die size from the heatsink, but you estimate it based on similar designs for which we do have numbers. Graviton 2 is a similar design at 30B transistors. This has another 16 cores which adds another 16X1.4 = 22.4mm^2. So around 350mm^2 in N7.

milli - Monday, December 21, 2020

This is just a ridiculous statement. 350mm^2 ... no way.

Firstly, the die size of Graviton 2 is not known.

A realistic comparison would be AMD's Zen2 chiplet which has 3.9b transistors and is 72mm^2.

One would deduce from that, that Graviton 2 is > 550mm^2. Also your napkin calculation to add 22mm2 is flawed. Firstly, you don't know if a N1 core is actually taking 1.4mm^2 in this CPU. Secondly, you're forgetting to add 64 PCI-E lanes.

Let's say, 25mm2 for the CPU and 25mm2 for the lanes. That would bring the total to 600mm^2. Quite a bit bigger to your 350mm^2.