QLC Goes To 8TB: Samsung 870 QVO and Sabrent Rocket Q 8TB SSDs Reviewed

by Billy Tallis on December 4, 2020 8:00 AM EST

AnandTech Storage Bench - The Destroyer

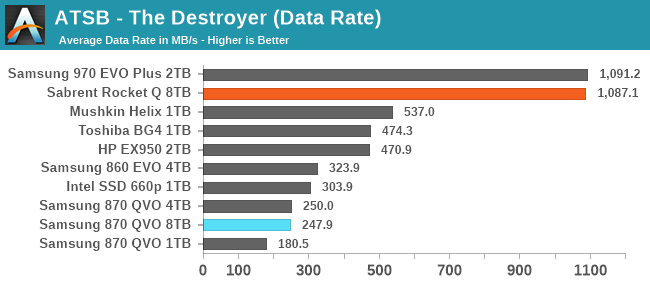

The Destroyer is an extremely long test replicating the access patterns of very IO-intensive desktop usage. A detailed breakdown can be found in this article. Like real-world usage, the drives do get the occasional break that allows for some background garbage collection and flushing caches, but those idle times are limited to 25ms so that it doesn't take all week to run the test. These AnandTech Storage Bench (ATSB) tests do not involve running the actual applications that generated the workloads, so the scores are relatively insensitive to changes in CPU performance and RAM from our new testbed, but the jump to a newer version of Windows and the newer storage drivers can have an impact.

We quantify performance on this test by reporting the drive's average data throughput, the average latency of the I/O operations, and the total energy used by the drive over the course of the test.
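As a rough illustration of how these metrics relate to one another, the sketch below computes them from a list of completed I/O operations. The `IoRecord` type, its field names, and the function names are hypothetical and not part of the ATSB tooling. The percentile uses the nearest-rank method and the data rate is taken over an assumed busy time; the actual test harness may define both differently.

```python
import math
from dataclasses import dataclass


@dataclass
class IoRecord:
    """One completed I/O operation (hypothetical trace format)."""
    is_write: bool
    size_bytes: int
    latency_us: float


def average_data_rate_mbps(records, busy_seconds):
    """Total bytes moved divided by the time spent doing I/O, in MB/s."""
    total_bytes = sum(r.size_bytes for r in records)
    return total_bytes / busy_seconds / 1e6


def average_latency_us(records):
    """Arithmetic mean of per-operation latency."""
    return sum(r.latency_us for r in records) / len(records)


def percentile_latency_us(records, pct=99):
    """Nearest-rank percentile: the smallest latency such that at least
    pct percent of operations completed at or below it."""
    ordered = sorted(r.latency_us for r in records)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def energy_joules(power_samples_w, sample_interval_s):
    """Integrate fixed-interval power samples (watts) into energy (joules)."""
    return sum(power_samples_w) * sample_interval_s


if __name__ == "__main__":
    # Made-up trace: 98 fast 4 KiB reads plus two slower 128 KiB writes.
    trace = [IoRecord(False, 4096, 80.0)] * 98 + [
        IoRecord(True, 131072, 400.0),
        IoRecord(True, 131072, 5000.0),
    ]
    writes = [r for r in trace if r.is_write]
    print("data rate (MB/s):", average_data_rate_mbps(trace, busy_seconds=0.02))
    print("mean latency (us):", average_latency_us(trace))
    print("mean write latency (us):", average_latency_us(writes))
    print("p99 latency (us):", percentile_latency_us(trace, 99))
    print("energy (J):", energy_joules([2.0, 2.5, 3.0], sample_interval_s=0.5))
```

In this made-up trace a single 5000 µs write pulls the mean latency well above the typical 80 µs read, while the 99th percentile captures how bad the slow tail is. That is why it helps to look at both figures, and to split them by reads and writes.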

[Charts: Average Data Rate; Average Latency (overall, read, write); 99th Percentile Latency (overall, read, write); Energy Usage]

The Sabrent Rocket Q turns in shockingly good scores on The Destroyer, matching the Samsung 970 EVO Plus, a high-end TLC SSD. The decidedly less high-end Rocket Q can do this purely because of its extreme capacity: for the first time, we have a drive that can handle The Destroyer entirely in its SLC cache. That makes the results here a bit misleading, as the drive would not be able to sustain this level of performance if it were full enough to shrink the SLC cache to more typical sizes. Power efficiency is also pretty decent here, but again, operating out of the SLC cache helps.
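To put the capacity point in numbers, consider the upper bound on a dynamic SLC cache. A QLC cell stores four bits, and the same cell run in SLC mode stores one, so every gigabyte of cache occupies four gigabytes' worth of QLC capacity. The sketch below assumes, hypothetically, that the firmware is willing to use all free space as cache. Real drives may cap the cache lower, and the function name and fill levels are illustrative only, not measured values for any drive in this review.

```python
QLC_BITS_PER_CELL = 4
SLC_BITS_PER_CELL = 1


def max_dynamic_slc_cache_gb(capacity_gb, used_gb):
    """Upper bound on SLC cache size if all free QLC space is run in SLC mode.

    Assumption: the firmware may convert every free block; actual drives
    often apply a smaller, vendor-specific limit.
    """
    free_gb = max(capacity_gb - used_gb, 0)
    return free_gb * SLC_BITS_PER_CELL / QLC_BITS_PER_CELL


if __name__ == "__main__":
    for capacity in (1000, 2000, 8000):
        for fill in (0.0, 0.8):
            cache = max_dynamic_slc_cache_gb(capacity, capacity * fill)
            print(f"{capacity} GB drive, {fill:.0%} full: "
                  f"up to {cache:.0f} GB of SLC cache")
```

Under these assumptions an empty 8TB drive tops out at 2000 GB of cache, against 250 GB for an empty 1TB drive, and the bound shrinks in proportion as the drive fills.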

Meanwhile, the 8TB Samsung 870 QVO turns in pretty much the same performance scores as the 4TB model, as expected. However, the 8TB drive is a little bit more power-hungry due to the higher part count.

AnandTech Storage Bench - Heavy

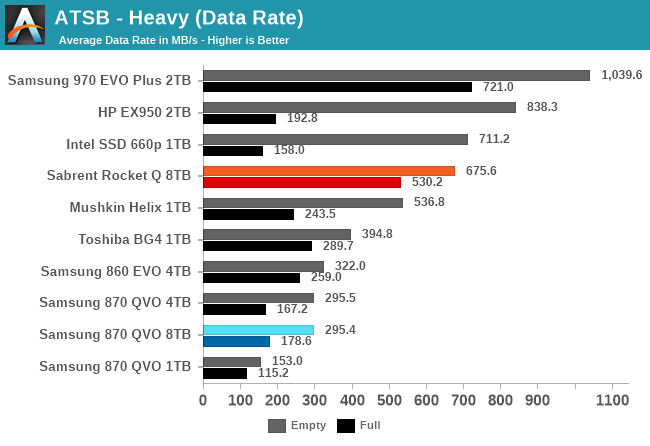

Our Heavy storage benchmark is proportionally more write-heavy than The Destroyer, but much shorter overall. The total writes in the Heavy test aren't enough to fill the drive, so performance never drops down to steady state. This test is far more representative of a power user's day to day usage, and is heavily influenced by the drive's peak performance. The Heavy workload test details can be found here. This test is run twice, once on a freshly erased drive and once after filling the drive with sequential writes.

[Charts: Average Data Rate; Average Latency (overall, read, write); 99th Percentile Latency (overall, read, write); Energy Usage]

The Heavy test doesn't allow the Sabrent Rocket Q a unique advantage from its massive SLC cache; the smaller high-end NVMe drives can also make good use of their caches and overtake the Rocket Q's performance. However, it does appear that the sheer capacity of the 8TB Rocket Q continues to help significantly on the full-drive test runs. We haven't measured it directly, but I suspect the minimum SLC cache size reached when the drive is full is still quite a bit larger than what the 2TB and smaller drives have to work with, and that's how the Rocket Q avoids the horrible latency spikes that the other QLC drives suffer from.

As on The Destroyer, the 8TB Samsung 870 QVO shows no major differences in performance or efficiency from the 4TB model, which means it's still clearly a bit on the slow side even by SATA standards—especially when full.

AnandTech Storage Bench - Light

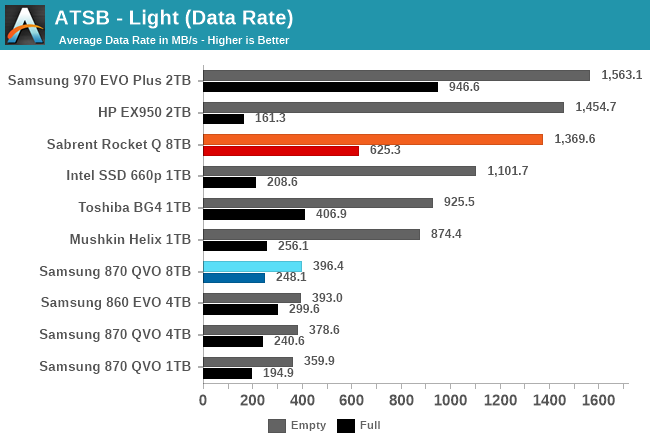

Our Light storage test has relatively more sequential accesses and lower queue depths than The Destroyer or the Heavy test, and it's by far the shortest test overall. It's based largely on applications that aren't highly dependent on storage performance, so this is a test more of application launch times and file load times. This test can be seen as the sum of all the little delays in daily usage, but with the idle times trimmed to 25ms it takes less than half an hour to run. Details of the Light test can be found here. As with the ATSB Heavy test, this test is run with the drive both freshly erased and empty, and after filling the drive with sequential writes.

[Charts: Average Data Rate; Average Latency (overall, read, write); 99th Percentile Latency (overall, read, write); Energy Usage]

The 8TB Sabrent Rocket Q offers decent performance on the Light test, even when full: it still provides a large enough SLC cache to handle all the writes from this test. A lot of smaller drives (using QLC or TLC) can't manage that and show greatly increased write latency on the full-drive test runs.

The 8TB Samsung 870 QVO shows slightly improved latency scores on the full-drive test run compared to the 4TB model, but otherwise performance is the same, as expected. As usual, the 8TB QVO is a bit more power-hungry than the smaller versions, and the Rocket Q is considerably more power-hungry than the smaller low-end NVMe drives.

150 Comments


Great_Scott - Sunday, December 6, 2020

QLC remains terrible and the price delta between the worst and good drives remains $5.

The most interesting part of this review is how insanely good the performance of the DRAMless Mushkin drive is.

ksec - Friday, December 4, 2020

I really wish a segment of the market would move towards high capacity and low speed like QVO. This is going to be useful for things like NAS, where the speed is limited to 1Gbps or 2.5Gbps Ethernet.

The cheapest SSD I saw for 2TB was a one-off deal from Sandisk at $159. I wonder when we could see that being the norm, if not even lower.

Oxford Guy - Friday, December 4, 2020

I wish QLC wouldn't be pushed on us because it ruins the economy of scale for 3D TLC. 3D TLC drives could have been offered in better capacities but QLC is attractive to manufacturers for margin. Too bad for us that it has so many drawbacks.

SirMaster - Friday, December 4, 2020

People said the same thing when they moved from SLC to MLC, and again from MLC to TLC.

emn13 - Saturday, December 5, 2020

There is an issue of decreasing returns, however.

SLC -> MLC allowed for 2x capacity (minus some overhead). I don't remember anybody gnashing their teeth too much at that.

MLC -> TLC allowed for 1.5x capacity (minus some overhead). That's not a bad deal, but it's not as impressive anymore.

TLC -> QLC allows for 1.33x capacity (minus some overhead). That's starting to get pretty slim pickings.

Would you rather have a 4TB QLC drive, or a 3TB TLC drive? that's the trade-off - and I wish sites would benchmark drives at higher fill rates, so it'd be easier to see more real-world performance.

at_clucks - Friday, December 11, 2020

@SirMaster, "People said the same thing when they moved from SLC to MLC, and again from MLC to TLC."

You know you're allowed to change your mind and say no, right? Especially since some transitions can be acceptable, and others less so.

The biggest thing you're missing is that the theoretical difference between TLC and QLC is bigger than the difference between SLC and TLC. Where SLC has to discriminate between 2 levels of charge, TLC has to discriminate between 8, and QLC between 16.

Doesn't this sound like a "you were ok with me kissing you so you definitely want the D"? When TheinsanegamerN insists ATers are "techies" and they "understand technology" I'll have this comment to refer him to.

magreen - Friday, December 4, 2020

Why is that useful for NAS? A hard drive will saturate that network interface.

RealBeast - Friday, December 4, 2020

Yup, my eight drive RAID 6 runs about 750MB/sec for large sequential transfers over SFP+ to my backup array. No need for SSDs and I certainly couldn't afford them -- the 14TB enterprise SAS drives I got were only $250 each in the early summer.

nagi603 - Friday, December 4, 2020

Not if it's a 10G link

leexgx - Saturday, December 5, 2020

If you have enough drives in RAID6 you can come close to saturating a 10gb link (read post above 750MB/s with 8 hdds in RAID6)