Arm Announces Neoverse N1 & E1 Platforms & CPUs: Enabling A Huge Jump In Infrastructure Performance

by Andrei Frumusanu on February 20, 2019 9:00 AM EST

N1 Hyperscale Reference Design

A big part of what defines the N1 Platform as an actual platform is that Arm is offering a full reference design, with a set of IPs that is fully validated by Arm itself.

Here we see three reference designs: a Neoverse N1 hyperscale design, which we’ll get into in more detail shortly, an N1 edge design, and a Neoverse E1 edge design. Arm’s goal with the reference designs is to give vendors “sweet-spot” configuration options that they will then be able to implement with (relatively) minimal effort.

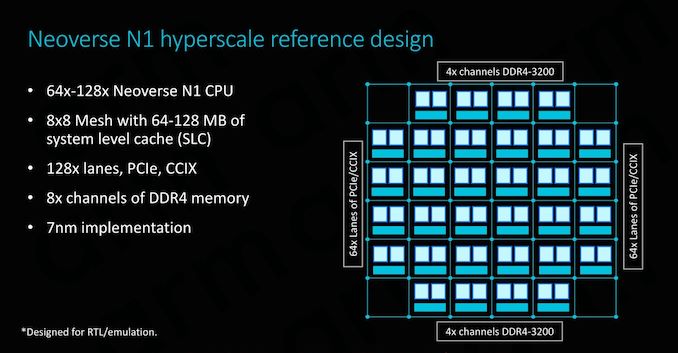

The N1 hyperscale design is what we’ll be covering in more detail as this represents Arm’s most cutting-edge and competitive product.

As covered on the previous page, at the heart we find the Neoverse N1 CPU in either 64 or 128 core configurations, integrated in a CMN-600 mesh network with either 64 or 128MB of SLC cache. We also see 128 lanes of PCIe 4 and CCIX interfaces, which provide plenty of I/O bandwidth.

In terms of memory controllers, Arm employs 8x DDR4 interfaces at up to 3200MHz. Arm has actually abandoned development of its own memory controllers, as customers in most cases opted for their own in-house designs or chose IP from third-party vendors such as Cadence or Synopsys. For the current reference designs, Arm’s own DMC-520 was still up-to-date and a well-understood block for the company, although future designs needing newer memory controllers, such as for DDR5, will have to rely on third-party IP. Naturally, the reference design targets the latest 7nm process node.
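To put the I/O and memory figures above into perspective, here is a back-of-the-envelope sketch of the theoretical peak bandwidths. It assumes that "3200" refers to DDR4-3200 (3200 MT/s on a standard 64-bit channel) and that all 128 lanes run at the PCIe 4.0 rate of 16 GT/s; the constant and function names are made up for illustration, and real-world throughput will be lower once protocol overhead is accounted for.

```python
# Figures taken from the article: 8 DDR4 interfaces at 3200, 128 PCIe 4 lanes.
DDR4_CHANNELS = 8
DDR4_RATE_MTS = 3200          # assumption: DDR4-3200, i.e. 3200 MT/s
DDR4_BYTES_PER_TRANSFER = 8   # standard 64-bit DDR4 data bus (ECC bits excluded)

PCIE_LANES = 128
PCIE4_RATE_GTS = 16           # PCIe 4.0 signalling rate per lane
PCIE4_ENCODING = 128 / 130    # 128b/130b line encoding efficiency


def ddr_peak_gb_per_s(channels, rate_mts, bytes_per_transfer):
    """Theoretical peak DRAM bandwidth in GB/s (MT/s * bytes = MB/s)."""
    return channels * rate_mts * bytes_per_transfer / 1000


def pcie_peak_gb_per_s(lanes, rate_gts, encoding):
    """Theoretical peak PCIe bandwidth per direction in GB/s, before protocol overhead."""
    return lanes * rate_gts * encoding / 8


if __name__ == "__main__":
    ddr = ddr_peak_gb_per_s(DDR4_CHANNELS, DDR4_RATE_MTS, DDR4_BYTES_PER_TRANSFER)
    pcie = pcie_peak_gb_per_s(PCIE_LANES, PCIE4_RATE_GTS, PCIE4_ENCODING)
    print(f"DDR4 peak:  {ddr:.1f} GB/s")
    print(f"PCIe4 peak: {pcie:.1f} GB/s per direction")
```

Under these assumptions the eight memory channels top out at roughly 205 GB/s, while the 128 lanes could move around 252 GB/s in each direction.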

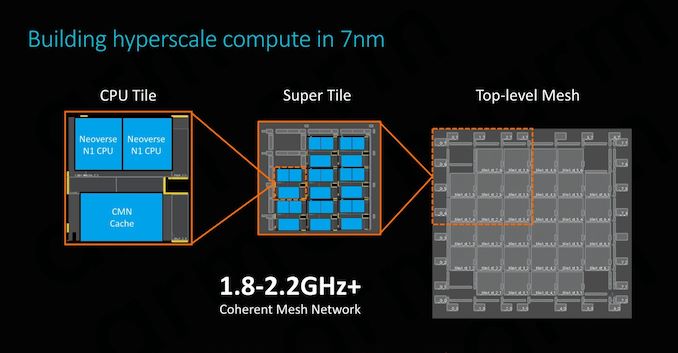

The physical implementation of the SoC would use replicable hierarchical building blocks for ease of design. A “CPU Tile” consists of two N1 CPU cores, a slice/bank of the SLC cache, as well as part of the CMN’s cross points and home-nodes. This CPU Tile is replicated to generate a “Super Tile”; what is added here are the peripheral parts of the SoC such as I/O as well as memory controllers. Finally, replicating the Super Tile in flipped and mirrored implementations results in the final top-level mesh that is to be implemented on the SoC.
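The tile hierarchy described above can be sketched as simple arithmetic. This is only an illustration: the two-cores-per-tile figure comes from the article, but the even split of SLC across tiles and the number of CPU Tiles per Super Tile (8 here) are assumptions, and `MeshConfig` and `super_tile_count` are hypothetical names.

```python
from dataclasses import dataclass

CORES_PER_CPU_TILE = 2  # from the article: a CPU Tile holds two N1 cores


@dataclass(frozen=True)
class MeshConfig:
    """Top-level configuration of a reference design (hypothetical helper)."""
    total_cores: int
    total_slc_mb: int

    @property
    def cpu_tiles(self) -> int:
        # Number of CPU Tiles needed to reach the core count.
        return self.total_cores // CORES_PER_CPU_TILE

    @property
    def slc_mb_per_tile(self) -> float:
        # Assumption: the SLC is divided evenly across all CPU Tiles.
        return self.total_slc_mb / self.cpu_tiles


def super_tile_count(config: MeshConfig, cpu_tiles_per_super_tile: int) -> int:
    """Number of Super Tiles, given an assumed CPU Tile count per Super Tile."""
    if config.cpu_tiles % cpu_tiles_per_super_tile != 0:
        raise ValueError("CPU Tiles do not divide evenly into Super Tiles")
    return config.cpu_tiles // cpu_tiles_per_super_tile


if __name__ == "__main__":
    design = MeshConfig(total_cores=64, total_slc_mb=64)
    print(design.cpu_tiles, "CPU Tiles,", design.slc_mb_per_tile, "MB SLC each")
    print(super_tile_count(design, cpu_tiles_per_super_tile=8), "Super Tiles")
```

For the 64-core, 64MB configuration this works out to 32 CPU Tiles with a 2MB SLC slice each; how those tiles are grouped into Super Tiles is left to the implementer.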

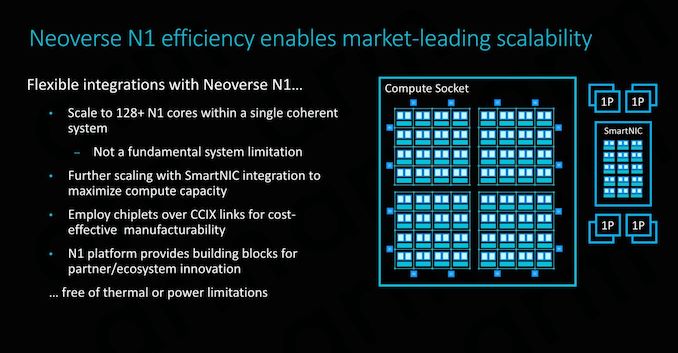

Scaling the design to 128 cores doesn’t represent an issue for the IP, although we’ll be hitting some practical limits in terms of current generation technology. Arm’s 64 core N1 reference design with 64MB of cache on a 7nm process node would result in a die size a little under 400mm², which is probably on the higher end of what vendors would want to target in terms of manufacturability. To alleviate such concerns, Arm also took a page out of AMD’s book and floated the idea of chiplet designs, where each chiplet would communicate over CCIX links. Ultimately it’s up to the vendor to decide how they’ll want to design their solution, and Arm provides the essential building blocks and flexibility to enable this.
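To illustrate why die size matters for manufacturability, here is a rough sketch using the textbook Poisson yield model, Y = exp(-A × D0). This is not something Arm presented: the defect density of 0.1 defects/cm² is a purely hypothetical value, and the comparison ignores the extra area and packaging cost that chiplet interfaces would add.

```python
import math


def poisson_yield(die_area_mm2: float, defects_per_cm2: float) -> float:
    """Fraction of defect-free dies under a simple Poisson defect model."""
    area_cm2 = die_area_mm2 / 100.0  # 100 mm^2 per cm^2
    return math.exp(-area_cm2 * defects_per_cm2)


if __name__ == "__main__":
    D0 = 0.1  # hypothetical defect density in defects/cm^2, for illustration only
    monolithic = poisson_yield(400, D0)  # one die of roughly 400 mm^2
    chiplet = poisson_yield(100, D0)     # one of four assumed 100 mm^2 chiplets
    print(f"400 mm^2 die yield:     {monolithic:.3f}")
    print(f"100 mm^2 chiplet yield: {chiplet:.3f}")
```

Whatever the real defect density is, the model shows the general trend: yield falls off exponentially with area, so splitting a large die into smaller chiplets wastes less silicon per defect.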

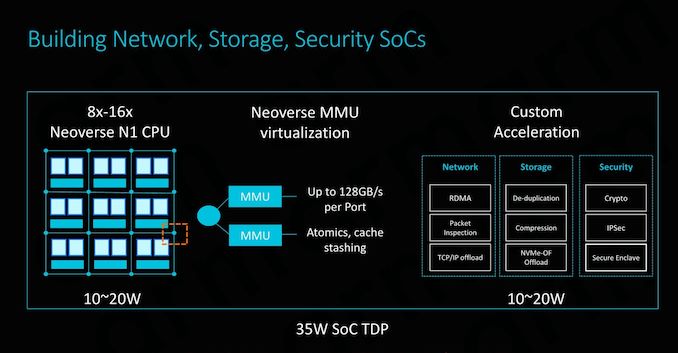

SmartNIC integration capability is also an important aspect of the design and its flexibility. To maximise compute capacity in large-scale systems, having accelerated network connectivity is key to actually achieving high throughput in the densest (and most efficient) form-factor possible.

The CMN-600 allows for slave ports on its crosspoints: Here we can see MMUs connected with high bandwidth interfaces of up to 128GB/s. Attaching fixed-function hardware offloading IP thus would be extremely easy to implement.

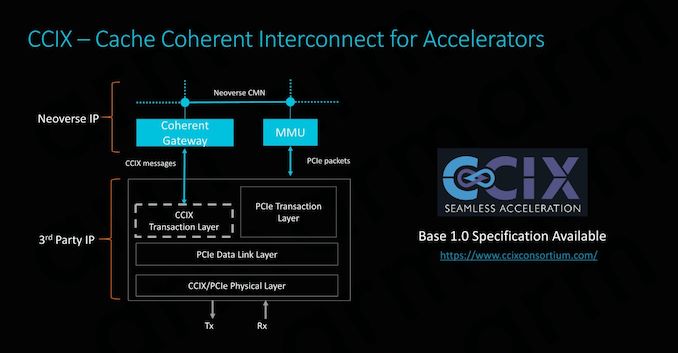

CCIX is extremely important for Arm as it enables its product portfolio to integrate with third-party IP offerings. Enabling cache coherency for external IP blocks is an incredibly attractive feature to have as it massively simplifies software design for the vendors. Essentially what this means is that software simply sees a single huge block of memory, whereas non-coherent systems require drivers and software to be aware of and track which parts of memory are valid and which aren’t. In terms of IP integration, Arm provides the CCIX coherent gateway that integrates with the CMN-600, while on the other side the onus is on the third-party IP provider to provide the CCIX translation layer.

Xilinx will be among the first vendors to offer CCIX-enabled end-products, in Q3 2019. With AMD also fully embracing CCIX, there’s some very exciting future potential for third-party accelerator hardware, and we will be seeing new use-cases that just weren’t feasible before.

Power/Performance management

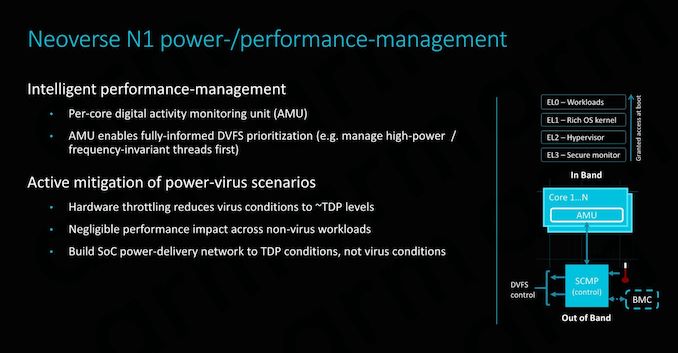

While it’s a bit weird to talk about power management in the context of implementation scalability (the average reader might think of it as a thermal/cooling consideration), there are some very interesting implications in terms of how Arm simplifies the work that needs to be done by the vendor.

Alongside a chip’s logical design, a vendor must also implement a power delivery network (PDN) that will be able to adequately support the IP. In real-world use-cases this means that the PDN needs to be robust enough to deal with the worst-case power scenario of a component. This is actually quite a headache for many vendors as the design requires complex models and in most cases the PDN will need to be over-engineered in order to offer guarantees of stability, which in turn raises the complexity and cost of the implementation.

Arm seeks to alleviate these concerns by offering extremely fine-grained DVFS mechanisms in the form of a dedicated micro-controller. The controller accesses detailed activity monitoring units inside the CPU cores, seeing which blocks and how many transistors are actually switching, and feeds this information back to the system controller to change DVFS states. This provides a certain level of hard guarantee that the system can detect when the CPU enters power-virus-like workloads, which can cause current spikes, and avoid them in time. This enables vendors to design their PDNs with less over-engineering, saving on implementation cost.
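Conceptually, the feedback loop described above could look something like the following sketch. It is not Arm's firmware: the operating points, the effective capacitance value, and the function names are all hypothetical, and the power estimate is just the usual dynamic power approximation P ≈ activity × C × V² × f.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OperatingPoint:
    freq_mhz: int
    voltage_v: float


# Hypothetical DVFS table, ordered from slowest to fastest.
OPERATING_POINTS = [
    OperatingPoint(1000, 0.70),
    OperatingPoint(2000, 0.80),
    OperatingPoint(2600, 0.90),
    OperatingPoint(3100, 1.00),
]


def estimate_power_w(activity: float, op: OperatingPoint, ceff_nf: float = 1.0) -> float:
    """Dynamic power estimate: activity * C * V^2 * f (ceff_nf is an assumed value)."""
    return activity * ceff_nf * 1e-9 * op.voltage_v ** 2 * op.freq_mhz * 1e6


def select_operating_point(activity: float, budget_w: float) -> int:
    """Return the index of the fastest operating point that fits the power budget.

    `activity` stands in for the reading of the activity monitoring units
    (0.0 = idle, 1.0 = every monitored block switching). Falls back to the
    slowest point if nothing fits.
    """
    for index in range(len(OPERATING_POINTS) - 1, -1, -1):
        if estimate_power_w(activity, OPERATING_POINTS[index]) <= budget_w:
            return index
    return 0


if __name__ == "__main__":
    for activity in (0.5, 1.0):
        idx = select_operating_point(activity, budget_w=2.0)
        print(f"activity={activity}: {OPERATING_POINTS[idx].freq_mhz} MHz")
```

In this toy model a moderate workload is allowed to run at the top frequency, while a power-virus-like workload with every block switching is pushed down to a lower DVFS state before it can exceed the budget the PDN was designed for.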

101 Comments

wumpus - Thursday, February 21, 2019 - link

This was supposed to be a reply to "X86 is less efficient than RISC".

Neutral - Wednesday, February 20, 2019 - link

"All of these new microarchitectures are important to Arm because they represent an infliction point in the market"...

Dictionary result for infliction

/inˈflikSHən/

noun

the action of inflicting something unpleasant or painful on someone or something.

Ryan Smith - Wednesday, February 20, 2019 - link

Whoops, there's one heck of a typo. Thanks!

phoenix_rizzen - Thursday, February 21, 2019 - link

There's several other typos, incorrect word usage, and "correctly spelled but incorrect word" errors in this article.

Excellent article with a *lot* of great information. But a lot of niggles that should have been picked up by an editor before it was published.

GreenReaper - Wednesday, February 20, 2019 - link

They're bringing *pain* to the competition! :-D

Antony Newman - Wednesday, February 20, 2019 - link

Ryan : I think I counted several (6?) typos - a spellcheck will pick up most of them (there was also a DDR related one with a missing letter).

AJ

eastcoast_pete - Wednesday, February 20, 2019 - link

@Andrei: Thanks! Sounds very promising, I look forward to ARM-based solutions giving Intel (and AMD) some competitive pressure in the server space, now that SPARC and Power are either down or almost out of this market. Question: Any mention of Microsoft server-type applications being ported to run native on ARM64/Neoverse? That would open a large market for Neoverse and following designs.

Other comments: So, did Qualcomm get out of the server market exactly at the wrong time? Sure looks that way. Huawei played it smart, using its development and know-how from the mobile space to make itself a serious contender for ARM-based servers (China's push to have non-US solutions for their home markets doesn't hurt them, either).

The big technical questions for me are: What will the performance and energy use of the CMN-600 mesh network and CCIX be? AMD is trying its chiplet approach, but their first generation had high energy consumption and some performance degradation by the interconnect, which still has them at a disadvantage to Intel's all cores on one die approach. However, scaling up becomes prohibitive as the die gets bigger and transistor counts get higher. I see the interconnect tech as the next big thing for servers and HPC. If they (ARM) and partners can pull this off and be the first with a high-performance, energy-efficient interconnect, they can clobber Intel or AMD just by combining more and more cheap, high-performing cores using chiplets, and do so at a much lower per-core and per-performance cost.

SarahKerrigan - Wednesday, February 20, 2019 - link

Power is not even remotely out of the server market. IBM sells billions of dollars of servers per year, and the top two systems on Top500 are Power.

eastcoast_pete - Wednesday, February 20, 2019 - link

Didn't say that Power is completely out of the server market; SPARC is, thanks to Larry. However, while Power is big in HPC and Supercomputing, it's become rare these days to find a Power-based unit in a server closet or rack for more general use, especially outside of large enterprises. IBM also sells quite a number of Intel-based servers, and I believe that accounts for a sizeable chunk of their remaining server business.

SarahKerrigan - Wednesday, February 20, 2019 - link

That's not accurate. IBM offloaded their x86 server business (to Lenovo) in 2014. At this point, it is just z mainframes and Power.

My company owns a pair of Power systems, one single-socket and one two-socket, and we're pretty small. It's not just large enterprises. OpenPower has brought down entry costs significantly.