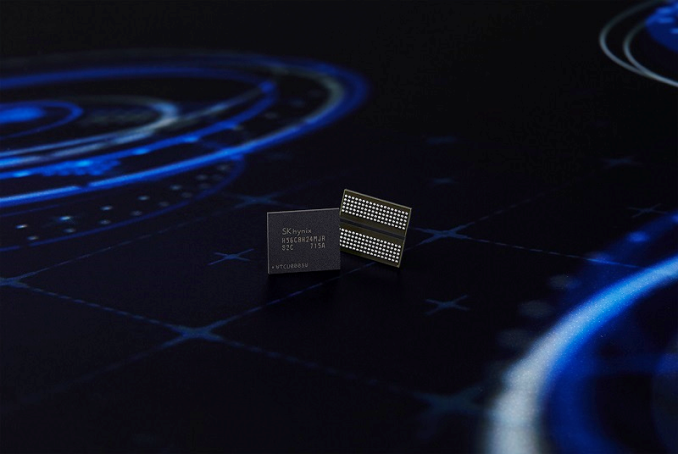

SK Hynix to Ship GDDR6 Memory for Graphics Cards by Early 2018

by Anton Shilov on April 30, 2017 11:00 AM EST

In a surprising move, SK Hynix has announced its first memory chips based on the yet-unpublished GDDR6 standard. The new DRAM devices for video cards have a capacity of 8 Gb and run at a 16 Gbps per-pin data rate, which is significantly higher than both standard GDDR5 and Micron's unique GDDR5X format. SK Hynix plans to produce its GDDR6 ICs in volume by early 2018.

GDDR5 memory has been used for top-of-the-range video cards for nearly nine years, from summer 2008 to the present. Throughout its active lifespan, GDDR5 increased its data rate by 2.5 times, from 3.6 Gbps to 9 Gbps, whereas its per-chip capacity increased 16-fold, from 512 Mb to 8 Gb. In fact, numerous high-end graphics cards, such as NVIDIA’s GeForce GTX 1060 and 1070, still rely on GDDR5 technology, which is not going anywhere even after the 2016 launch of Micron's GDDR5X with a data rate of up to 12 Gbps per pin. It appears that GDDR6 will be used for high-end graphics cards starting in 2018, just two years after the introduction of GDDR5X.

SK Hynix is not disclosing many details about its GDDR6 chips, but it has revealed that they are 8 Gb devices with a 16 Gbps data transfer rate, manufactured on SK Hynix's 21 nm process technology. The company also states that GDDR6 will have a 10% lower operating voltage than GDDR5, though it does not specify whether that is relative to the low-voltage (1.35 V), standard (1.5 V) or high-frequency (1.55 V) version of GDDR5.
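To make the ambiguity concrete, here is a minimal sketch of what a 10% reduction would mean against each of the three GDDR5 voltages mentioned above. The names `GDDR5_VOLTAGES` and `reduced_voltage` are illustrative only, and the results are simple arithmetic on the article's figures, not numbers published by SK Hynix.

```python
# Hypothetical helper: apply a fractional reduction to each GDDR5 baseline voltage.
# The three baselines and the 10% figure come from the article; which baseline
# SK Hynix actually meant is not known.

GDDR5_VOLTAGES = {
    "low voltage": 1.35,
    "standard": 1.5,
    "high frequency": 1.55,
}

REDUCTION = 0.10  # "10% lower operating voltage"


def reduced_voltage(baseline_v: float, reduction: float = REDUCTION) -> float:
    """Return the baseline voltage lowered by the given fraction, rounded to mV."""
    return round(baseline_v * (1 - reduction), 3)


gddr6_candidates = {
    name: reduced_voltage(volts) for name, volts in GDDR5_VOLTAGES.items()
}

for name, volts in gddr6_candidates.items():
    print(f"10% below {name} GDDR5: {volts} V")
```

Depending on the baseline, the claim works out to roughly 1.22 V, 1.35 V or 1.40 V, which is why the unspecified reference point matters.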

What is noteworthy is that SK Hynix does disclose some details about the first graphics card to use its GDDR6 memory. That adapter will reportedly have a 384-bit memory bus and will thus support memory bandwidth of up to 768 GB/s. Given the number of chips required for a 384-bit memory sub-system, it is logical to assume that the card will carry 12 GB of memory. SK Hynix is not disclosing the name of its partner among GPU developers, but it is logical to assume that we are talking about a high-end product that will replace an existing graphics card.
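The bandwidth and capacity figures follow from simple arithmetic, sketched below. The function names are hypothetical, and the 32-bit interface per chip is an assumption (it is the usual width for GDDR5-class devices, but the article does not state it for these GDDR6 parts).

```python
# Rough sketch of the arithmetic behind the 768 GB/s and 12 GB figures.
# ASSUMPTION: each memory chip has a 32-bit interface (not stated in the article).


def peak_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s: bus pins x Gbps per pin / 8 bits per byte."""
    return bus_width_bits * data_rate_gbps / 8


def chip_count(bus_width_bits: int, chip_io_bits: int = 32) -> int:
    """Number of chips needed to populate the bus, one chip per 32-bit slice."""
    return bus_width_bits // chip_io_bits


def total_capacity_gb(chips: int, chip_density_gbit: int) -> float:
    """Total capacity in gigabytes from per-chip density in gigabits."""
    return chips * chip_density_gbit / 8


bandwidth = peak_bandwidth_gb_s(384, 16)   # 384-bit bus, 16 Gbps per pin
chips = chip_count(384)                    # chips on a 384-bit bus
capacity = total_capacity_gb(chips, 8)     # 8 Gb per chip

print(f"{bandwidth:.0f} GB/s from {chips} chips, {capacity:.0f} GB total")
```

Under the same assumption, a 384-bit bus with 12 Gbps GDDR5X would come to `peak_bandwidth_gb_s(384, 12)`, or 576 GB/s, which gives a sense of the uplift GDDR6 offers at the same bus width.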

Unlike GDDR5X, GDDR6 is expected to be manufactured by all three major DRAM makers, and consequently should be available more widely. SK Hynix believes that GDDR6 will supplant both GDDR5 and GDDR5X relatively quickly. Nonetheless, keep in mind that while it took GDDR5 a relatively short amount of time to replace GDDR4 on high-end graphics cards in 2008 – 2009, it then took the memory standard years to replace GDDR3 on mainstream adapters.

Source: SK Hynix

28 Comments

yhselp - Monday, May 1, 2017

Sounds a lot like NVIDIA, and possibly AMD as well, has decided against HBM for GeForce, which is a shame.

MrSpadge - Monday, May 1, 2017

No, they'll use it when it makes sense. For consumer cards GDDR5/X/6 can provide enough bandwidth without major additional costs. I'm sure they will continue to use HBM or some later form of it at least for the highest end chips, where

- the additional cost is no problem

- the additional bandwidth is actually needed or helpful

- the improved power efficiency allows them to fit more performance into the highest TDP bin (250 W)

yhselp - Tuesday, May 2, 2017

If they were to pass those cost savings onto the consumer, I'd be okay with it, but since they're charging ludicrous premiums - no amount of inflation or R&D can account for the spike from $250 in 2011 (GF114, 360 mm2) to $600 in 2016 (GP104, 314 mm2) for a video card based on a medium-sized GPU. The same goes for the entire lineup; not to mention the delay of the real flagship based on the large-sized GPU that used to come out at the beginning of every cycle.

So not only are they super greedy, seeking enterprise margins from consumers, but they wouldn't even release the latest technologies, and we're okay with that? Not cool.

TheJian - Wednesday, May 3, 2017

ROFL. It's taken Nvidia 10 YEARS to get back to 2007 profits. BTW, the job of EVERY company is to make as much money as possible. The goal of any company is also to price products as high as the market will take. PERIOD. Failing that, you end up like AMD. LOSS after loss, year after year.

Note each shrink is now becoming VERY costly to make, and gpu sales are WAY down from their highs years ago. With much less market to fight over, dwindling PC sales for ages, R&D costs exploding, die shrinks exploding, etc you should expect prices to go up on the latest tech. We used to sell ~390mil PC's a year, and now that's down to ~300mil. Down again this Q. That's a quarter of the market dead. Thank god gaming PC's have taken off or you'd really be getting sticker shock from GPU's.

Newsflash genius, NV only has 58% margins and most of that comes from cards in the pro end where margins are really high. Also note, the gpu is not $600, that's for the whole card. NV/AMD don't walk off with hundreds of dollars on your typical gamer cards. On top of the shrinking market here, Nvidia for example went from 698mil on R&D in 2008 to 1.5B R&D yearly now (and it keeps going up). So give it a rest, or go get a better job if you can't afford new toys. You get an amazing amount of performance from all sides (AMD, Intel, NV) on PC's today for the money.

My first PC when I was a kid (ok, apple //e, whatever, it was a PC) cost my parents over $3K (gave up a vacation for it) and wasn't even a multi-color monitor at 13in...LOL. Today I can get a pretty great PC for gaming with a huge monitor etc for under a grand easy. You choose what you buy. Nobody is forcing you to buy 1080ti's etc. Be thankful some people are buying at ridiculous prices so the majority of us can get some really great tech at reasonable pricing. You complain about higher end stuff but seem to fail to realize those sales are exactly what pays for a really great mid-range everything (cpu, gpu, ssd's etc etc).

AMD had better start getting more "greedy" or they're dead. They just had a full quarter's worth of ryzen sales (brand new cpu tech) and couldn't make a profit and margins are a paltry 34%. Vega is going to have a real challenge with 1080ti etc (volta can come out if NV really wants for xmas) so I don't expect that to help. The only thing AMD has coming that will pull in some real margins is 16 core and up for desktop (HEDT whenever they hit) and server. I really hope they told MSFT/Sony we now want 25% margins period on consoles or go fly a kite. The single digit margins they started with on xbox1/ps4 was stupid and should have been passed on like NV did (not worth blowing R&D on that, when it should be spent on CORE tech Jen said...He was right). To date I haven't heard AMD made it above 15% (they said mid teens last I heard) margins on consoles and sales are nowhere near enough to be worth costing them the cpu race, and basically the gpu race for ages now. Not saying they have a bad gpu, just that NV always has something better (either in watts or perf or both), which gives them PRICING power. Same for AMD and Intel cpus. That said I can't wait for AMD's server stuff as I think that is the real cash cow that can get them back to profits for a few years perhaps and maybe kill some debt (badly needed). If Vega really is good, I REALLY hope they price it accordingly and get every dollar they can from it. If it BEATS a 1080ti in more games than it loses, price it like or better than 1080ti! No favors, no discounts. Quit the stupid price war that you can't win with NV. Perf should be there, it's just a question of drivers and watts IMHO. If they are close to NV watts, and charge accordingly I don't expect NV to be price cutting. Jen has said for years he wishes they'd stop their stupid pricing. Intel on the other hand never avoids a price war with AMD, so better to price right on gpus where NV would rather NOT have one.

Don't get me wrong I love a great price too, but AMD can't afford any favors. I have zero complaints about NV pricing either. Whatever price I decide to pay for my next product (from either side) you get a great deal. It's up to you how good that bargain is. Is that last 10% perf worth it or not? It all depends on your wallet I guess. Or do you draw that line at the last 20%? Whatever, same story. Great 1080p gaming can be had by even poor people today. Above that YMMV and you get what you pay for for the most part. Steam and GOG can fill a poor person's HD yearly with entertainment to run on that PC. Dirt poor? Don't buy those games until they're "last years" games or even further back. It's not hard to have a great time at a decent price (hardware or games). Again, enhance your paycheck if you can't afford what you want. It's not their job to fill your hearts desires at prices you love. :)

fanofanand - Monday, May 1, 2017

I would assume it will be AMD, Nvidia rarely takes a risk with new tech. This will be nice for midrange and lower-end cards when the true high-end are all HBM.

iwod - Monday, May 1, 2017

The amount of bandwidth required pretty much scales linearly with resolution and GPU die space. The more GPU units, the more data are required to feed them.

The current state of the art 14nm GPU already approaches this memory bandwidth @ 384-bit for GDDR5X. GDDR6 basically allows Nvidia to scale the Titan XP to 7nm (TSMC 10nm is a short node literally made for Apple only).

Again delaying HBM2 appearance.

So GDDR6 will be cheaper than HBM2, provide much of the bandwidth needed for Next Gen GPU, and less complication / changes to GPU design. Apart from lower Watt / bandwidth, what is the advantage of HBM? I am pretty sure those using 250W GPU don't care about the extra 10 or 20W power.

Haawser - Thursday, June 8, 2017

Pseudo channels that can do simultaneous R/W, lower tEAW, temp based refresh, smaller packaging, optional ECC, to name a few.

In comparison, faster GDDRX is a bit like putting a new shade of lipstick on a Pig. Different, but not really much more attractive.

blaktron - Monday, January 15, 2018

Small quibble, GDDR5 replaces GDDR3 not 4. Almost everyone skipped 4.

Funny how quickly we forget our history.